Written by: Mykhailo Ralduhin, Senior QA Engineer

Posted: 30.04.2026


Though invisible, embedded systems are all around us – from microwaves and washing machines to automotive braking control systems and fitness trackers. The global embedded systems market is projected to reach US$283.90 billion by 2034, with a CAGR of 4.77%.

Industrial control and automation dominate embedded system projects (29%), followed by IoT (24%), communications (21%), and automotive (19%). Other significant sectors include consumer electronics, medical devices, and AI-based applications.

As you can see, embedded systems are widely used in domains where even a tiny mistake can cause safety hazards, financial losses, downtime, or regulatory issues. That's why effective embedded testing is essential for preventing such failures and ensuring high quality and reliability.

What is embedded system testing?

Embedded system testing validates the correct operation of a system that combines hardware and software to perform a specific function within a larger mechanical or electrical system.

The testing process covers both functional and non-functional aspects of embedded systems. While the former checks if a system operates flawlessly and as expected, the latter focuses on validating performance, security, reliability, compliance, etc.

Due to the unique nature of embedded systems, QA and software testing also take into account their key features – hardware-software integration, resource constraints, low power consumption, and real-time responsiveness.

Hardware-software integration: Embedded systems are defined by the tight coupling of software with specific hardware.

Why it matters:

Even if software works well in a simulated or development environment, it might behave differently on actual hardware due to timing, pin-level dependencies, or hardware interrupts.

Any mismatch in communication between components can cause system failures.

Hardware-software integration testing is critical when it comes to industrial automation equipment, robotics, medical devices, etc.

What to test:

Verifying correct software interaction with hardware drivers and peripherals

Ensuring proper execution of time-sensitive operations

Using hardware-in-the-loop (HIL) simulation to mimic real-world conditions

Common issues:

Hardware signals aren’t recognized by firmware

Malfunctioning peripheral initialization

Data corruption due to timing mismatches

Resource constraints: Embedded systems often run on constrained RAM, CPU, storage, or bandwidth.

Why it matters:

Resource overflow can crash the entire system.

Inefficient use of memory or processing power leads to sluggish behavior or power drain, which is unacceptable for wearables, medical monitoring devices, smart appliances, etc.

What to test:

Memory usage analysis

CPU load profiling under different workloads

Code footprint and optimization checks

Static code analysis for memory misuse detection

Common issues:

Unoptimized loops or function calls

Memory fragmentation

Inadequate buffer sizes

Low power consumption: Embedded systems are optimized for energy efficiency, making them ideal for battery-powered or energy-critical devices.

Why it matters:

Power-hungry systems reduce product usability and fail to meet battery-life promises.

Devices must enter and exit low-power modes reliably.

What to test:

Measuring power consumption in various states: active, idle, sleep

Verifying correct transitions between power modes

Simulating worst-case energy scenarios

Common issues:

High idle power draw

Missed sleep mode transitions

Unnecessary peripheral activation

Real-time operation: Most embedded systems must respond to events or inputs within strict deadlines.

Why it matters:

A correct but late response can be catastrophic in critical systems like automotive braking systems or industrial robots.

What to test:

Response time under load

Interrupt latency and priority handling

Deadline adherence and jitter

Common issues:

Task starvation or priority inversion

Slow ISR handling

Scheduling conflicts in RTOS environments

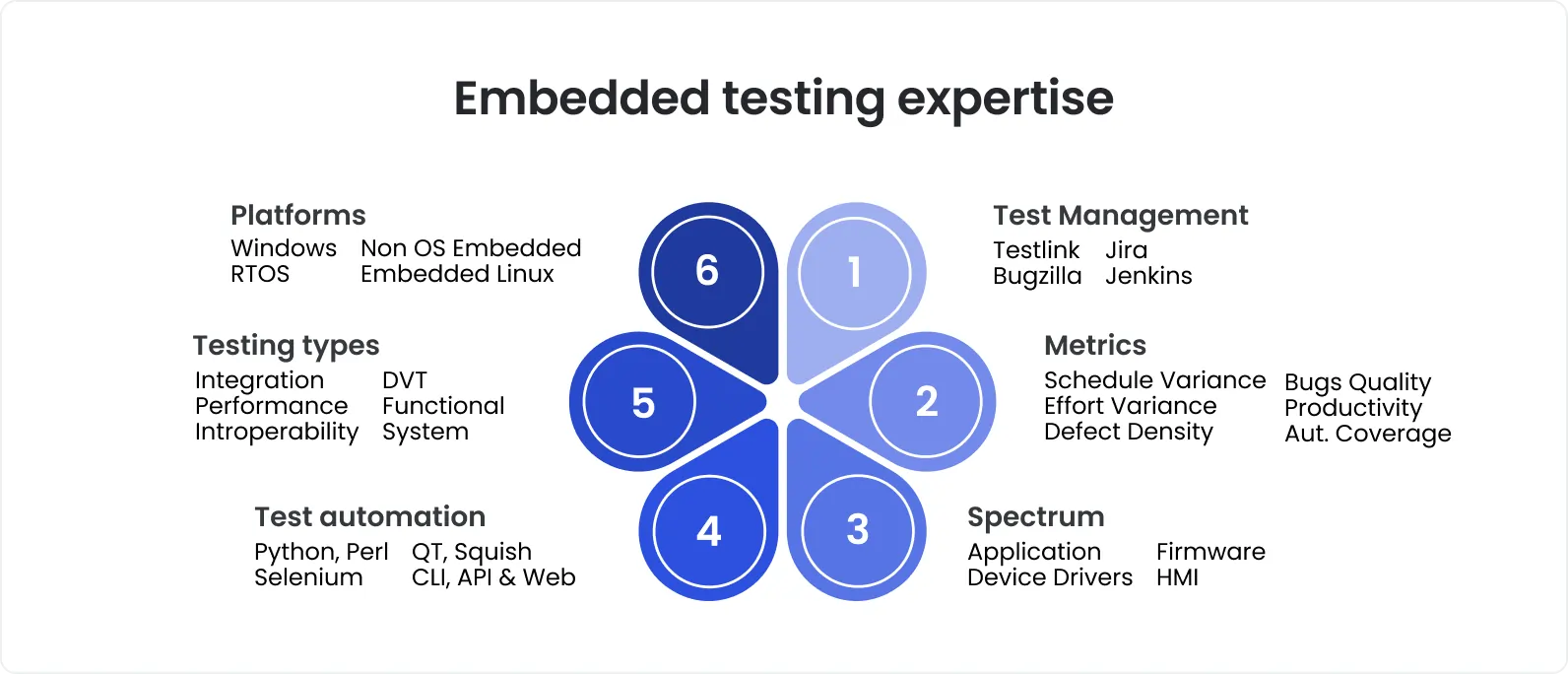

Testing embedded software is a far cry from testing general-purpose software. It requires specialized skills, deep technical expertise, and dedicated tools, all of which QA outsourcing brings to the table.

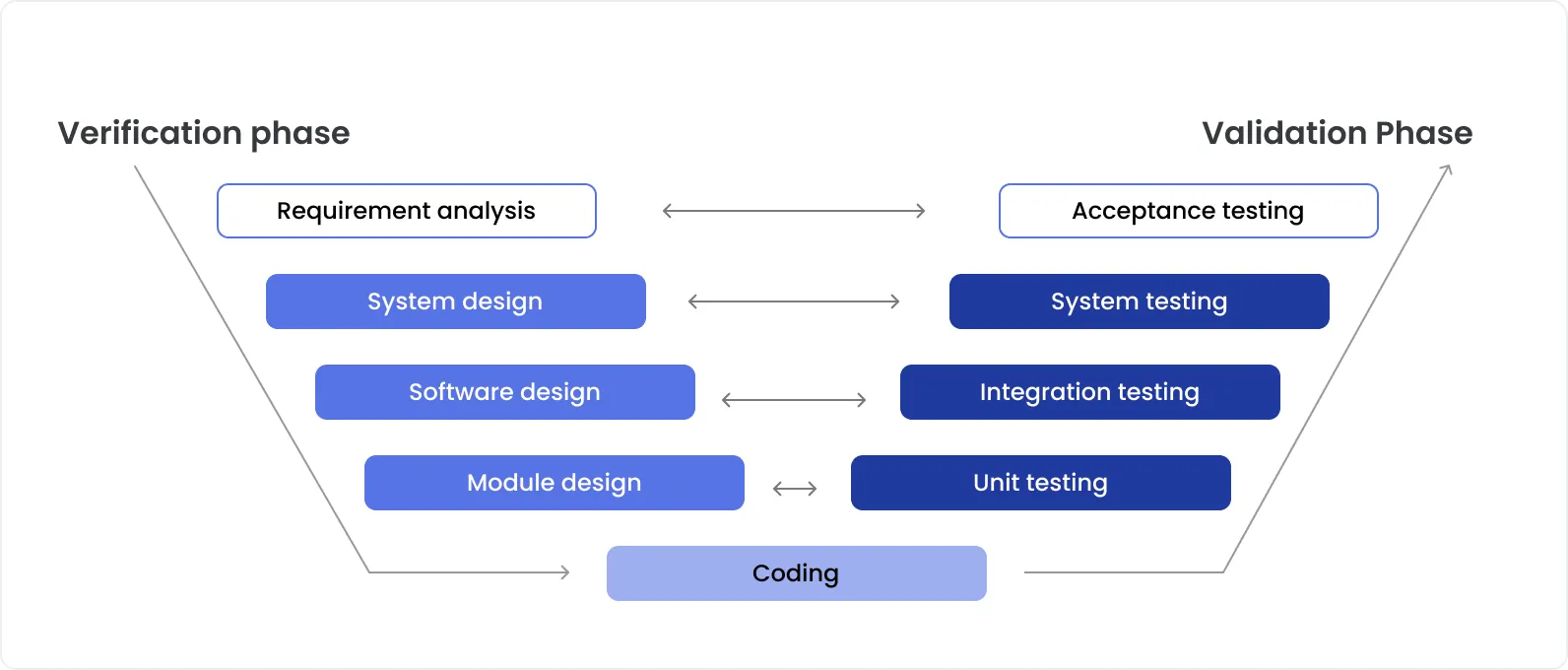

Testing levels relevant for embedded systems

Testing for embedded systems requires a layered, comprehensive approach that checks not only software functionality but also its interaction with hardware components under real-world constraints. Thorough embedded system validation involves executing tests at the well-known levels – unit, integration, and system.

Unit testing

Unit testing verifies the smallest testable components of embedded software – usually C/C++ functions, classes, or modules. To ensure proper testing, these units are isolated by abstracting dependencies on hardware behind interfaces or by using mock objects or stubs.

Let’s say you are developing firmware for a battery management system. In this case, a unit test might check the function that calculates the remaining charge from voltage levels. Instead of using a real voltage input, a QA engineer would use a stub function that returns predefined values, validating the logic in isolation before moving to physical hardware.

What tools can be used for embedded unit testing? Two of the most widely adopted frameworks are Unity and CppUTest.
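The stub idea from the battery example can be sketched as follows. This is only an illustration written in Python for brevity; on a real firmware project the same pattern would normally be written in C or C++ with a framework such as Unity or CppUTest. The function name `remaining_charge_percent`, the linear 3.0–4.2 V model, and the `make_voltage_stub` helper are all hypothetical.

```python
from typing import Callable

# Assumed cell limits for illustration only; real values come from the cell datasheet.
V_EMPTY = 3.0
V_FULL = 4.2


def remaining_charge_percent(read_voltage: Callable[[], float]) -> int:
    """Estimate remaining charge from a voltage reading using a simple linear model.

    The voltage source is injected, so a test can pass a stub instead of an ADC driver.
    """
    voltage = read_voltage()
    fraction = (voltage - V_EMPTY) / (V_FULL - V_EMPTY)
    # Clamp so out-of-range readings never produce values outside 0..100.
    fraction = max(0.0, min(1.0, fraction))
    return round(fraction * 100)


def make_voltage_stub(value: float) -> Callable[[], float]:
    """Return a stub that always reports the same predefined voltage."""
    return lambda: value
```

A test then calls `remaining_charge_percent(make_voltage_stub(3.6))` and checks the result, with no hardware involved. Because the reader is passed in as a parameter, the production build can supply the real driver without changing the logic under test.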

Integration testing

Integration testing checks interactions between modules of embedded software and between software and hardware. This is critical because the operation of embedded systems depends not just on software logic but also on well-synchronized interactions with hardware components.

In embedded environments, integration testing has unique complexities, including the following:

Timing and synchronization

Embedded systems often work under real-time constraints. In other words, interactions must occur within strict time frames. During integration testing, artificial test environments might mask these issues, leading to surprises later during real-world operation.

Hardware dependencies

A software module might function perfectly when tested against a simulated peripheral but fail when integrated with the real hardware due to differences in timing, voltage levels, or protocol quirks.

Limited observability

Embedded systems have restricted output capabilities, which makes it difficult to monitor internal states without introducing intrusive debugging tools that can themselves alter system behavior. Resource constraints can also cause integration failures that are not evident during isolated module tests.

System-level component testing

System-level component testing validates major subsystems on their own before they are tied into the full system. The objective is to make sure each subsystem works exactly as needed so that when everything is integrated together later, problems do not snowball.

The recipe for effective system-level component testing is the isolation of the subsystem under test from the noise of the rest of the system. For this, we use mock interfaces, harnesses, or test boards that take full control over inputs and monitor outputs.

Another important aspect of system-level component testing is the right balance between simulation and physical testing. You have to do both, knowing when to lean more toward one or the other. Simulation works well in the early phases when hardware is not yet available or when running extensive tests on physical hardware would be too risky or time-consuming.

But simulation alone is never enough. Testing on physical hardware catches things simulations miss – things like connector tolerances, EMI, thermal coupling effects, sensor noise, and real-world wear and tear.

We usually aim for simulation to handle about 70-80% of the verification early on, giving us speed and broad coverage. Physical testing kicks in when hardware is stable enough to trust. It focuses on real-world behavior, stress testing, and fine-tuning.

Full system integration testing

Full system integration testing verifies the combination of all subsystems previously tested in isolation to make sure the system as a whole works seamlessly under real-world conditions.

For example, testing a wearable device would include simulating a user powering on the device, connecting to a mobile app, reading sensor data, sending that data over Bluetooth, and handling incoming notifications. It’s important to check how the system behaves not only under ideal conditions but also under edge cases and stress situations. For instance, the system might be run at low battery, with a weak wireless signal, and while performing CPU-intensive tasks.

One of the key challenges of full system integration testing is managing complexity. Now, you handle dozens of inputs and outputs of multiple subsystems. Tracing failures becomes difficult because symptoms might show up far away from the root cause. A brief brownout in the power rail could corrupt memory, and the consequences become visible hours later when corrupted data is finally accessed. To tackle this, detailed logging, event tracing, and error detection and reporting mechanisms inside the system are used.

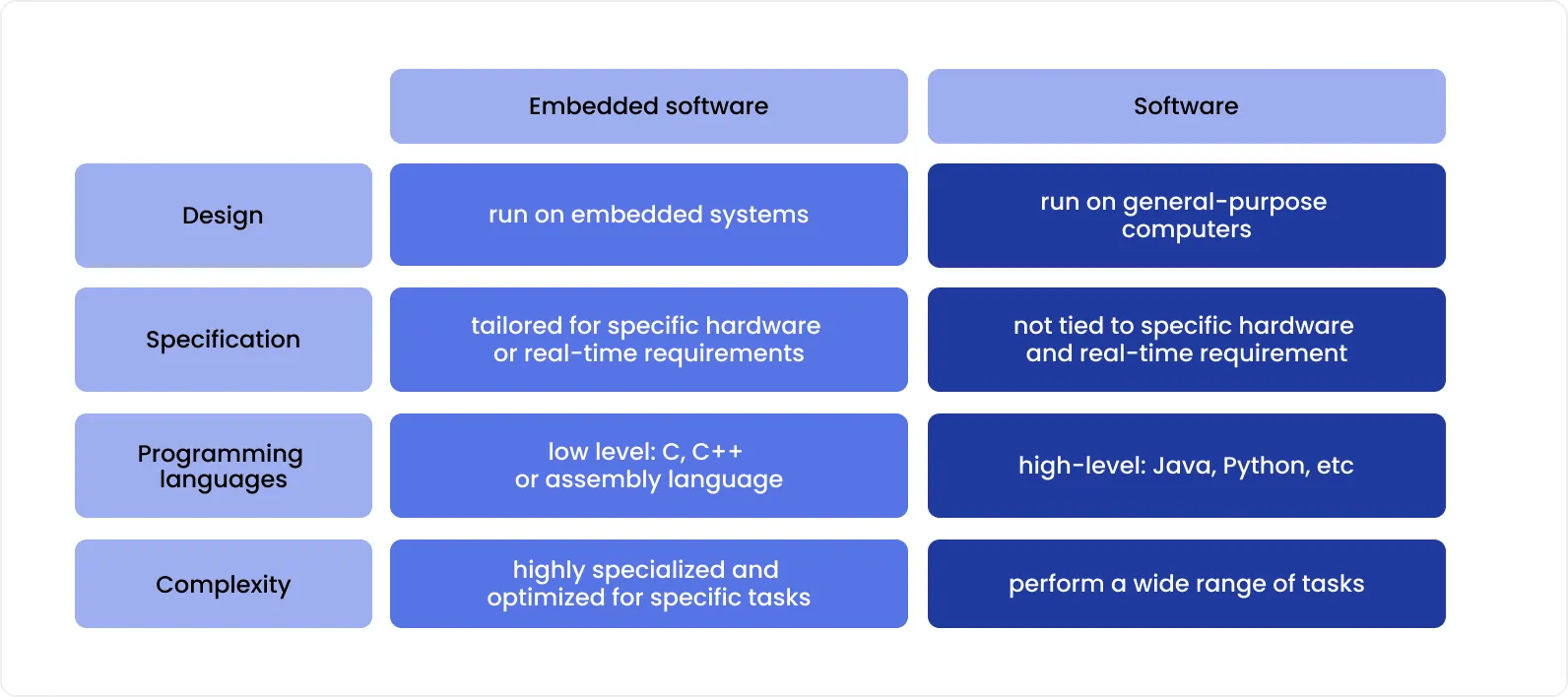

Embedded testing vs. general-purpose software testing

Embedded software and general-purpose software represent two distinct domains in the software development landscape, each requiring specialized testing approaches. While both aim to deliver reliable, functional software, the fundamental differences in their environments, constraints, and purposes create unique testing challenges.

While they share the same fundamental QA principles and pursue the same goal, embedded system testing and general-purpose software testing differ significantly in techniques, approaches, risks, challenges, complexity, automation level, and other nuances. The table below summarizes the differences you need to know.

| Aspect | Embedded system testing | General-purpose software testing |
| --- | --- | --- |
| Scope of testing | Testing both software and hardware to ensure their smooth integration. | Focusing solely on software applications. |
| Hardware dependency | High. Physical components like sensors, actuators, and microcontrollers are involved. | Low. Usually, testing is independent of specific hardware. |
| Environment | Tests are executed on target devices or simulators. | Tests are usually executed in software-based or cloud environments. |
| Tools | Specialized tools like oscilloscopes, HIL simulators, emulators, and debuggers are leveraged. | Standard software testing tools (e.g., Selenium, Postman, JUnit) are used. |
| Complexity | Testing is more complex due to hardware-software integration and real-time constraints. | Testing is simpler, as it covers software logic only. |
| Automation | Automation is more challenging because of the dependency on hardware. Yet, embedded software testing tools, HIL testing, SIL, and MiL simulations facilitate automated testing. | There is high potential for automation. |
| Cost and time | It’s often more time-consuming and costly because of manual and hardware testing. | It can be less costly and faster thanks to automation. |
| Failure impact | High: failures can lead to hardware malfunction or safety risks. | Usually low: issues may degrade user experience but are not life-threatening. |
| Debugging approach | Debugging is often done via hardware interfaces and requires device-level insight. | Debugging is usually done through logs, IDEs, and remote debugging. |

As you can see, embedded system testing is more comprehensive, covering both hardware and software. It is also more complex, dependent on hardware, and often more costly due to physical hardware involvement.

A dedicated QA team that specializes only in general-purpose software testing lacks the expertise needed to deliver quality testing services for embedded systems. We strongly recommend bringing in professionals with hands-on experience in embedded testing. That’s paramount, as the stakes are high.

Step-by-step guide to embedded testing

Embedded testing needs to be carefully structured and well-organized. Therefore, at a high level, we can break it down into four phases:

1. Laying the right foundation in the planning phase

Before jumping into active testing, we define exactly what success looks like. We collaborate with all the stakeholders to gather the available system requirements, including functionality, acceptable failure modes, the performance thresholds the system needs to meet, etc.

We outline clear testing goals, identify critical components, define possible risks, and map out where hardware meets software. From there, we create a suite of test cases that cover expected behavior, critical edge cases, and key integration points where problems are more likely to appear later.

2. Building the right environment in the setup phase

Once the plan is ready, we move to the environment setup. The right tool choice and proper configuration are half the battle. Depending on the system, this phase might include setting up a Hardware-in-the-Loop (HIL) environment and configuring power analyzers, oscilloscopes, test harnesses, or JTAG debug tools.

Rich experience lets us clearly understand when it makes sense to build a full test rig and when a software simulation can save time and effort while still delivering reliable results.

3. Challenging the system in the test execution phase

Repeatability and traceability are at the core of our testing approach. That's why each test run contains logs, timestamps, conditions, and results. Our QA engineers log the environmental conditions alongside test outcomes.

Tests, especially repeatable ones, are automated whenever feasible and relevant. Real-time dashboards demonstrate pass/fail trends, helping us spot unusual behaviors early before they require costly rework.

4. Gaining insights in the analysis phase

When the test run is finished, we study logs, detect anomalies, correlate them with test conditions, and conduct root cause analysis.

When reporting issues, we also suggest practical fixes or design adjustments based on patterns we’ve seen in other projects.

Tests critical for embedded systems

Now that you’ve got the big picture, let’s take a closer look at the types of tests essential for embedded systems and the tools that facilitate their execution.

Functional testing

Let's start with functional testing that verifies if all the components and features of embedded systems work as they are supposed to, i.e., as specified by functional requirements.

Tools to use:

Robot Framework is well suited to high-level functional and system testing of embedded devices. It automates complete device workflows, simulates user actions, and verifies system responses.

Pytest is used in combination with custom libraries for writing flexible functional test suites around hardware interfaces.

Vector CANoe/CANalyzer is a good choice for functional and communication testing.
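As an illustration of the Pytest approach mentioned above, here is a minimal sketch of functional tests written around a device interface. The `FakeThermostat` class and its `SET`/`GET` text protocol are hypothetical; in a real suite, a custom library would wrap the serial or network link to the actual device and expose the same `send` method.

```python
class FakeThermostat:
    """Hypothetical stand-in for a device reached over a serial or network link."""

    def __init__(self) -> None:
        self.setpoint_c = 20.0

    def send(self, command: str) -> str:
        """Handle a text command and return the device's reply."""
        parts = command.strip().split()
        if len(parts) == 2 and parts[0] == "SET":
            try:
                value = float(parts[1])
            except ValueError:
                return "ERR bad value"
            if not 5.0 <= value <= 35.0:
                return "ERR out of range"
            self.setpoint_c = value
            return "OK"
        if parts == ["GET"]:
            return f"SETPOINT {self.setpoint_c:.1f}"
        return "ERR unknown command"


def test_setpoint_round_trip() -> None:
    device = FakeThermostat()
    assert device.send("SET 22.5") == "OK"
    assert device.send("GET") == "SETPOINT 22.5"


def test_out_of_range_setpoint_is_rejected() -> None:
    device = FakeThermostat()
    assert device.send("SET 80") == "ERR out of range"
    # The previous setpoint must be left untouched after a rejected command.
    assert device.send("GET") == "SETPOINT 20.0"
```

Pytest collects functions whose names start with `test_`, so the same test bodies can run against the fake during development and against real hardware later, provided both objects offer the same interface.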

Interface testing

The fact that embedded systems often interact with external devices or other subsystems necessitates thorough testing of the interfaces between components. This involves testing communication protocols and interfaces like serial links, wireless connections, Ethernet, or other networking protocols.

Tools to use:

Bus Hound and Saleae Logic Analyzer are leveraged to analyze communication protocols like SPI, I2C, or UART.

Wireshark is a good option for testing Ethernet or wireless communication protocols.

CANoe is used for CAN bus testing and simulation.

Real-time testing

Embedded systems often require real-time operation, so timing is critical. Real-time testing ensures that the system meets its timing constraints and responds within the stated time limits.

Tools to use:

Tracealyzer helps with analyzing real-time system performance and tracing software behavior.

JTAG debuggers enable timing and latency analysis of embedded systems.

RTOS trace tools provide tracing functionality for real-time task scheduling analysis.
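Whichever tool captures the timing data, the evaluation step usually reduces to comparing measured latencies with a deadline. The sketch below assumes the latencies have already been exported as a list of microsecond values; the function name and the returned keys are illustrative, not part of any tool's API.

```python
from statistics import mean


def analyze_latencies(latencies_us: list[float], deadline_us: float) -> dict[str, float]:
    """Summarize measured response latencies against a deadline.

    Jitter is reported here as the spread between the slowest and fastest response,
    which is one common definition; other projects use standard deviation instead.
    """
    if not latencies_us:
        raise ValueError("at least one latency sample is required")
    worst = max(latencies_us)
    best = min(latencies_us)
    misses = sum(1 for value in latencies_us if value > deadline_us)
    return {
        "mean_us": mean(latencies_us),
        "worst_us": worst,
        "jitter_us": worst - best,
        "deadline_misses": misses,
    }
```

For hard real-time requirements, the worst case and the number of deadline misses matter more than the mean, so a test would typically assert on those two values.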

Power and energy consumption testing

As mentioned above, power consumption is one of the key concerns for many embedded systems. Testing of that kind lets engineers understand if a system operates within acceptable limits.

Tools to use:

Keysight Power Analyzer or Tektronix Power Analyzer is used to measure current and voltage across components.

Monsoon Power Monitor is suitable for testing low-power devices in real-world scenarios.

Battery simulators help to test power behavior under different charging and usage conditions.
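Once current has been measured in each power state, a rough battery-life estimate is simple arithmetic. The sketch below is a simplification under stated assumptions: it takes a repeating duty cycle as `(current in mA, duration in seconds)` pairs and ignores self-discharge, temperature, and converter losses. The function names are hypothetical.

```python
def average_current_ma(profile: list[tuple[float, float]]) -> float:
    """Return the time-weighted average current for (current_mA, seconds) pairs."""
    total_time = sum(seconds for _, seconds in profile)
    if total_time <= 0:
        raise ValueError("profile must cover a positive duration")
    charge = sum(current * seconds for current, seconds in profile)
    return charge / total_time


def battery_life_hours(capacity_mah: float, profile: list[tuple[float, float]]) -> float:
    """Estimate runtime, ignoring self-discharge, temperature, and converter losses."""
    return capacity_mah / average_current_ma(profile)
```

As a worked example with made-up numbers: a device that draws 20 mA for 1 s, 2 mA for 9 s, and 0.01 mA for 50 s in every 60 s cycle averages about 0.64 mA, so a 200 mAh battery would last roughly 312 hours under this idealized model. The same calculation shows why a missed sleep transition is so costly: the long low-current interval dominates the cycle.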

Stress testing and load testing

Stress and load testing check how the system behaves at and beyond its normal operating conditions by exposing it to heavy load and extreme stress.

Tools to use:

LoadRunner and JMeter simulate high traffic and network load.

IxChariot is used for network stress testing in embedded systems that rely on Ethernet or wireless communication.

Environmental testing

Embedded systems are often used in particularly harsh environments, so it’s paramount to ensure their flawless operation under different environmental conditions.

Tools to use:

Thermal chambers are leveraged for high- and low-temperature testing.

Vibration testers, like IMV or ESPEC, simulate mechanical shock and vibration.

EMC/EMI testing equipment, like Rohde & Schwarz, enables electromagnetic interference and compatibility testing.

Security testing

Security testing is increasingly important in embedded systems, especially those connected to networks. It’s indeed essential to check the system for security vulnerabilities to ensure its resistance to possible attacks.

Tools to use:

Wireshark and Burp Suite are perfect for network security testing and vulnerability scanning.

Coverity and Klocwork are static analysis tools that identify code vulnerabilities.

AFL and Peach Fuzzer are fuzzing tools used to test for unexpected behavior or crashes in case of unusual input.
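The idea behind fuzzing can be shown with a very small random fuzzer. Note that this is plain random input generation, not the coverage-guided approach that tools like AFL use, and the frame format parsed by `parse_frame` is a hypothetical example invented for this sketch.

```python
import random


def parse_frame(data: bytes) -> bytes:
    """Hypothetical parser: [length byte][payload][checksum = sum(payload) mod 256]."""
    if len(data) < 2:
        raise ValueError("frame too short")
    length = data[0]
    if len(data) != length + 2:
        raise ValueError("length mismatch")
    payload, checksum = data[1:-1], data[-1]
    if sum(payload) % 256 != checksum:
        raise ValueError("bad checksum")
    return payload


def fuzz(parser, iterations: int, seed: int = 0) -> list[bytes]:
    """Feed random inputs to the parser and collect those that fail unexpectedly.

    ValueError is the parser's documented rejection path; anything else is a finding.
    """
    rng = random.Random(seed)
    findings = []
    for _ in range(iterations):
        data = bytes(rng.randrange(256) for _ in range(rng.randrange(0, 16)))
        try:
            parser(data)
        except ValueError:
            continue
        except Exception:
            findings.append(data)
    return findings
```

A fixed seed keeps the run reproducible, which matters when a finding has to be replayed on the target. Each input collected in `findings` is a candidate crash or unexpected behavior to investigate.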

Compliance standards in embedded testing: ISO 26262, DO-178C, IEC 62304, and IEC 61508

Embedded systems in safety-critical industries don't just need to work — they need to be provably safe according to standards that carry legal and regulatory weight. Failing to address compliance during the testing phase creates rework, delays certification, and in some industries, blocks market entry entirely.

Below is a practical overview of the four standards most relevant to embedded system testing projects, what they require from QA teams, and what evidence they demand.

ISO 26262 — Functional Safety for Road Vehicles

Scope: Automotive electrical and electronic systems, including ECUs, ADAS components, powertrain controllers, and body electronics.

ISO 26262 defines the Automotive Safety Integrity Level (ASIL) framework, ranging from ASIL A (lowest risk) to ASIL D (highest risk, e.g., brake-by-wire, steering control). The safety integrity level assigned to a system directly determines the rigor of testing required.

What it requires from testing:

Requirements-based testing at unit, integration, and system levels — tests must trace directly to defined safety requirements

Structural coverage measured at the unit level, with the expected metric rising with ASIL: statement and branch coverage at the lower levels, up to MC/DC (Modified Condition/Decision Coverage), which the standard highly recommends for ASIL D

Fault injection testing to verify that safety mechanisms detect and respond correctly to defined failure modes

Back-to-back testing to confirm that production code (generated or hand-written) behaves identically to the reference model it was derived from — this is where SIL and PIL testing become mandatory activities, not optional ones

Regression testing after every change to safety-relevant code, with documented traceability

Key tools used in ISO 26262 projects: VectorCAST (unit testing + coverage), LDRA Testbed, Polyspace (formal verification + code analysis), dSPACE HIL platforms, and MATLAB/Simulink with Embedded Coder for model-based development workflows.
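Back-to-back testing, listed among the requirements above, can be sketched as running the same stimulus through a reference model and the production implementation and comparing the outputs within a tolerance. Both filters below are hypothetical stand-ins: in a real project, the reference would be the Simulink model and the production code would be the generated or hand-written C.

```python
def reference_filter(samples: list[float], alpha: float = 0.25) -> list[float]:
    """Reference model: floating-point exponential moving average."""
    output, state = [], 0.0
    for sample in samples:
        state += alpha * (sample - state)
        output.append(state)
    return output


def production_filter(samples: list[int]) -> list[int]:
    """Stand-in for production code: same filter in integer math (alpha = 1/4)."""
    output, state_q8 = [], 0  # state kept with 8 fractional bits
    for sample in samples:
        state_q8 += ((sample << 8) - state_q8) >> 2
        output.append(state_q8 >> 8)
    return output


def back_to_back(samples: list[int], tolerance: float) -> list[int]:
    """Return the indices where the two implementations differ by more than tolerance."""
    expected = reference_filter([float(s) for s in samples])
    actual = production_filter(samples)
    return [i for i, (e, a) in enumerate(zip(expected, actual)) if abs(e - a) > tolerance]
```

An empty result means the two implementations agree within the tolerance for that stimulus. Choosing the tolerance is part of the test design: here the integer version truncates its output, so differences of up to about one unit are expected and a tighter tolerance would report them.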

DO-178C — Software Considerations in Airborne Systems and Equipment

Scope: Avionics software in aircraft and airborne systems — flight management systems, engine control units, autopilot software, navigation systems.

DO-178C defines Design Assurance Levels (DAL) from Level E (no safety effect) to Level A (catastrophic failure consequence). Level A software — software whose failure could cause loss of the aircraft — requires the most rigorous testing regime in any commercial software standard.

What it requires from testing:

100% statement, decision, and MC/DC coverage for Level A software — every executable line, every conditional branch, and every independent condition affecting a decision must be exercised and documented

Requirements-based test cases with full bidirectional traceability — from high-level requirements down to source code, and back up

Robustness testing to verify correct behavior on abnormal inputs, boundary values, and out-of-range conditions

Structural coverage analysis using qualified tools: the tools themselves must be qualified under DO-330 (Tool Qualification) if they are used to satisfy DO-178C objectives

Independence — testing activities must be performed independently from development, and verification must be reviewed by a Designated Engineering Representative (DER) or equivalent authority

Key tools used in DO-178C projects: LDRA TBvision, VectorCAST with DO-178C workflow support, Parasoft C/C++test, and Rational DOORS for requirements traceability.
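To make the MC/DC requirement above more concrete, the sketch below enumerates, for a small decision, the pairs of test vectors that show each condition independently affecting the outcome. It only illustrates the concept (in its unique-cause form); the helper name `mcdc_pairs` is hypothetical, and qualified coverage tools measure MC/DC on instrumented code rather than by brute-force enumeration.

```python
from itertools import product
from typing import Callable


def mcdc_pairs(decision: Callable[..., bool], n_conditions: int) -> dict[int, list[tuple]]:
    """For each condition, list test-vector pairs that differ only in that condition
    and produce different decision outcomes (the independence pairs MC/DC asks for)."""
    pairs: dict[int, list[tuple]] = {i: [] for i in range(n_conditions)}
    for vector in product([False, True], repeat=n_conditions):
        for i in range(n_conditions):
            if vector[i]:
                continue  # visit each pair once, starting from the False side
            flipped = vector[:i] + (True,) + vector[i + 1:]
            if decision(*vector) != decision(*flipped):
                pairs[i].append((vector, flipped))
    return pairs
```

For a decision such as `lambda a, b, c: a and (b or c)`, every condition has at least one independence pair, and a test set that contains one pair per condition satisfies MC/DC for that decision. A condition with no pairs at all can never influence the outcome, which usually points to redundant logic.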

IEC 62304 — Medical Device Software Lifecycle Processes

Scope: Software that is part of a medical device or that is itself a medical device, subject to FDA (US) and MDR (EU) regulatory oversight.

IEC 62304 classifies software into three safety classes based on the severity of potential harm to the patient if the software fails:

Class A: No injury or damage to health possible

Class B: Non-serious injury possible

Class C: Death or serious injury possible

What it requires from testing:

Unit, integration, and system testing with documented test plans, test cases, and test results — all retained as objective evidence for regulatory submission

Anomaly resolution — every defect found during testing must be logged, evaluated, and formally resolved or risk-accepted before release

Regression testing following any software change, with impact analysis demonstrating which tests must be re-run

Software of Unknown Provenance (SOUP) testing — if the embedded software uses third-party libraries or open-source components, their behavior under intended use conditions must be verified

For Class C software, structural coverage metrics are typically expected by FDA reviewers and EU notified bodies as evidence of testing thoroughness

Key tools used in IEC 62304 projects: Jama Connect and IBM DOORS for requirements traceability, VectorCAST and LDRA for coverage, Coverity and Klocwork for static analysis, and JIRA or Polarion for anomaly tracking.

IEC 61508 — Functional Safety of Electrical / Electronic / Programmable Electronic Safety-Related Systems

Scope: Industrial safety systems, including programmable logic controllers (PLCs), safety instrumented systems (SIS), industrial robots, and process control equipment. It is also the parent standard from which ISO 26262 is derived, and IEC 62304 refers to it as a source of software methods and techniques.

IEC 61508 defines Safety Integrity Levels (SIL 1 through SIL 4). SIL 4 is reserved for the highest-consequence applications, such as nuclear safety systems and large-scale chemical plant controls.

What it requires from testing:

Black box and white box testing at software unit, integration, and system levels, with techniques required proportional to the SIL level

Failure mode analysis — testing must demonstrate that the software correctly handles the failure modes identified in the FMEA/FMEDA (Failure Mode Effects and Diagnostic Analysis)

Code coverage requirements escalate with SIL level — SIL 3 and 4 require MC/DC coverage

Data and control flow analysis as a recommended technique for SIL 2 and above

Formal methods (mathematical proof of software properties) as a highly recommended technique for SIL 3 and SIL 4
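The failure-mode requirement above is typically exercised with fault injection: a fault is deliberately inserted into an input, and the test checks that the safety mechanism reacts. The sketch below assumes a hypothetical `SafetyMonitor` that latches a safe state on out-of-range or stuck sensor readings; the class, thresholds, and fault functions are illustrative only.

```python
class SafetyMonitor:
    """Hypothetical safety mechanism: latches a safe state on implausible sensor data."""

    def __init__(self, low: float, high: float, max_stuck_samples: int) -> None:
        self.low, self.high = low, high
        self.max_stuck_samples = max_stuck_samples
        self.safe_state = False
        self._last = None
        self._repeat_count = 0

    def update(self, reading: float) -> None:
        if not self.low <= reading <= self.high:
            self.safe_state = True  # out-of-range fault detected
        if reading == self._last:
            self._repeat_count += 1
        else:
            self._last, self._repeat_count = reading, 0
        if self._repeat_count >= self.max_stuck_samples:
            self.safe_state = True  # stuck-at fault detected


def run_with_fault(monitor: SafetyMonitor, readings: list[float], fault) -> bool:
    """Feed readings through a fault function and report whether the safe state latched."""
    for index, reading in enumerate(readings):
        monitor.update(fault(index, reading))
    return monitor.safe_state
```

Each failure mode from the FMEA then maps to one fault function, for example `lambda i, r: 999.0 if i == 2 else r` for an out-of-range spike. A run with no fault injected serves as the control case and should leave the safe state untouched.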

Embedded testing in CI/CD pipelines

Continuous integration and continuous delivery transformed software testing in the web and mobile world. In embedded development, CI/CD adoption has been slower — understandably so, given hardware dependencies, proprietary toolchains, and the compliance documentation requirements discussed above. But the gap is closing, and teams that build CI/CD pipelines for embedded projects gain a measurable advantage in defect detection speed and release confidence.

What a CI/CD pipeline looks like for embedded systems

An embedded CI/CD pipeline doesn't look identical to a web application pipeline, but the underlying principle is the same: every code change triggers an automated sequence of build, analyze, and test steps, with results reported before the change is merged.

A practical embedded CI/CD pipeline typically includes the following stages:

Stage 1 — Static analysis. Triggered on every commit. Tools like Polyspace, Klocwork, or Coverity run automatically and flag MISRA violations, potential runtime errors, and security vulnerabilities. This stage has no hardware dependency and runs in seconds to minutes.

Stage 2 — Host-based unit testing. The firmware modules with abstracted hardware dependencies run on the build server using frameworks like Ceedling, GoogleTest, or Unity. Coverage reports are generated automatically. This stage is fast, scalable, and gives developers immediate feedback on logic correctness.

Stage 3 — SIL regression (for model-based projects). If the project uses model-based development, a SIL test suite runs automatically against the generated code to confirm that algorithmic behavior matches the validated model.

Stage 4 — HIL regression (on-demand or nightly). HIL test execution is slower and hardware-constrained, so it typically runs on a scheduled basis (nightly or per release candidate) rather than per commit. Automated HIL test scripts exercise the most critical system behaviors, with results logged against the build that triggered them.

Stage 5 — Deployment to target (for mature projects). In some embedded CI/CD setups, a passing HIL run triggers automatic flashing of the firmware to a test target and execution of a smoke test suite — confirming that the build boots, initializes correctly, and passes a set of basic functional checks.
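The staging logic above can be sketched as a small selection function: fast, hardware-free stages run on every commit, while hardware-bound stages run only on scheduled or release events. The stage names follow the list above, but the trigger assignments and the `stages_for` helper are assumptions made for illustration; a real pipeline would express this in the CI system's own configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    triggers: frozenset[str]  # events that run this stage
    needs_hardware: bool


# Stage names follow the list above; the trigger assignments are illustrative assumptions.
PIPELINE = [
    Stage("static-analysis", frozenset({"commit", "nightly", "release"}), False),
    Stage("host-unit-tests", frozenset({"commit", "nightly", "release"}), False),
    Stage("sil-regression", frozenset({"commit", "nightly", "release"}), False),
    Stage("hil-regression", frozenset({"nightly", "release"}), True),
    Stage("target-smoke-test", frozenset({"nightly", "release"}), True),
]


def stages_for(event: str, hardware_available: bool) -> list[str]:
    """Return the ordered stage names to run for a trigger event."""
    selected = []
    for stage in PIPELINE:
        if event not in stage.triggers:
            continue
        if stage.needs_hardware and not hardware_available:
            break  # later hardware stages depend on this one, so stop here
        selected.append(stage.name)
    return selected
```

Keeping the hardware requirement explicit per stage makes it easy to degrade gracefully when the HIL rig is busy or offline: the software-only stages still run and report.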

Overcoming common challenges of embedded testing

It’s time to talk about less pleasant but still important things – the challenges that inevitably come up during embedded testing.

Limited hardware access

One of the biggest hurdles, especially in the early stages of embedded system testing, is the lack of access to real hardware. It may still be under design, stuck in procurement, or limited to just a couple of prototype units. As a result, testing may grind to a halt because there’s nothing physical to test on.

To avoid delays, we make active use of HIL testing, hardware simulators, and emulators that let us start validating business logic, communication flows, and peripheral behavior long before hardware is available.

Constrained resources

Embedded systems usually operate under severe resource constraints. Whether it’s a medical sensor or a control module in an electric vehicle, QA engineers often deal with tight CPU budgets, kilobytes of RAM, and strict power constraints. Writing and running full-scale tests directly on the device can easily interfere with system performance or, even worse, make the device unusable during testing.

At DeviQA, we reduce on-target test overhead by keeping embedded test logic ultra-light and offloading as much testing as possible to the host. Using unit testing frameworks like Ceedling and GoogleTest, we isolate and validate critical functions on a desktop or CI server, saving device resources.

Difficult defect reproduction

In embedded systems, many bugs show up only under very specific timing conditions, power fluctuations, or environmental triggers, which makes them difficult to reproduce. It can take days to track down a communication failure that happens once every few thousand messages or a watchdog timeout caused by a rare interrupt collision, especially if the conditions can’t be reliably recreated.

To handle this efficiently, our team always builds in detailed logging and diagnostics from day one. Also, we use trace buffers, real-time monitoring, fault injection, and HIL testing to quickly capture and reproduce elusive bugs.
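A trace buffer of the kind mentioned above is usually a fixed-size ring that keeps only the most recent events, so it can stay enabled without exhausting memory. The sketch below shows the idea in Python; the `TraceBuffer` class and its fields are hypothetical, and firmware would normally implement the same structure as a statically allocated array in C.

```python
from collections import deque


class TraceBuffer:
    """Fixed-size event trace: keeps only the most recent entries, like a ring buffer."""

    def __init__(self, capacity: int) -> None:
        self._events = deque(maxlen=capacity)
        self.dropped = 0

    def record(self, timestamp_us: int, source: str, message: str) -> None:
        if len(self._events) == self._events.maxlen:
            self.dropped += 1  # the oldest entry is about to be overwritten
        self._events.append((timestamp_us, source, message))

    def dump(self) -> list[tuple[int, str, str]]:
        """Return events oldest-first, e.g. after a watchdog reset or failed assertion."""
        return list(self._events)
```

Dumping the buffer at the moment of failure gives the sequence of events that led up to it, which is often the only evidence available for a bug that appears once in thousands of runs.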

Specialized knowledge requirements

Embedded QA requires a deep understanding of both software and hardware. Test engineers need to know their way around memory-mapped registers, real-time constraints, peripheral behavior, and much more. This unique skill set isn’t always easy to find or scale within teams.

Fortunately, outsourcing provides a good solution. Partnering with experienced providers like DeviQA allows businesses to quickly onboard QA specialists who are well-versed in embedded systems testing. Whether through an outstaffing model or a dedicated QA team, we help our customers ensure the outstanding quality of their embedded systems.

Embedded testing automation: What can (and can't) be automated

Test automation promises speed, repeatability, and coverage. In most software domains, those promises are easy to deliver on. In embedded testing, the reality is more nuanced, and a testing strategy built on unrealistic automation expectations will fail.

Here's an honest breakdown of where automation delivers genuine value in embedded testing, and where it hits hard limits.

What can be automated

Unit and integration tests on the host. Anything that can be abstracted from physical hardware is an excellent automation candidate. Using frameworks like Ceedling, GoogleTest, or Unity, test suites for firmware modules can run automatically on a CI server with every code commit. This is where embedded automation pays off most reliably: fast feedback, no hardware dependency, high repeatability.

SIL regression testing. Model-based SIL test suites can be fully automated and integrated into nightly build pipelines. A change to the control algorithm triggers an automatic re-run of the full SIL suite, with pass/fail results logged against the build. This significantly reduces the manual overhead of regression validation in model-based development workflows.

HIL test execution. Once the HIL rig is set up and calibrated, individual test scenarios can be scripted and executed automatically. Tools like National Instruments TestStand, Vector CANoe's automation APIs, and dSPACE ControlDesk allow test engineers to define stimulus sequences, capture responses, and evaluate pass/fail criteria without manual intervention during execution.

Static analysis and code coverage. Tools like Polyspace, Klocwork, and Coverity run automatically as part of a CI pipeline. Every build can produce a static analysis report flagging MISRA violations, potential null pointer dereferences, buffer overruns, and unreachable code, without a human reviewing source code line by line.

Communication protocol testing. Automated scripts using Vector CANoe or CANalyzer can repeatedly inject messages on CAN, LIN, Ethernet, or other buses and verify expected responses, including boundary conditions and error frames, far faster and more consistently than manual testing.

What cannot be automated

Environmental testing. Temperature cycling, vibration, humidity exposure, and EMC/EMI testing require physical test chambers and specialized equipment. The execution itself is partially manual, and the interpretation of results frequently requires engineering judgment that automated pass/fail logic cannot reliably replace.

Initial hardware bring-up. The first time firmware runs on new silicon, unexpected behavior is the norm. Oscilloscopes, logic analyzers, and JTAG debuggers need to be operated by engineers who can observe unexpected signals, form hypotheses, and probe accordingly. This is diagnostic and exploratory work; automation has no role here.

Novel failure mode investigation. When an elusive bug surfaces only under rare timing conditions or specific environmental triggers, reproducing and isolating it requires skilled human judgment. Automation can assist with logging and fault injection, but the investigative process is inherently manual.

First-run test case development on new hardware. Writing the first test cases for a new hardware platform requires understanding the hardware's actual behavior, which often deviates from the datasheet. Initial test development is manual work. Automation amplifies well-understood test cases; it doesn't create understanding.

Building a test automation strategy for embedded systems that actually works

The most effective embedded automation strategies share a common principle: automate at the layer closest to software first, then extend toward hardware progressively as the platform stabilizes.

A practical approach:

Start with host-based unit test automation (Ceedling, GoogleTest): it has zero hardware dependency and integrates with CI immediately

Add automated SIL regression as soon as model-based code generation is in place

Introduce automated static analysis (Polyspace, Klocwork) into the build pipeline from day one

Build HIL automation scripts incrementally, starting with the highest-frequency regression scenarios

Keep environmental, exploratory, and bring-up testing manual with structured logging protocols
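The layering in the steps above can be pictured as a pipeline that runs the cheapest, most software-centric checks first and only reaches the hardware rig when everything before it has passed. The sketch below is one possible arrangement, not a prescribed one: the stage order and the lambda placeholders standing in for real jobs are assumptions.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[Tuple[str, str]]:
    """Run stages in order and skip everything after the first failure."""
    log = []
    failed = False
    for name, check in stages:
        if failed:
            log.append((name, "skipped"))
            continue
        ok = check()
        log.append((name, "passed" if ok else "failed"))
        failed = not ok
    return log


# Placeholder checks; in practice each would invoke the real tool or job.
STAGES: List[Stage] = [
    ("static analysis", lambda: True),
    ("host unit tests", lambda: True),
    ("SIL regression", lambda: False),  # simulated failure
    ("HIL regression", lambda: True),
]

pipeline_log = run_pipeline(STAGES)
```

With the simulated SIL failure, the HIL stage is skipped, which keeps scarce rig time for builds that have already passed the cheaper checks.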

Already have automated tests that aren't delivering consistent results? That's usually a strategy problem before it's a tooling problem. Share your setup with our team, and we'll tell you where the gaps are.


Software-in-the-loop (SIL) and Processor-in-the-loop (PIL) testing

HIL testing gets a lot of attention in the embedded world — and rightly so. But before a project is ready for HIL, two intermediate simulation stages deserve equal consideration: Software-in-the-Loop (SIL) and Processor-in-the-Loop (PIL). Together, SIL, PIL, and HIL form a progressive validation ladder that lets teams uncover defects at the right stage, at the right cost.

Software-in-the-loop (SIL) testing

SIL testing runs the production software, typically auto-generated code from a model-based development environment like MATLAB/Simulink, on a host computer, entirely without target hardware. The software executes inside a simulation environment that mimics the behavior of the real system using virtual inputs and outputs.

The core purpose of SIL is to verify that the generated code behaves identically to the model it was derived from. If a control algorithm was designed and validated at the model level, SIL confirms that the transition to actual C/C++ code hasn't introduced numerical errors, rounding issues, or logic mismatches.
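This kind of check is often called a back-to-back test: the same stimulus is fed to the model and to the code, and the outputs are compared within a tolerance. The sketch below imitates that idea in plain Python under several assumptions: a first-order low-pass filter stands in for the control algorithm, the "generated code" is emulated by rounding every intermediate result to single precision, and the tolerance is an arbitrary illustrative value. It does not use Simulink or any generated C code.

```python
import struct
from typing import List


def to_f32(value: float) -> float:
    """Round a Python float (double precision) to the nearest single-precision value."""
    return struct.unpack("<f", struct.pack("<f", value))[0]


def model_filter(samples: List[float], alpha: float) -> List[float]:
    """Reference model: first-order low-pass filter in double precision."""
    y = 0.0
    out = []
    for x in samples:
        y = y + alpha * (x - y)
        out.append(y)
    return out


def generated_filter(samples: List[float], alpha: float) -> List[float]:
    """Stand-in for generated code: same filter, rounded to single precision."""
    a = to_f32(alpha)
    y = to_f32(0.0)
    out = []
    for x in samples:
        diff = to_f32(to_f32(x) - y)
        y = to_f32(y + to_f32(a * diff))
        out.append(y)
    return out


def max_abs_error(reference: List[float], actual: List[float]) -> float:
    """Largest absolute difference between two equally long output traces."""
    return max(abs(r - a) for r, a in zip(reference, actual))


STEP_INPUT = [1.0] * 50  # unit step stimulus
TOLERANCE = 1e-5         # assumed acceptance threshold

error = max_abs_error(model_filter(STEP_INPUT, 0.1),
                      generated_filter(STEP_INPUT, 0.1))
back_to_back_ok = error <= TOLERANCE
```

The outputs are not bit-identical, because 0.1 has a different nearest value in single and double precision, which is why a tolerance is compared rather than exact equality. Choosing that tolerance is an engineering decision tied to the algorithm's requirements.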

When SIL is the right choice:

Target hardware is unavailable or still in design

The team needs to validate auto-generated code before committing to hardware procurement

Regression testing is needed at scale, and running tests on physical hardware would be too slow or costly

Early-phase algorithm validation in automotive, aerospace, or industrial control applications

Tools commonly used:

MathWorks Simulink Test for model-based SIL execution

LDRA Testbed and Polyspace for code-level analysis alongside SIL runs

VectorCAST for automated SIL test case generation and coverage measurement

SIL limitations to plan around: SIL runs on the host processor, not the target processor. This means it reveals logic and algorithmic bugs but tells you nothing about real-time behavior, interrupt latency, or how the code performs under the actual CPU constraints of the target hardware. Those gaps are exactly what PIL addresses.

Processor-in-the-loop (PIL) testing

PIL moves execution one step closer to reality. The same production code now runs on the actual target processor, or a cycle-accurate emulator of it, while the test environment on the host computer provides stimuli and captures responses. Unlike SIL, PIL exposes timing behavior, processor-specific numerical precision, and the effects of fixed-point arithmetic on the actual target architecture.

The practical value of PIL lies in catching a specific category of bugs that SIL simply cannot surface: issues that emerge only because of the target processor's instruction set, pipeline behavior, memory alignment, or floating-to-fixed-point conversion. In safety-critical domains, these are exactly the bugs that fail certification audits and cause field failures.
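The floating-to-fixed-point effects mentioned here can be shown with a small Q15 example. This is only a host-side illustration of the arithmetic, assuming the common Q15 format (16-bit signed, 15 fractional bits); it says nothing about how a particular compiler or processor implements the operations, which is exactly what a PIL run would reveal.

```python
Q15_ONE = 1 << 15      # 32768, scaling factor for Q15
Q15_MAX = Q15_ONE - 1  # 32767, largest representable value
Q15_MIN = -Q15_ONE     # -32768, smallest representable value


def saturate_q15(value: int) -> int:
    """Clamp an integer to the signed 16-bit range used by Q15."""
    return max(Q15_MIN, min(Q15_MAX, value))


def to_q15(x: float) -> int:
    """Quantize a float to Q15, saturating at the range limits."""
    return saturate_q15(int(round(x * Q15_ONE)))


def from_q15(q: int) -> float:
    """Convert a Q15 integer back to a float."""
    return q / Q15_ONE


def q15_mul(a: int, b: int) -> int:
    """Multiply two Q15 values; the right shift discards the low 15 bits."""
    return saturate_q15((a * b) >> 15)


# 0.5 * 0.5 is exactly representable, so fixed point matches float.
exact = from_q15(q15_mul(to_q15(0.5), to_q15(0.5)))

# 0.1 * 0.1 is not: quantization and truncation leave a small error.
approx = from_q15(q15_mul(to_q15(0.1), to_q15(0.1)))
fixed_point_error = abs(approx - 0.1 * 0.1)

# 1.0 cannot be represented in Q15, so it saturates just below 1.0.
saturated = to_q15(1.0)
```

Here the error for 0.1 * 0.1 stays below one Q15 step (1/32768), but in a filter or controller such errors accumulate over many iterations, which is why comparing fixed-point results against the floating-point reference is worthwhile.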

When PIL is the right choice:

After SIL validation, before full HIL setup is available or justified

When the team needs to verify real-time execution timing on the target processor

For fixed-point implementation verification in automotive ECUs, medical device controllers, or industrial PLCs

When certifying code against ISO 26262 ASIL levels or DO-178C design assurance levels that require processor-specific evidence
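For the timing item in the list above, a typical evaluation step is to compare measured execution times against a deadline. The sketch below assumes the cycle counts have already been captured on the target (for example, from a hardware cycle counter); the 80 MHz clock, the sample values, and the 100 microsecond deadline are invented for illustration.

```python
from typing import Dict, List


def cycles_to_us(cycles: int, cpu_hz: int) -> float:
    """Convert a CPU cycle count to microseconds at the given clock frequency."""
    return cycles * 1_000_000 / cpu_hz


def timing_report(cycle_counts: List[int], cpu_hz: int,
                  deadline_us: float) -> Dict[str, object]:
    """Summarize the slowest observed run against a deadline."""
    worst_us = cycles_to_us(max(cycle_counts), cpu_hz)
    return {
        "worst_us": worst_us,
        "margin_us": deadline_us - worst_us,
        "within_deadline": worst_us <= deadline_us,
    }


# Assumed measurements of one control-loop iteration, in CPU cycles.
MEASURED_CYCLES = [3900, 4100, 4000, 7600]

timing = timing_report(MEASURED_CYCLES, cpu_hz=80_000_000, deadline_us=100.0)
```

Note that the slowest observed run is not the same as a proven worst-case execution time; measurements like these show that a deadline was met for the tested inputs, not that it can never be missed.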

Tools commonly used:

MathWorks Embedded Coder with PIL mode for Simulink-generated code

Green Hills MULTI and IAR Embedded Workbench for processor-level debugging during PIL runs

TRACE32 by Lauterbach for instruction-level tracing on target silicon

SIL, PIL, and HIL — Choosing the right stage

The three methods are not alternatives to each other. They are sequential layers, each validating a different dimension of system behavior.

| Stage | Runs on | What it validates | What it does not cover |
|---|---|---|---|
| SIL | Host PC | Code logic vs. model | Timing, processor behavior, hardware interaction |
| PIL | Target processor / emulator | Timing, fixed-point behavior, processor-specific issues | Real sensor/actuator interaction, physical environment |
| HIL | Target hardware + plant simulator | Full hardware-software interaction in real time | True physical environment (temperature, EMI, vibration) |

Not sure which validation stages your project actually needs? SIL, PIL, and HIL each add cost and time, and not every project requires all three. Talk to a DeviQA engineer about scoping the right simulation strategy for your hardware timeline and compliance obligations.

Wrap-up

Embedded QA is a demanding discipline. The testing process is both time- and effort-intensive, while a single overlooked error can cost a human life since embedded systems are often integral to medical, automotive, defense, and aerospace equipment.

The defining traits of embedded systems (hardware dependency, constrained resources, low power consumption, and real-time operation) pose additional challenges for QA engineers. As a result, testing embedded software differs significantly from testing conventional software. It requires specialized expertise in embedded software testing techniques, software-hardware integration, HIL testing, and much more.

Gaining these skills takes hands-on experience. At DeviQA, we have it and are always ready to share our knowledge and expertise to help our customers ensure the outstanding quality of their embedded systems.

About the author

Senior QA engineer

Mykhailo Ralduhin is a Senior QA Engineer at DeviQA, specializing in building stable, well-structured testing processes for complex software products.