Written by: Mykhailo Ralduhin, Senior QA Engineer

Posted: 16.04.2026


Worsening health disparities, diagnostic inaccuracies, inappropriate medical decisions, and unequal access to care for minority groups are common consequences of AI bias in healthcare. A striking example is a widely used healthcare risk algorithm that systematically underestimated the needs of Black patients, reducing their access to extra care programs.

Yet the growing adoption of AI is undeniable: as of September 2025, the list of FDA-authorized AI-enabled medical devices includes 1,247 entries. With adoption moving this fast, bias can’t be left as an afterthought. The good news is that medical bias isn’t inevitable: it can be identified and prevented.

How? Bias testing, done as part of medical QA testing, can catch issues before they reach patients. Apart from helping meet compliance demands, it supports patient safety and ethical practice, protects the business, and mitigates reputational risks.

Properly designed and executed bias testing differentiates AI that reinforces inequities from AI that strengthens care delivery for everyone, regardless of gender, age, skin tone, or social background.


How biases enter healthcare AI

While dangerous, AI biases in healthcare are not always visible and are rarely intentional. Unlike a coding error that can be spotted and corrected, bias often seeps in quietly across the entire AI lifecycle, from the data collected to the way systems are deployed in real-world hospitals.

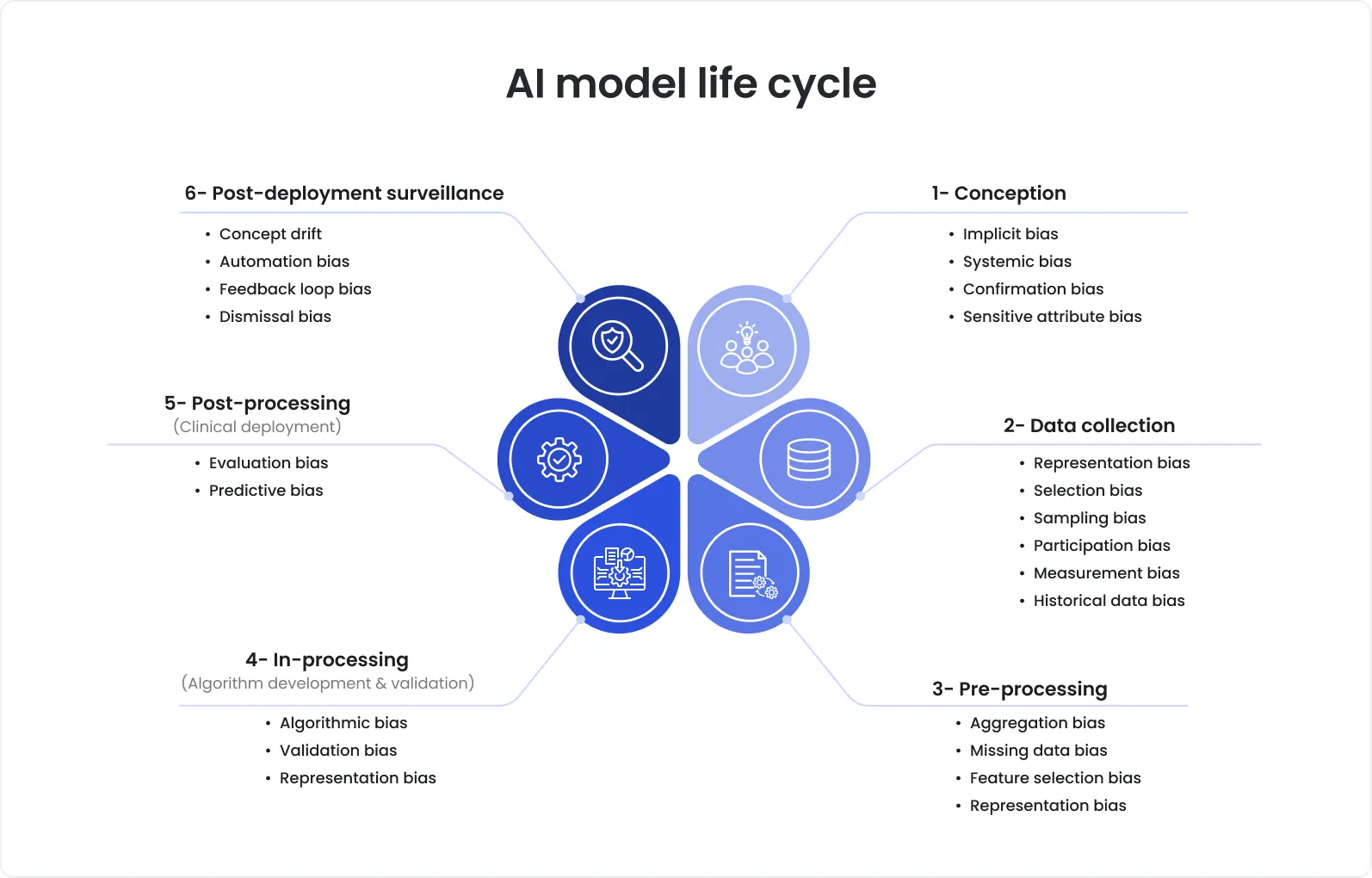

Common biases across the phases of the AI model life cycle

To address this, the FDA has issued draft guidance that offers detailed recommendations on how to approach transparency and bias throughout the total product lifecycle.

Let’s look in detail at the main ways bias takes root.

Data biases

Many issues with AI in healthcare are related to data because an AI model is only as fair as the data it learns from. If the training data underrepresents certain populations by race, gender, age, or socioeconomic background, a model inherits these blind spots. Historical health records, often used for training, can also reflect existing disparities: fewer referrals for Black patients, underdiagnosis of women’s pain, or unequal access to advanced imaging. When these records are fed into AI without correction, the inequities are amplified.

Data bias can appear through:

Sampling bias: datasets drawn from one demographic group (e.g., predominantly white patients in U.S. hospital systems).

Measurement bias: inconsistencies in how medical conditions are recorded (e.g., subjective pain scores).

Historical bias: past systemic inequalities baked into electronic health records.

Real-world example: A UK report points out that AI-based medical devices can worsen existing healthcare disparities. These devices may contribute to the underdiagnosis of cardiac conditions in women, produce inequities based on patients’ socioeconomic status, and fail to detect skin cancers in people with darker skin tones. The last issue stems from the fact that many AI systems are trained predominantly on images of lighter skin.

Human biases

Another major source of bias in healthcare AI is people. While rarely introduced deliberately, such biases reflect the historic or prevalent assumptions and preferences of developers, clinicians, and researchers.

For instance, clinicians who label X-rays may unconsciously bring their own availability bias, a cognitive error where the diagnosis that comes most easily to mind is favored, often influenced by recent or striking cases. Similarly, developers may unintentionally encode gendered or racialized assumptions into a model’s design.

Human bias can also show up in how problems are defined. For example, if a team decides that AI should predict the likelihood of hospital readmission rather than the likelihood of serious health complications, they’re favoring one definition of success over another, often prioritizing cost savings instead of care equity.

Real-world example: Findings of a recent study demonstrate that stigmatizing language (SL) written by clinicians adversely affects AI performance, particularly so for Black patients, highlighting SL as a source of racial disparity in AI model development.

Algorithmic biases

Algorithms themselves, not only the data, can create inequities. Algorithmic bias is introduced when model structures or optimization techniques unfairly favor certain groups over others. The most common issues include the following:

Overfitting to the major patient groups while neglecting the underrepresented ones.

Imbalanced loss functions, where errors on minority cases matter less statistically.

Proxy variables that stand in for sensitive attributes without being labeled as such.

Real-world example: The VBAC (vaginal birth after cesarean) calculator included race-based correction factors that assigned lower success probabilities to African American and Hispanic women, discouraging VBAC attempts for these patient groups without any scientific justification.

Deployment and monitoring biases

Bias can creep in not only during development but even after deployment. Without ongoing monitoring in place, performance may silently degrade for certain groups. Common issues include:

Contextual mismatch: an AI model that has been trained in a tertiary care hospital may not be suitable for community clinics.

Feedback loop bias: AI recommendations shape clinician behavior, and the resulting decisions flow back into the data, reinforcing the AI’s own predictions.

Model drift: patient demographics or disease patterns may change over time, but models remain unadjusted.

Real-world example: A 2025 study in Nature Communications showed that chest X-ray AI models became biased over time because they didn’t adapt to shifts in disease patterns. As a result, the models became less accurate for certain groups.

Traditional QA checks often focus only on functionality and overall performance. Spotting AI bias requires intentional, systematic evaluation at every development stage, and knowing how bias can enter a system tells you where to look.


Unique challenges of bias testing in healthcare

As you might guess, bias testing in healthcare is a far cry from routine accuracy checks. Internal development teams often face challenges that make it difficult to identify and address bias effectively. Here’s where they usually struggle, and why specialized QA makes the difference.

Limited representation in test data

As a rule, healthcare data doesn’t cover the full diversity of patients. Such important details as race, age, pregnancy status, disability, or language may be missing, poorly coded, or too rare to analyze. Besides, differences between hospitals, devices, or vendors can also affect results. A model might be accurate overall but still fail for specific patient groups.

How we handle it at DeviQA:

Building representation tables across clinical subgroups before any model scoring.

Setting a minimum sample size bar for each group and marking groups as ‘no claim’ if they don’t meet the bar.

Filling gaps through targeted data collection or carefully validated synthetic data.

Breaking out test results by device, vendor, and site so that measurement-driven differences don’t hide in the averages.
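The first two steps above can be sketched in a few lines of Python. This is a minimal illustration, not DeviQA’s actual tooling: the record layout, the `representation_table` helper, and the bar of 30 records per group are all assumptions made for the example.

```python
from collections import Counter

MIN_GROUP_SIZE = 30  # hypothetical bar; the right value depends on the metric and study design


def representation_table(records, attribute, min_size=MIN_GROUP_SIZE):
    """Count records per subgroup and mark groups too small to support a claim."""
    counts = Counter(r.get(attribute, "missing") for r in records)
    total = sum(counts.values())
    return {
        group: {
            "n": n,
            "share": n / total,
            "status": "ok" if n >= min_size else "no claim",
        }
        for group, n in counts.items()
    }


# Toy test set: the last two records never captured the field at all.
records = (
    [{"sex": "female"}] * 40
    + [{"sex": "male"}] * 55
    + [{"sex": "intersex"}] * 3
    + [{}] * 2
)
table = representation_table(records, "sex")
for group, row in sorted(table.items()):
    print(f"{group:10s} n={row['n']:3d} share={row['share']:.2f} {row['status']}")
```

Running the table before any model scoring makes the "no claim" groups explicit, so nobody later reads a subgroup metric that rests on a handful of patients.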

Proxy variables masking bias

Such inputs as ZIP code, total spend, or frequency of visits may look neutral but are tied closely to race, income, or access to care. If your target label reflects use of care, like cost or visit count, the model learns past access patterns instead of clinical need. This leads to unfair AI recommendations even when a race or income field isn’t included.

How we handle it at DeviQA:

Running proxy checks to see how strongly seemingly neutral fields link to sensitive attributes.

Carrying out what-if tests by changing a suspicious proxy while keeping the clinical data the same.

Removing or de-correlating the suspicious features and comparing the results before and after.
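One way to run the first proxy check above is to measure the association between a seemingly neutral categorical field and a sensitive attribute. The sketch below uses Cramér’s V, a standard association measure that ranges from 0 (no association) to 1 (perfect association). The choice of measure, the toy ZIP codes, and the group labels are assumptions for illustration, not a description of DeviQA’s method.

```python
import math
from collections import Counter


def cramers_v(pairs):
    """Cramér's V between two categorical variables, given (feature, attribute) pairs."""
    n = len(pairs)
    joint = Counter(pairs)
    a_counts = Counter(a for a, _ in pairs)
    b_counts = Counter(b for _, b in pairs)
    chi2 = 0.0
    for a, na in a_counts.items():
        for b, nb in b_counts.items():
            expected = na * nb / n          # count expected if the two were independent
            observed = joint.get((a, b), 0)
            chi2 += (observed - expected) ** 2 / expected
    k = min(len(a_counts), len(b_counts)) - 1
    return math.sqrt(chi2 / (n * k)) if k > 0 else 0.0


# Toy data: ZIP code is strongly tied to a (hypothetical) sensitive group label.
pairs = (
    [("10001", "group_a")] * 45 + [("10001", "group_b")] * 5
    + [("20002", "group_a")] * 5 + [("20002", "group_b")] * 45
)
v = cramers_v(pairs)
print(f"Cramér's V between ZIP code and group: {v:.2f}")
```

A high value does not prove the model misuses the field, but it marks the field as a candidate for the what-if and de-correlation steps that follow. What counts as "high" is a judgment call to record in the test protocol.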

Rare but high-stakes edge cases

Children, pregnant women, people with several chronic conditions, and patients with rare diseases are barely represented in most datasets. These groups are small in number but face serious risk. Merging them into broader categories or tuning thresholds around the “average” case can mask serious failures.

How we handle it at DeviQA:

Creating special edge-case test suites that cover rare patient groups.

Checking the real decision impact for each group, using net-benefit or decision-curve analysis, not just overall accuracy.

Adding guardrails like human sign-off, scoped indications, or exclusions until the evidence is sufficient.

Collecting more data to increase sample sizes so that results become statistically reliable.
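The net-benefit check in the list above can be illustrated with the standard decision-curve formula: net benefit = TP/n − (FP/n) × pt/(1 − pt), where pt is the threshold probability at which a patient would be flagged. The sketch below applies it per group. The cohort names, labels, and scores are made up for the example.

```python
def net_benefit(y_true, y_score, threshold):
    """Net benefit at a threshold probability: TP/n - FP/n * pt / (1 - pt)."""
    n = len(y_true)
    tp = sum(1 for y, s in zip(y_true, y_score) if s >= threshold and y == 1)
    fp = sum(1 for y, s in zip(y_true, y_score) if s >= threshold and y == 0)
    return tp / n - fp / n * threshold / (1 - threshold)


# Toy cohorts: same prevalence, but the model misses a true case in the rare cohort.
cohorts = {
    "general_adult": ([1, 1, 0, 0], [0.8, 0.7, 0.6, 0.1]),
    "rare_cohort": ([1, 1, 0, 0], [0.8, 0.1, 0.6, 0.1]),
}
threshold = 0.2
results = {
    name: net_benefit(y_true, y_score, threshold)
    for name, (y_true, y_score) in cohorts.items()
}
for name, nb in results.items():
    print(f"{name}: net benefit at pt={threshold} is {nb:.4f}")
```

Because net benefit weighs missed cases against unnecessary interventions at the chosen threshold, a gap between cohorts points to a difference in decision impact that overall accuracy would not show.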

No clear bias benchmarks

There isn’t one standard fairness score that everyone around the globe can use. Base rates vary across groups, and metrics can conflict. The right target depends on the decision context, be it screening, allocation of scarce resources, or risk communication. That’s why teams struggle to set clear test criteria.

How we handle it at DeviQA:

Matching metric selection to a written harm model (e.g., sensitivity parity for screening; PPV parity and calibration for allocation; calibration parity for counseling).

Defining acceptable subgroup deltas and confidence intervals at the operating threshold, not just across curves.

Capturing trade-offs and the ‘why’ in a test protocol that ships with the product as part of the evidence.

Revisiting thresholds as disease rates, resources, or policies change and making adjustments.

Regulation that moves faster than your release plan

Regulations for healthcare AI keep tightening. Requirements for update control, representative data, clear documentation, and ongoing real-world monitoring are changing across regions. Teams built around fixed models and one-time validations end up with gaps, especially on subgroup performance and post-launch monitoring.

How we handle it at DeviQA:

Aligning the lifecycle to current rules and embedding fairness checks as explicit release gates.

Preparing audit-ready artifacts for every release, including test protocols, datasheets, model cards with subgroup tables, and change-impact results.

Regularly running ‘regulatory delta’ reviews and updating the evidence and monitoring plan whenever expectations shift.

Executing post-market testing and feeding its findings into change control and release governance to ensure the compliance of each update.


Key methods of bias testing

AI challenges in healthcare often come down to bias, so professional QA providers like DeviQA treat bias testing not as a one-off check or an abstract ethics exercise but as a concrete, repeatable QA discipline with established methods. Below are the key methods that help catch data, human, algorithmic, and deployment bias in AI.

Subgroup error rate analysis

Instead of only reporting overall accuracy, QA teams break down model performance across subgroups such as race, age, sex, and language preference. This shows whether certain patient groups are more likely to be misdiagnosed or misclassified, so blind spots become visible and addressable.

How it works:

Check sensitivity, specificity, PPV/NPV, AUC, and calibration by race, age, language, gender, pregnancy status, disability, and by site and device/vendor.

Report confidence intervals so that small gaps don’t get hand-waved away.

Decide at the live threshold (where the model actually triggers), not just across curves.
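A minimal sketch of the steps above, limited to sensitivity for brevity: it computes the metric per subgroup at one operating threshold and attaches a Wilson score interval, a common choice for proportions with small samples. The row layout `(group, y_true, score)`, the group names, and the threshold of 0.5 are assumptions for the example.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if n == 0:
        return (0.0, 1.0)  # no data: the interval is uninformative
    p = successes / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return (center - half, center + half)


def sensitivity_by_group(rows, threshold):
    """rows: iterable of (group, y_true, score); y_true is 1 when disease is present."""
    counts = {}
    for group, y_true, score in rows:
        if y_true != 1:
            continue  # sensitivity only looks at truly positive patients
        tp, positives = counts.get(group, (0, 0))
        counts[group] = (tp + int(score >= threshold), positives + 1)
    return {
        group: {
            "n_pos": positives,
            "sensitivity": tp / positives,
            "ci": wilson_interval(tp, positives),
        }
        for group, (tp, positives) in counts.items()
    }


rows = (
    [("group_a", 1, 0.9)] * 9 + [("group_a", 1, 0.2)] * 1
    + [("group_b", 1, 0.9)] * 6 + [("group_b", 1, 0.2)] * 4
    + [("group_a", 0, 0.1)] * 20 + [("group_b", 0, 0.1)] * 20
)
report = sensitivity_by_group(rows, threshold=0.5)
for group, r in sorted(report.items()):
    lo, hi = r["ci"]
    print(f"{group}: sensitivity={r['sensitivity']:.2f} "
          f"(95% CI {lo:.2f} to {hi:.2f}, n_pos={r['n_pos']})")
```

With only ten positive cases per group the intervals are wide, which is exactly why they belong next to the point estimates: they show when an apparent gap needs more data before anyone can call it real or dismiss it.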

Stratified test case design

Traditional test sets usually prioritize ‘average’ patients. Stratified design means creating test scenarios that deliberately include underrepresented or high-risk groups. This ensures that fairness checks aren’t just theoretical but are applied to realistic patient profiles that would otherwise fall through the cracks.

How it works:

Create test sets that cover the population you serve.

Include intersections, not only single attributes.

Treat edge cohorts as first-class partitions.

Set a minimum sample size for each patient group.

Fairness metric benchmarking

To make bias measurable, QA experts use fairness metrics that set clear fairness thresholds and provide trackable QA outcomes.

How it works:

Pick fairness metrics that fit your decision and set clear limits.

Consider the following metrics:

Equal opportunity checks that patients who have the condition are equally likely to be correctly identified, regardless of subgroup (equal true positive rates).

Demographic parity compares how often each group receives a positive prediction, which helps reveal whether any group is systematically excluded from access.

Equalized odds requires both true positive and false positive rates to match across groups, so that diseased and healthy patients alike are treated fairly across demographics.

Predictive parity (calibration) shows whether a given risk score means the same thing for every group.

Write down the thresholds you accept and the trade-offs you make.
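The four metrics above can all be derived from per-group confusion counts. The sketch below is one simple way to compute the gaps between groups. The helper names and the toy predictions are hypothetical, and predictive parity is approximated here by comparing positive predictive value (PPV) rather than full calibration curves.

```python
from collections import defaultdict


def ratio(num, den):
    return num / den if den else None  # None when the rate is undefined


def group_rates(rows):
    """rows: (group, y_true, y_pred) with 0/1 values. Returns per-group rates."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, y_true, y_pred in rows:
        key = ("t" if y_true == y_pred else "f") + ("p" if y_pred == 1 else "n")
        counts[group][key] += 1
    rates = {}
    for group, c in counts.items():
        n = sum(c.values())
        rates[group] = {
            "tpr": ratio(c["tp"], c["tp"] + c["fn"]),    # equal opportunity
            "fpr": ratio(c["fp"], c["fp"] + c["tn"]),    # with tpr: equalized odds
            "ppv": ratio(c["tp"], c["tp"] + c["fp"]),    # predictive parity
            "selection_rate": ratio(c["tp"] + c["fp"], n),  # demographic parity
        }
    return rates


def gap(rates, metric):
    """Largest difference in a metric between any two groups."""
    values = [r[metric] for r in rates.values() if r[metric] is not None]
    return max(values) - min(values) if values else None


rows = (
    [("group_a", 1, 1)] * 8 + [("group_a", 1, 0)] * 2
    + [("group_a", 0, 1)] * 2 + [("group_a", 0, 0)] * 8
    + [("group_b", 1, 1)] * 5 + [("group_b", 1, 0)] * 5
    + [("group_b", 0, 1)] * 1 + [("group_b", 0, 0)] * 9
)
rates = group_rates(rows)
for metric in ("tpr", "fpr", "ppv", "selection_rate"):
    print(f"{metric} gap: {gap(rates, metric):.3f}")
```

In this toy data the PPV gap is small while the true positive rate gap is large, a reminder that the metrics can disagree and that the acceptable limit for each one has to be chosen for the decision at hand.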

Counterfactual testing

This method checks whether AI model predictions change when sensitive attributes are changed while the medical details stay the same. For instance, if a patient’s clinical data is identical but the model gives a different outcome when ‘female’ is replaced with ‘male,’ it signals reliance on features that shouldn’t affect care decisions. Counterfactual testing is an efficient way to detect hidden dependencies.

How it works:

Create what-if cases by changing a suspected proxy or a permitted sensitive field while keeping clinical signals the same.

If the prediction swings without a clinical reason, you’ve found a bias to fix.

Confirm by removing or de-correlating the proxy and rechecking results.
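The what-if procedure above can be sketched as a small harness around any scoring function. Everything here is illustrative: `toy_risk_model` is a deliberately flawed stand-in rather than a real clinical model, and the field names and the tolerance of 0.05 are assumptions.

```python
def counterfactual_gaps(predict, patients, attribute, values, tolerance=0.05):
    """Re-score each patient with only `attribute` varied; report swings above tolerance."""
    findings = []
    for patient in patients:
        scores = {v: predict({**patient, attribute: v}) for v in values}
        gap = max(scores.values()) - min(scores.values())
        if gap > tolerance:
            findings.append({"patient": patient, "scores": scores, "gap": gap})
    return findings


def toy_risk_model(p):
    """Hypothetical model with a built-in flaw: it lowers the score for female patients."""
    score = 0.002 * p["age"] + 0.3 * p["troponin_elevated"]
    if p["sex"] == "female":
        score -= 0.1
    return max(0.0, min(1.0, score))


def fair_risk_model(p):
    """Same clinical logic without the sex adjustment, for comparison."""
    return max(0.0, min(1.0, 0.002 * p["age"] + 0.3 * p["troponin_elevated"]))


patients = [
    {"age": 60, "troponin_elevated": 1, "sex": "male"},
    {"age": 40, "troponin_elevated": 0, "sex": "female"},
]
findings = counterfactual_gaps(toy_risk_model, patients, "sex", ["female", "male"])
for f in findings:
    print(f"gap={f['gap']:.2f} scores={f['scores']}")
```

The harness only varies one field and holds the clinical inputs fixed, so any swing it reports comes from that field alone. Whether the swing is clinically justified is a question for clinicians, not for the test itself.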

Fairness regression testing in post-deployment monitoring

A model that is unbiased at launch may drift over time as patient populations evolve and new devices are introduced. With fairness regression testing, bias checks turn into ongoing monitoring. This lets teams avoid silent degradation that could otherwise harm patients long after release.

How it works:

Track subgroup sensitivity, PPV, and calibration weekly by site and device.

Alert on divergence, not just on overall drops.

Run non-inferiority checks before every update.

Keep an evidence trail from alert to mitigation and re-test.
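"Alert on divergence" from the list above can be illustrated with a check that compares the gap between subgroups each week against the gap measured at release. The weekly figures, site names, and the allowed gap increase of 0.05 are invented for the example.

```python
def divergence_alerts(weekly_metrics, baseline_gap, max_gap_increase=0.05):
    """weekly_metrics: {week: {group: sensitivity}}. Flags weeks where the subgroup gap widens."""
    alerts = []
    for week, by_group in sorted(weekly_metrics.items()):
        gap = max(by_group.values()) - min(by_group.values())
        if gap - baseline_gap > max_gap_increase:
            worst = min(by_group, key=by_group.get)  # group with the lowest sensitivity
            alerts.append((week, worst, round(gap, 3)))
    return alerts


# Toy monitoring data: site_b degrades while site_a improves,
# so the average across sites moves much less than the gap does.
weekly_metrics = {
    "2025-W01": {"site_a": 0.90, "site_b": 0.88},
    "2025-W02": {"site_a": 0.91, "site_b": 0.86},
    "2025-W03": {"site_a": 0.92, "site_b": 0.80},
}
alerts = divergence_alerts(weekly_metrics, baseline_gap=0.02)
for week, group, gap in alerts:
    print(f"{week}: gap {gap} exceeds baseline, worst group: {group}")
```

Each alert would then start the evidence trail described in the last step: what triggered it, what was changed, and what the re-test showed.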

Best practices to start testing your healthcare AI system for biases

How to reduce bias in AI? Bias testing is one of the most effective measures you can take. While it requires solid expertise in quality assurance, AI, and healthcare, there is still a lot your team can do internally. Here are the best practices to start with:

Include fairness KPIs in project requirements. Like accuracy or speed, fairness needs to be clearly defined. Set clear goals, such as requiring sensitivity within ±5% across racial or gender groups, or demanding that no subgroup falls below a specific threshold. Written into the requirements, fairness goals won't become afterthoughts.

Use diverse data across test environments. Relying on a single hospital or device vendor for testing often leads to blind spots. We recommend you test across data from multiple clinical settings and devices.

Build stratified test sets with edge cases. Your tests shouldn’t just reflect the majority population. Include subgroups that are often overlooked, for example, older patients with comorbidities or those with limited English proficiency. These cases often reveal weaknesses that averages hide.

Validate outputs across multiple patient groups. A generally accurate model may still fail for a specific subgroup. That’s why it’s vital to break down results by demographics, language, device type, and clinical context to determine whether certain patients are at higher risk of misdiagnosis.

Schedule post-release bias audits. Models that seemed fair at rollout can quietly become biased over time due to changes in demographics, hospital coding practices, etc. Conduct post-release audits quarterly, or at least annually, to track subgroup metrics and flag drift early, before it affects patient outcomes.
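A fairness KPI like the one in the first practice above can be turned into an automated release gate. The sketch below reads "within ±5%" as a gap of at most 5 percentage points from the best-performing group, which is one possible interpretation. The floor of 0.80, the group names, and the numbers are assumptions for illustration.

```python
def fairness_gate(sensitivity_by_group, max_gap=0.05, floor=0.80):
    """Return a list of KPI violations; an empty list means the gate passes."""
    failures = []
    best = max(sensitivity_by_group.values())
    for group, value in sorted(sensitivity_by_group.items()):
        if value < floor:
            failures.append(f"{group}: sensitivity {value:.2f} is below the floor {floor:.2f}")
        elif best - value > max_gap:
            failures.append(f"{group}: sensitivity {value:.2f} trails the best group by more than {max_gap:.2f}")
    return failures


measured = {"group_a": 0.91, "group_b": 0.89, "group_c": 0.84}
failures = fairness_gate(measured)
if failures:
    print("Fairness gate FAILED:")
    for line in failures:
        print(" -", line)
else:
    print("Fairness gate passed")
```

Wiring a check like this into the release pipeline keeps the written KPI and the shipped model from drifting apart between audits.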

The practices above can serve as a starter kit. However, if you need to move faster and stay confident in the reliability of your healthcare solution, specialized providers of software testing services for healthcare, like DeviQA, bring the needed expertise, infrastructure, and battle-tested strategies to help you meet your QA goals.

Final thought: Bias testing is non-negotiable for healthcare AI

If patient outcomes matter, skipping bias testing isn’t an option. The cost always shows up later: in production failures, regulatory findings, or real harm.

Practical tips to get this right:

Test where decisions are made. Measure bias at the live operating threshold, not just across ROC curves. Fairness on paper means nothing if it breaks at decision time.

Break results down, always. Never ship only aggregate metrics. Review sensitivity, calibration, and error rates by race, age, sex, language, site, and device.

Treat rare patients as high-risk, not outliers. Small cohorts (children, pregnant patients, rare conditions) need explicit test coverage. Averages hide their failures.

Watch models after launch. Bias can emerge over time due to data drift, new devices, or changing populations. Fairness checks must continue post-deployment.

Document your trade-offs. Regulators don’t expect perfection. They expect evidence that you identified risks, measured them, and made informed decisions.

Healthcare AI earns trust only when it proves fairness under real conditions, not assumptions.

If you want bias testing embedded into your healthcare AI lifecycle as a repeatable QA process, talk to DeviQA before issues surface in production or patient outcomes.


About the author

Senior QA engineer

Mykhailo Ralduhin is a Senior QA Engineer at DeviQA, specializing in building stable, well-structured testing processes for complex software products.