Written by: Ievgen Ievdokymov, Senior AQA Engineer

Posted: 12.05.2026

For QA leads, engineering managers, and CTOs who want compliance testing that survives an audit, not just a checklist that passes a sprint.

Here's an honest description of how compliance testing works at most fintech companies: someone runs a security scan before the big release. Legal signs off on a checklist. The team holds their breath and ships. And it works, until a regulator asks for evidence, an audit finds a gap, or a breach exposes a control that was documented but never actually tested.

That's not a compliance testing framework. That's a compliance testing ritual. The difference matters enormously in a sector where cumulative GDPR fines reached €5.88 billion by January 2025, DORA enforcement began in earnest, and PCI DSS v4.0 introduced more than 50 new requirements with hard enforcement deadlines.

This guide walks you through exactly how to build a fintech compliance testing framework that maps to your actual regulatory obligations, integrates into your delivery pipeline, and produces the kind of traceable, auditable evidence that regulators and external auditors accept. Six steps, with practical detail at each one.

What a compliance testing framework actually is (and what it's not)

Before we get into the steps, let's be precise about what you're building, because this terminology gets used loosely, and the loose version leads to loose coverage.

A compliance testing framework is a structured system that maps every regulatory obligation your product carries to a specific, maintained test case; assigns ownership for each domain; integrates testing into your delivery pipeline; and produces traceable documentation that an auditor can follow from a regulatory requirement to the test that validates it to the evidence that proves it ran.

It is not a compliance checklist. It is not a pre-release security scan. It is not a legal review. And it is definitely not a one-time project.

The reason this distinction matters for fintech specifically: your regulatory landscape isn't a single regulation. It's a layered, overlapping set of frameworks (PCI DSS for payment data, GDPR for personal data, PSD2 for payment services, DORA for operational resilience, SOX if you're a public company or a vendor to one), each with its own requirements, timelines, and penalty structures. A static checklist can't navigate that complexity. A living framework can.

A compliance testing framework is living infrastructure. It evolves when your product changes, when regulations update, and when new frameworks enter scope. If yours hasn't been updated since it was built, it's not a framework; it's documentation of what you used to comply with.

Step 1: Map your regulatory obligations before you write a single test case

This is the step most teams skip, and the reason their frameworks have invisible gaps. You can't build a fintech QA compliance framework without knowing precisely which regulations apply, which specific clauses within them create testing obligations, and where those frameworks overlap.

The overlap is where the efficiency lives. DORA and GDPR both require breach notification: DORA within 24 hours, GDPR within 72. A single, well-designed incident response test can satisfy both. Vendor risk management requirements appear across DORA, NIS2, GDPR, and EBA Guidelines with significant similarity. A unified third-party testing approach covers all four. Identify these overlaps early, and your framework will require far fewer total test cases than a framework built regulation by regulation.

How to run the regulatory mapping

Start with your data flows: what personal data do you collect, where does it go, who processes it, and where is it stored? This determines your GDPR scope. Every system that touches personal data is in scope; every third party that receives it needs a Data Processing Agreement.

Map every cardholder data touchpoint: any storage, transmission, or processing of card data puts you in PCI DSS scope. PCI DSS v4.0 is now the enforced standard, with Requirement 6.4.2 specifically mandating an automated technical solution that continually detects and prevents web-based attacks on public-facing web applications.

Determine jurisdiction and entity type: EU-regulated financial entities have been in DORA scope since January 2025. Payment services fall under PSD2. Public companies and their service vendors face SOX obligations. International operations add further layers: CCPA for California users, and jurisdiction-specific banking regulations in each market you serve.

Document every overlap explicitly: for each regulatory requirement, note which other frameworks have equivalent or complementary requirements. This becomes the efficiency map for your test case library.

The output of this step is a regulatory obligation register: a document listing every framework in scope, the specific articles or requirements that apply to your product, and an initial owner. This is the master reference that every test case traces back to. Without it, you're building a test library with no anchor, and when regulations change, you won't know which tests need updating.
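A minimal sketch of how the register could be kept as data rather than as a free-form document. The field names, the `overlap_groups` helper, and the sample entries are illustrative assumptions, not a prescribed schema; the DORA clause label in particular is a placeholder for whichever article your legal team identifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Obligation:
    """One register row: a clause that creates a testing obligation."""
    framework: str                   # e.g. "GDPR"
    clause: str                      # e.g. "Art. 33"
    summary: str
    owner: str                       # initial owner assigned during mapping
    overlaps: tuple[str, ...] = ()   # IDs of equivalent clauses elsewhere

    @property
    def obligation_id(self) -> str:
        return f"{self.framework}:{self.clause}"


def overlap_groups(register: list[Obligation]) -> list[set[str]]:
    """Merge obligations that point at each other into overlap groups."""
    groups: list[set[str]] = []
    for ob in register:
        linked = {ob.obligation_id, *ob.overlaps}
        # Absorb every existing group that shares an ID with this one.
        for group in [g for g in groups if g & linked]:
            linked |= group
            groups.remove(group)
        groups.append(linked)
    # Only groups with more than one member represent an overlap.
    return [g for g in groups if len(g) > 1]


# Hypothetical sample entries; clause labels and owners are placeholders.
REGISTER = [
    Obligation("GDPR", "Art. 33", "Breach notification within 72 hours",
               owner="compliance", overlaps=("DORA:incident-reporting",)),
    Obligation("DORA", "incident-reporting",
               "Incident notification within 24 hours", owner="compliance"),
    Obligation("PCI DSS", "Req. 3", "Protect stored cardholder data",
               owner="security"),
]

if __name__ == "__main__":
    for group in overlap_groups(REGISTER):
        print("One test can serve:", sorted(group))
```

Keeping overlaps as explicit links on each row means the efficiency map falls out of the register itself instead of living in a separate spreadsheet.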

This is a joint exercise between QA, legal/compliance, and engineering. QA alone doesn't know which regulations apply. Legal alone doesn't know what's technically testable. The mapping requires both perspectives in the same room.

Step 2: Build your test case library, mapped to regulations, not features

Here's the structural shift that separates a compliance testing framework from a standard QA test suite: standard test cases answer 'does this feature work?' Compliance test cases answer 'does this product meet this specific regulatory requirement?'

When you organize by feature, your tests disappear when features are deprecated or refactored. When you organize by regulation, your tests persist regardless of how the underlying code changes, and when a regulation updates, you can immediately identify every test case affected.

What every compliance test case must contain

The regulatory clause it satisfies: PCI DSS Requirement 6.4.2, GDPR Article 17, DORA Article 25, PSD2 SCA requirements. Be specific.

The technical control being validated: not 'encryption is enabled' but 'AES-256 encryption is applied to all cardholder data at rest in the payment database.'

The expected evidence output: what does a passing test produce? A log entry, an encrypted field confirmation, a deletion record, an audit trail entry. Define this before the test is written.

The pass/fail criteria that an auditor would accept: not just 'the test passes in CI,' but the standard against which the result is evaluated.

The owner responsible for maintaining the test as the regulation evolves: an unowned test case is a test case that won't get updated when the rule changes.
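The five elements above can be enforced as a record type, so an incomplete test case is caught before it enters the library. This is a sketch under assumed names: `ComplianceTestCase`, `missing_fields`, and the sample values are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class ComplianceTestCase:
    test_id: str
    regulatory_clauses: list[str]   # the clause(s) the test satisfies
    technical_control: str          # the specific control being validated
    expected_evidence: str          # what a passing run must produce
    pass_criteria: str              # the standard an auditor would accept
    owner: str                      # who maintains it as the rule evolves


def missing_fields(case: ComplianceTestCase) -> list[str]:
    """Return the names of required fields left empty on a test case."""
    return [f.name for f in fields(case) if not getattr(case, f.name)]


# Hypothetical example record; the ID and wording are placeholders.
encryption_case = ComplianceTestCase(
    test_id="CT-001",
    regulatory_clauses=["PCI DSS Req. 3", "GDPR Art. 32"],
    technical_control="AES-256 encryption is applied to all cardholder data "
                      "at rest in the payment database",
    expected_evidence="Encrypted field confirmation per sampled record",
    pass_criteria="Every sampled cardholder data field is stored encrypted",
    owner="",  # deliberately left empty to show the check firing
)

if __name__ == "__main__":
    print("Missing:", missing_fields(encryption_case))
```

A check like this could run when a test case is added or edited, which is one way to make "no unowned test cases" a rule rather than a hope.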

The four compliance testing categories every fintech framework needs

Your test case library should cover four domains, and every test case should belong to exactly one primary domain, even if it satisfies requirements across multiple frameworks:

Security controls validation: encryption at rest and in transit, access control enforcement, vulnerability scan results, authentication security. Relevant to: PCI DSS, GDPR Article 32, DORA.

Data handling and privacy: consent management, data subject rights workflows (DSAR, erasure, portability), retention policy enforcement, test data governance in non-production environments. Relevant to: GDPR, CCPA.

Transaction and payment integrity: authentication flows, SCA compliance validation, transaction logging completeness, AML rule execution, KYC verification accuracy. Relevant to: PCI DSS, PSD2, AML regulations.

Operational resilience: incident detection and notification pipelines, backup and recovery validation, third-party integration security, failover behavior. Relevant to: DORA, PCI DSS, GDPR Article 33.

The overlap dividend makes this structurally efficient. A test that validates encryption of cardholder data satisfies PCI DSS Requirement 3. If that same test also validates encryption of personal data fields, it partially satisfies GDPR Article 32. Document this cross-framework mapping explicitly in the test case record; it reduces your total test count and makes audit evidence cleaner and more compelling.
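One way to make the cross-framework mapping queryable is to invert the test-to-clause mapping. The sketch below assumes clause IDs in a `framework:clause` format and uses hypothetical test IDs.

```python
from collections import defaultdict


def framework_of(clause_id: str) -> str:
    """'GDPR:Art. 32' -> 'GDPR' (assumes a 'framework:clause' ID format)."""
    return clause_id.split(":", 1)[0]


def index_by_clause(test_clauses: dict[str, list[str]]) -> dict[str, list[str]]:
    """Invert test -> clauses into clause -> tests."""
    index: dict[str, list[str]] = defaultdict(list)
    for test_id, clauses in test_clauses.items():
        for clause in clauses:
            index[clause].append(test_id)
    return dict(index)


def cross_framework_tests(test_clauses: dict[str, list[str]]) -> list[str]:
    """Tests whose clauses span more than one framework."""
    return sorted(
        test_id
        for test_id, clauses in test_clauses.items()
        if len({framework_of(c) for c in clauses}) > 1
    )


# Hypothetical mapping taken from the encryption example above.
TEST_CLAUSES = {
    "CT-001-encryption-at-rest": ["PCI DSS:Req. 3", "GDPR:Art. 32"],
    "CT-002-erasure-workflow": ["GDPR:Art. 17"],
}

if __name__ == "__main__":
    print(index_by_clause(TEST_CLAUSES))
    print(cross_framework_tests(TEST_CLAUSES))
```

The clause index answers "which tests are affected if this clause changes?", and the cross-framework list shows where one test is already doing the work of two.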

Step 3: Integrate compliance tests into your CI/CD pipeline, not just your release checklist

If your compliance testing only runs at release, you're already behind. By the time a compliance-breaking change reaches a release gate, it may have been in the codebase for weeks, already deployed to staging environments, already visible in architecture reviews, already expensive to unwind.

CI/CD policy gates are the architectural shift that makes compliance testing automation work at fintech speed. They function as automated governance checkpoints embedded in the build pipeline, blocking non-compliant changes from reaching production before they can impact customers or regulatory posture.
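As a rough illustration, a policy gate can be a small script that compares deployed configuration against a list of required controls and returns a non-zero exit code when one is absent or misconfigured. The control names and the nested-dict config shape here are assumptions for the sketch; a real gate would read them from your own policy and infrastructure definitions.

```python
# Hypothetical control names; a real gate would load these from policy.
REQUIRED_CONTROLS = {
    "auth.mfa_enabled": True,
    "database.encryption_at_rest": True,
}


def lookup(config: dict, dotted_path: str):
    """Walk a nested dict by dotted path; return None if any key is absent."""
    node = config
    for key in dotted_path.split("."):
        if not isinstance(node, dict) or key not in node:
            return None
        node = node[key]
    return node


def gate_violations(config: dict) -> list[str]:
    """List every required control that is absent or misconfigured."""
    violations = []
    for path, expected in REQUIRED_CONTROLS.items():
        actual = lookup(config, path)
        if actual != expected:
            violations.append(f"{path}: expected {expected!r}, found {actual!r}")
    return violations


def gate_exit_code(config: dict) -> int:
    """Return 0 to let the build continue, 1 to fail it."""
    problems = gate_violations(config)
    for problem in problems:
        print("COMPLIANCE GATE FAILED:", problem)
    return 1 if problems else 0


if __name__ == "__main__":
    deployed = {"auth": {"mfa_enabled": True},
                "database": {"encryption_at_rest": False}}
    print("exit code:", gate_exit_code(deployed))
```

In a pipeline, the build step would pass this return value to `sys.exit`, so a missing control fails the build without anyone needing to review it manually.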

What belongs in the CI/CD pipeline (every build)

SAST (Static Application Security Testing): scans code for the insecure coding patterns that PCI DSS Requirement 6.2.4 (Requirement 6.5 in v3.2.1) and your secure coding policy require you to prevent. Catches issues at the point of introduction, when they cost the least to fix.

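DAST (Dynamic Application Security Testing): runs against the deployed application and catches vulnerabilities that static analysis can't detect. It supports PCI DSS Requirement 6.4, which covers protecting public-facing web applications against attacks; Requirement 6.4.2 itself mandates an automated technical solution that continually detects and prevents web-based attacks, so DAST complements that control rather than replacing it.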

SCA (Software Composition Analysis): validates third-party library security. Both PCI DSS and DORA require that vulnerabilities in external components are tested for and patched. Given how heavily fintech applications depend on external libraries, unscanned dependencies are a persistent compliance gap.

Data handling checks: automated confirmation that personal data isn't landing in log files, test environment databases, API error responses, or unencrypted payloads. These are the smallest and most common GDPR violations in financial applications.

Compliance policy gates: binary checks that block deployment if a defined compliance control is absent or misconfigured. MFA removed from a critical authentication flow? Encryption disabled on a database? The build fails. No manual review required.
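The data handling check in the list above could start as simply as scanning log output for values that look like personal or cardholder data. The sketch below is a deliberately simplistic assumption: it looks only for email addresses and for 13 to 19 digit sequences that pass the Luhn checksum, and the function names are hypothetical. A production check would cover more data types and use patterns tuned to your own logs.

```python
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
PAN_CANDIDATE_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit counting from the right."""
    total = 0
    for position, char in enumerate(reversed(digits)):
        value = int(char)
        if position % 2 == 1:
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def scan_line(line: str) -> list[str]:
    """Return the kinds of sensitive data that appear in one log line."""
    findings = []
    if EMAIL_RE.search(line):
        findings.append("email")
    for match in PAN_CANDIDATE_RE.finditer(line):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            findings.append("card_number")
            break
    return findings


if __name__ == "__main__":
    sample_log = [
        "login ok user=alice@example.com",
        "charge failed for card 4111 1111 1111 1111",
        "request 1234 completed in 20 ms",
    ]
    for line in sample_log:
        print(scan_line(line), "<-", line)
```

The Luhn filter matters because long digit runs such as order IDs are common in logs; without it the check would produce enough false positives to get switched off.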

What runs on a scheduled cadence (not every build)

Full regulatory test suite: weekly or bi-weekly against a production-representative environment. This is the deep run: all test cases, full evidence collection, finding log updated.

Data retention enforcement validation: confirms scheduled deletion processes ran correctly at the intervals mandated by your documented retention policy.

Access control audit: confirms role-based access configurations match the documented policy. This drifts over time as teams change and permissions are informally adjusted.

Third-party integration compliance: DPA coverage verification, API security posture, sub-processor change review.
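For the access control audit in the list above, the core of the check is a diff between documented and actual role permissions. This sketch assumes both can be exported as a mapping of role name to permission set; the role and permission names are hypothetical.

```python
def access_drift(
    documented: dict[str, set[str]],
    actual: dict[str, set[str]],
) -> dict[str, dict[str, set[str]]]:
    """Report, per role, permissions that differ from the documented policy."""
    drift = {}
    for role in documented.keys() | actual.keys():
        expected = documented.get(role, set())
        found = actual.get(role, set())
        unexpected = found - expected   # granted but not documented
        missing = expected - found      # documented but not granted
        if unexpected or missing:
            drift[role] = {"unexpected": unexpected, "missing": missing}
    return drift


if __name__ == "__main__":
    # Hypothetical policy and live configuration.
    policy = {
        "support": {"read_customer"},
        "payments_admin": {"read_customer", "refund"},
    }
    live = {
        "support": {"read_customer", "refund"},
        "payments_admin": {"read_customer", "refund"},
        "intern": {"read_customer"},
    }
    for role, diff in sorted(access_drift(policy, live).items()):
        print(role, diff)
```

Roles that exist only in the live system show up with every permission flagged as unexpected, which is usually the drift an auditor cares about most.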

What stays manual (specialist-triggered)

Penetration testing: annually at minimum, or triggered by significant architecture changes. Required by PCI DSS and DORA; cannot be replaced by automated scanning.

Social engineering simulation: in scope for DORA Threat-Led Penetration Testing. Tests the human layer that automated tools can't reach.

DSAR response completeness testing: verifying that a data subject access request returns all personal data from all systems requires cross-system manual validation that no automation currently handles reliably.

Audit trail quality review: confirming that log content is audit-acceptable, not just that logs exist.

The rule: automate the high-frequency, high-confidence tests. Reserve human time for high-judgment, adversarial, and cross-system tests that automation can't replicate. Both are required; the question is which type serves each testing objective.

Step 4: Define ownership, because compliance testing needs a responsible team, not a committee

The most common reason fintech compliance testing frameworks fail in practice isn't tooling, coverage, or budget. It's ownership. When everyone is responsible for compliance testing, no one is, and regulatory changes fall through the gap between teams who each assumed someone else was tracking them.

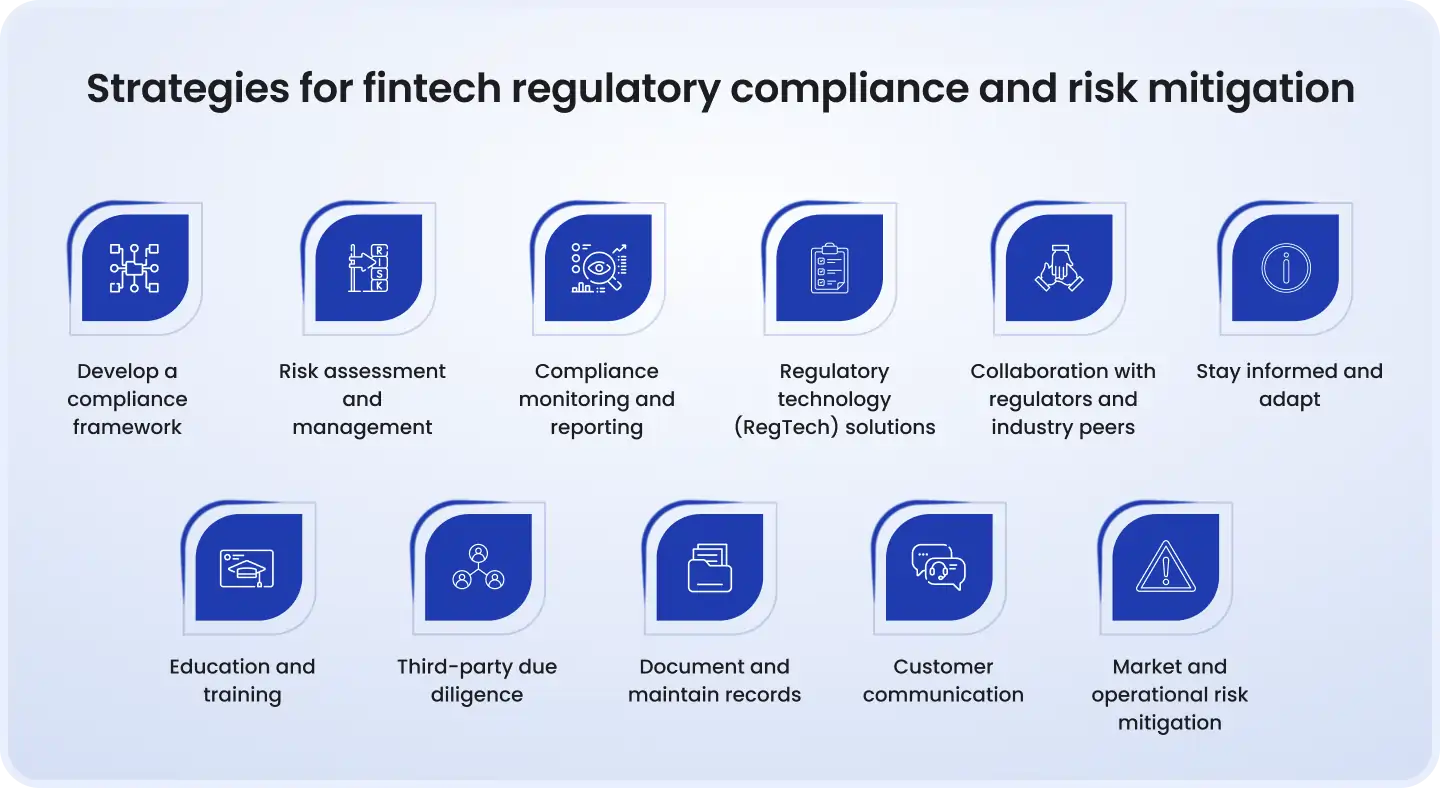

Compliance testing requires four groups: QA, security, legal/compliance, and engineering. Their roles are different and complementary. The mistake is treating them as interchangeable or assuming that any one group can carry the full responsibility.

| Responsibility | QA | Security | Legal/Compliance | Engineering |
|---|---|---|---|---|
| Test case library, creation and maintenance | Owns | Contributes | Contributes | Informs |
| CI/CD pipeline integration | Owns | Contributes | Informs | Contributes |
| SAST / DAST tooling configuration | Contributes | Owns | Informs | Contributes |
| Penetration testing scope and execution | Contributes | Owns | Informs | Supports |
| Regulatory monitoring and change tracking | Informs | Informs | Owns | Informs |
| Translating regulation changes into test cases | Contributes | Contributes | Owns | Informs |
| Technical control implementation | Informs | Contributes | Informs | Owns |
| Finding remediation within defined SLAs | Contributes | Contributes | Informs | Owns |
| Audit evidence package preparation | Owns | Contributes | Reviews | Contributes |

The sprint integration model that actually works

At the start of every sprint that introduces new data processing, new authentication flows, new payment features, or new third-party integrations, QA and compliance run a five-minute regulatory implications review:

What new personal data does this feature collect or process?

Is the lawful basis documented for any new processing?

Does this touch cardholder data, authentication, or a regulated payment flow?

Are there automated decision-making elements that trigger GDPR Article 22 obligations?

Does this create any new third-party data flows that require a DPA or transfer mechanism?

If the answer to any of these is 'yes' or 'maybe,' new compliance test cases are written before the feature ships, not as a post-launch audit. The cost of writing a test case in the sprint is trivial compared to the cost of retrofitting compliance controls after deployment.

The severity matrix: When does a compliance failure block a release?

Not every compliance test failure should block a release. A low-severity finding in a non-production environment is a different situation from a PCI DSS control failure in the cardholder data environment. Define the severity matrix explicitly and make it enforceable:

Critical: failure of a control mandated by PCI DSS, DORA, or GDPR with direct financial or data subject risk. Blocks release immediately.

High: failure of a documented compliance control that creates regulatory exposure but not immediate data subject risk. Requires risk acceptance from the CISO or DPO before release proceeds.

Medium: process or documentation gap without immediate technical exposure. Enters the compliance backlog with a defined remediation timeline.

Low: informational finding with no immediate exposure. Logged and reviewed in the quarterly compliance review cycle.
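To make the matrix enforceable rather than advisory, the release decision can be computed from the open findings. The sketch below encodes the four levels as described; the `Finding` type, the `risk_accepted_by` field, and the three outcome strings are assumptions introduced for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Severity(Enum):
    CRITICAL = 1
    HIGH = 2
    MEDIUM = 3
    LOW = 4


@dataclass
class Finding:
    title: str
    severity: Severity
    risk_accepted_by: Optional[str] = None   # e.g. "CISO" or "DPO"


def release_decision(findings: list[Finding]) -> str:
    """Apply the severity matrix to the open findings for a release."""
    # Critical findings block the release and cannot be risk-accepted.
    if any(f.severity is Severity.CRITICAL for f in findings):
        return "blocked"
    # High findings need a recorded risk acceptance before release.
    if any(f.severity is Severity.HIGH and not f.risk_accepted_by
           for f in findings):
        return "needs_risk_acceptance"
    # Medium and low findings go to the backlog or quarterly review.
    return "proceed"


if __name__ == "__main__":
    open_findings = [
        Finding("Consent log missing timestamp", Severity.MEDIUM),
        Finding("Stale admin role on reporting DB", Severity.HIGH),
    ]
    print(release_decision(open_findings))
```

Recording who accepted the risk on the finding itself also gives the audit trail a named decision-maker for every high-severity exception.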

Step 5: Build documentation that survives an audit, not just tests that pass

Passing tests is not the same as having audit evidence. A CI/CD log that shows a test passed tells you the test passed. It does not tell an auditor what was tested, under what conditions, with what data, against which regulatory requirement, by whom, and with what result.

The gap between these two things, test results and audit evidence, is where most compliance frameworks are weakest. And it's exactly where regulators and external auditors focus.
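The difference is easier to see with a concrete record. The sketch below wraps a test result in the context an auditor asks for: what was tested, where, with what data, against which requirement, by whom, and with what result. The field names and the `evidence_ref` pointer are assumptions, not a standard format.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def execution_record(
    test_id: str,
    regulatory_clauses: list[str],
    environment: str,
    test_data: str,
    executed_by: str,
    passed: bool,
    evidence_ref: str,
    executed_at: Optional[datetime] = None,
) -> str:
    """Build a structured, human-readable record for one test execution."""
    when = executed_at or datetime.now(timezone.utc)
    record = {
        "test_id": test_id,
        "regulatory_clauses": regulatory_clauses,
        "environment": environment,
        "test_data": test_data,
        "executed_by": executed_by,          # a role or named person
        "executed_at": when.isoformat(),     # timestamp with timezone
        "result": "pass" if passed else "fail",
        "evidence_ref": evidence_ref,        # where the raw output is stored
    }
    return json.dumps(record, indent=2)


if __name__ == "__main__":
    # Hypothetical values throughout.
    print(execution_record(
        test_id="CT-001",
        regulatory_clauses=["PCI DSS Req. 3", "GDPR Art. 32"],
        environment="staging (production-representative)",
        test_data="synthetic cardholder dataset v3",
        executed_by="QA engineer on duty",
        passed=True,
        evidence_ref="evidence/CT-001/run-output.log",
    ))
```

A CI job could emit one such record per compliance test alongside its normal log, so the evidence is produced at run time instead of being reconstructed before an audit.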

The four documents every compliance framework must maintain

Traceability matrix: the single most important document in your compliance testing program. Maps every regulatory requirement in scope to the test case(s) that validate it, the last execution date, the result, and the remediation record for any finding. An auditor who can follow a line from 'PCI DSS Requirement 8.4.2, MFA for all access into the CDE' to a specific test, to a specific execution record, to a specific finding that was remediated and verified, is satisfied. One who can't is not.

Test execution records: timestamped, role-identified records of who ran which test, when, against which environment, with which test data, and what the result was. Not just a CI log. A structured record that a non-engineer can read and interpret.

Finding and remediation log: every compliance test failure creates a finding record. Every finding record has a status (open, in remediation, closed), an assigned owner, a target resolution date, and a verification record when closed. Open findings without resolution dates are audit red flags.

Regulatory change log: tracks changes to applicable regulations, the date the change was identified, the test cases affected, and the date those test cases were updated. This is the evidence that your framework is actively maintained, not static.
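A traceability matrix is most useful when it can be checked mechanically for gaps. The following sketch flags requirements with no mapped test, tests that never ran, stale evidence, and failing latest runs. The data shapes are assumptions, and the 14-day freshness window is a placeholder loosely based on a weekly or bi-weekly deep run.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Execution:
    test_id: str
    run_on: date
    passed: bool


def traceability_gaps(
    requirements: dict[str, list[str]],   # requirement ID -> mapped test IDs
    executions: list[Execution],
    today: date,
    max_age_days: int = 14,               # assumed freshness window
) -> dict[str, str]:
    """Return requirement ID -> reason, for every requirement with a gap."""
    latest: dict[str, Execution] = {}
    for ex in executions:
        if ex.test_id not in latest or ex.run_on > latest[ex.test_id].run_on:
            latest[ex.test_id] = ex

    gaps = {}
    for requirement, test_ids in requirements.items():
        runs = [latest[t] for t in test_ids if t in latest]
        if not test_ids:
            gaps[requirement] = "no test case mapped"
        elif len(runs) < len(test_ids):
            gaps[requirement] = "mapped test never executed"
        elif any((today - r.run_on).days > max_age_days for r in runs):
            gaps[requirement] = "evidence is stale"
        elif not all(r.passed for r in runs):
            gaps[requirement] = "latest run failed"
    return gaps


if __name__ == "__main__":
    # Hypothetical requirement IDs, test IDs, and dates.
    matrix = {
        "PCI DSS:Req. 3": ["CT-001"],
        "GDPR:Art. 17": ["CT-002"],
        "GDPR:Art. 33": [],
    }
    runs = [
        Execution("CT-001", date(2025, 2, 27), passed=True),
        Execution("CT-002", date(2025, 1, 10), passed=True),
    ]
    print(traceability_gaps(matrix, runs, today=date(2025, 3, 1)))
```

Running a check like this on a schedule turns the matrix from a document prepared for auditors into an early warning for the team.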

Case study: European neobank, from manual evidence assembly to audit-ready in 90 days

Challenge: A European neobank with operations in four EU markets was preparing for its first PCI DSS assessment under v4.0 and a concurrent GDPR audit following a data subject complaint. The compliance team had been maintaining separate spreadsheets for each framework. Producing audit evidence required 8 to 12 days of cross-team coordination, and the first attempt produced four audit findings related to missing traceability: documentation gaps, not compliance gaps.

Solution: A structured compliance testing framework was implemented over 90 days: a unified regulatory obligation register mapping PCI DSS v4.0, GDPR, DORA, and PSD2 into a single traceability matrix; test cases reorganized by regulatory clause; a finding and remediation log replacing the previous ad hoc tracking; and automated SAST, DAST, and data handling checks integrated into the CI/CD pipeline.

Result: Audit evidence package preparation time fell from 10 days to under 4 hours. The PCI DSS assessment produced zero documentation findings, a first for the organization. The GDPR audit was completed with one medium-severity finding (a consent logging gap that was already identified and remediated before the audit began). The cross-framework mapping identified 14 test cases that previously ran separately under PCI DSS and GDPR but were validating identical controls; consolidating them reduced the total compliance test suite maintenance burden by 23%.

Step 6: Build a regulatory change management process, because your framework must evolve

A compliance testing framework built for the 2023 regulatory landscape is not a 2025 compliance testing framework. PCI DSS v4.0 added 50+ new requirements with a March 2025 enforcement deadline. DORA entered full force in January 2025. The UK Data (Use and Access) Act 2025 added new obligations for UK data operations in June 2025. The EU AI Act is now creating testing requirements for AI-driven financial decisions.

The organizations that handle this well don't scramble when regulations change. They have a process that absorbs change as a routine operational task.

What a regulatory change management process looks like

A designated owner monitors regulatory updates: either in-house compliance counsel or a subscribed regulatory monitoring service. This is not a shared responsibility. One person or team receives updates; they are responsible for the initial assessment.

A structured impact assessment runs for every significant change: which products are affected, which existing test cases need updating, which new test cases need creating, and what the enforcement timeline is. This assessment produces a change ticket that enters the QA backlog with a priority tied to the regulatory deadline.

Updated test cases are reviewed by both QA and compliance before being added to the framework. QA owns the technical testability; compliance owns the regulatory accuracy. Neither review alone is sufficient.

The regulatory change log is updated with the date of the change, what changed, which test cases were affected, and when the updates were completed. This is the evidence that your framework is actively maintained.
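The impact assessment step above can be partly mechanized once test cases are mapped to clauses. This sketch produces a change ticket listing affected tests, clauses with no existing coverage, and a priority derived from the deadline. The `ChangeTicket` shape and the 30 and 90 day thresholds are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ChangeTicket:
    change: str
    affected_tests: list[str]      # existing tests that need review
    uncovered_clauses: list[str]   # changed clauses with no test yet
    deadline: date
    priority: str


def assess_change(
    change: str,
    changed_clauses: list[str],
    test_clauses: dict[str, list[str]],   # test ID -> clauses it validates
    deadline: date,
    today: date,
) -> ChangeTicket:
    """Turn a regulatory change into a backlog ticket tied to its deadline."""
    changed = set(changed_clauses)
    affected = sorted(t for t, clauses in test_clauses.items()
                      if changed & set(clauses))
    covered = {c for clauses in test_clauses.values() for c in clauses}
    uncovered = sorted(changed - covered)

    days_left = (deadline - today).days
    # Assumed thresholds; tune them to your own delivery cadence.
    if days_left <= 30:
        priority = "urgent"
    elif days_left <= 90:
        priority = "high"
    else:
        priority = "normal"
    return ChangeTicket(change, affected, uncovered, deadline, priority)


if __name__ == "__main__":
    # Hypothetical test library and change.
    library = {
        "CT-001": ["PCI DSS:Req. 3", "GDPR:Art. 32"],
        "CT-002": ["GDPR:Art. 17"],
    }
    ticket = assess_change(
        "Updated erasure and transparency guidance",
        ["GDPR:Art. 17", "GDPR:Art. 22"],
        library,
        deadline=date(2025, 1, 20),
        today=date(2025, 1, 1),
    )
    print(ticket)
```

The same ticket fields map directly onto the regulatory change log, so completing the ticket also produces the log entry.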

The multi-framework efficiency principle in practice

When a new regulation enters your scope, the first question is not 'what new tests do we need?' It's 'what existing tests already partially or fully cover these requirements?'

A fintech that implements DORA operational resilience testing after already having PCI DSS incident response tests and GDPR breach notification tests will find significant existing coverage. The new DORA requirements add specific evidence formats and reporting obligations, but the underlying controls and tests may already exist. Documenting this mapping means new regulations create incremental work, not complete rebuilds.
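A rough sketch of that first question: if existing tests are indexed by the control they validate, a new regulation's requirements can be checked against the index before any new test is written. The requirement labels and control names below are hypothetical placeholders, not actual DORA clause references.

```python
def existing_coverage(
    new_requirements: dict[str, str],       # requirement -> control it needs
    tests_by_control: dict[str, list[str]], # control -> existing test IDs
) -> tuple[dict[str, list[str]], list[str]]:
    """Split new requirements into reusable coverage and genuine gaps."""
    reusable = {
        requirement: tests_by_control[control]
        for requirement, control in new_requirements.items()
        if tests_by_control.get(control)
    }
    gaps = sorted(r for r in new_requirements if r not in reusable)
    return reusable, gaps


if __name__ == "__main__":
    # Hypothetical index built from existing PCI DSS and GDPR tests.
    current_tests = {
        "incident-detection": ["CT-010"],
        "breach-notification": ["CT-011", "CT-012"],
    }
    # Hypothetical requirement labels for a newly in-scope regulation.
    incoming = {
        "NEW:incident-detection": "incident-detection",
        "NEW:incident-notification": "breach-notification",
        "NEW:failover-testing": "failover",
    }
    reusable, gaps = existing_coverage(incoming, current_tests)
    print("Already covered:", reusable)
    print("New tests needed:", gaps)
```

Reused tests may still need new evidence formats or reporting fields, so "covered" here means the control is tested, not that the requirement is fully satisfied.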

The cadence that works: quarterly regulatory review as a standing meeting between QA, security, and compliance leads. Immediate assessment triggered by any significant regulatory announcement affecting your product category. Annual framework audit to assess overall coverage, identify gaps that have accumulated, and review the full test case library for accuracy.

What a compliance testing framework looks like in practice: before and after

Here's a direct comparison of compliance testing with and without a structured framework. If more than three rows on the left look familiar, you have a coverage or documentation gap that's likely to surface in the next audit or regulatory review.

| Aspect | Without a framework | With a structured framework |
|---|---|---|
| When compliance testing runs | Pre-release, manually triggered under deadline pressure | Every build (automated) + scheduled deep-run cycles |
| How test cases are organized | By feature or sprint, tied to code, not regulation | By regulatory obligation with full traceability to the rule |
| Audit preparation time | Days of manual evidence assembly across multiple teams | Hours, from a maintained traceability matrix and finding log |
| Regulatory change response | Reactive, often discovered during or after an audit finding | Proactive, tracked, assessed, and integrated on a defined timeline |
| Ownership | Shared ambiguously across QA, security, and legal | Defined RACI with specific owners per regulatory domain |
| Finding management | Informal, tracked inconsistently in spreadsheets or Jira | Structured log with resolution tracking and verification records |
| CI/CD integration | None, or a single security scanner added late | SAST, DAST, SCA, and policy gates embedded in the build pipeline |

The 5 most common mistakes when building a fintech compliance testing framework

These aren't theoretical warnings. They're the patterns that appear consistently when compliance testing programs are audited, from early-stage fintechs to established financial institutions.

Mistake 1: Treating compliance testing as a separate track from functional testing

When compliance test cases live in a separate repository, maintained by a different team on a different schedule, gaps appear at every boundary. A developer who changes an authentication flow updates the functional tests. No one updates the compliance tests, because the developer doesn't know they exist or doesn't know they're affected.

The fix: compliance testing is functional testing with additional regulatory requirements. It belongs in the same pipeline, the same sprint, and the same backlog.

Mistake 2: Mapping tests to features instead of regulations

When a feature is deprecated, the test goes with it. When a regulation changes, you can't identify which tests need updating because there's no traceability from test to rule.

The fix: every test case maps to a regulatory clause. Features change; regulations persist. Let the regulations anchor your test library.

Mistake 3: Automating everything and testing nothing manually

Automated scans catch known vulnerability patterns and configuration errors. They don't catch business logic flaws, social engineering exposure, or the kind of architectural compliance gap that a QA engineer with regulatory literacy will spot in a code review.

The fix: build an explicit split between what belongs in the automated pipeline and what requires human judgment. Both are required. The automated layer is not a replacement for the specialist layer.

Mistake 4: Producing test results but not audit evidence

A CI/CD log showing a test passed is not evidence an auditor can use. Evidence requires context: what was tested, under what conditions, against which regulatory requirement, with what result, and what happened when something failed.

The fix: define the evidence format before you write the tests. Build test execution records and a traceability matrix from day one; retrofitting documentation after the fact is far more expensive.

Mistake 5: Building the framework once and not maintaining it

A compliance framework that was accurate when it was built, but hasn't been updated since the last regulatory change, is a liability. It creates confidence in coverage that doesn't exist.

The fix: assign a framework owner. Schedule the quarterly regulatory review. Treat the framework as a product with a maintenance backlog, not a project with a completion date.

Building your compliance testing framework: Where to start

The six steps above are sequenced deliberately. You can't integrate compliance tests into CI/CD until you know which tests to build. You can't build the right tests until you've mapped your obligations. You can't maintain the framework without defined ownership. Each step is a prerequisite for the next.

If you're starting from scratch, begin with Step 1, the regulatory obligation register. Three hours of cross-functional work to map your frameworks, identify overlaps, and assign owners will shape every testing and documentation decision that follows.

If you have an existing compliance testing program, start with a gap assessment against the framework architecture above. The most common gaps are: missing traceability between test cases and regulatory requirements, no regulatory change management process, and compliance test documentation that isn't audit-ready.

The regulatory environment isn't simplifying. DORA is in force. PCI DSS v4.0 is enforced. The EU AI Act is entering fintech scope. Building a structured compliance testing framework now, with the architecture above as the foundation, means each new regulation enters a system designed to absorb it. The alternative is a different set of emergency sprints for each regulatory deadline, indefinitely.

Want to audit your current compliance testing coverage or build a framework from the ground up? DeviQA's QA team works with fintech companies at every stage of compliance framework maturity, from initial regulatory mapping to full CI/CD integration and audit-ready documentation. Get in touch to discuss where you are and where you need to be.

About the author

Senior AQA engineer

Ievgen Ievdokymov is a Senior AQA Engineer at DeviQA, focused on building efficient, scalable testing processes for modern software products.