Written by: Ievgen Ievdokymov, Senior AQA Engineer

Posted: 15.05.2026

17 min read

When a shopping app crashes, users get frustrated. When a payment app fails mid-transfer, users lose money, and companies lose something harder to recover: trust.

That distinction is why test automation strategy in fintech isn't a standard engineering conversation. It's a risk management conversation dressed in QA terminology.

Most fintech QA teams fall into one of two traps. The first: automate everything in sight, then spend half the sprint maintaining brittle UI tests that break whenever a button moves. The second: lean on manual testing too heavily, only to discover regression failures after a release has already gone live.

Neither approach is sustainable. And neither reflects how high-performing fintech engineering teams actually operate.

This article lays out a test automation strategy for fintech: a practical framework for deciding what to automate, what to keep manual, and how to balance both to reduce risk, cut flakiness, and ship faster.

Why fintech application testing is a different beast

The stakes are categorically higher

In most software, a bug means a poor user experience. In fintech, a bug means a failed payment, an incorrect balance, a compliance violation, or a data breach. The downstream consequences aren't measured in support tickets; they're measured in regulatory fines, customer churn, and reputational damage that compounds over years.

Even minor failures carry outsized weight. A rounding error in an interest calculation might affect thousands of accounts simultaneously. A failed authentication flow during peak hours can lock out users at exactly the moment they need access. A payment gateway that misroutes a transaction, even once, erodes the trust that takes months of onboarding and marketing to build.

Three forces that make fintech QA uniquely complex

Velocity of change. Fintech teams ship frequently, often weekly or bi-weekly. Every release touches interconnected systems: payment flows, risk engines, compliance rules, third-party integrations. Testing everything manually after every deployment isn't just slow; it's structurally impossible at scale.

Regulatory pressure. KYC, AML, PCI DSS, GDPR, SOC 2: the compliance landscape is dense, jurisdiction-specific, and constantly updated. A regulation that changes in one market can require re-validation of flows that were considered stable. Testing for compliance isn't a one-time checklist; it's an ongoing obligation.

Integration complexity. Modern fintech applications don't live in isolation. They sit at the intersection of banking APIs, payment rails, identity verification services, credit bureaus, fraud detection systems, and mobile platforms. A failure at any integration point creates cascading problems that look, at first, like application bugs.

The cost of getting the balance wrong

Over-automation creates false confidence. A test suite with 1,200 automated cases feels comprehensive. But if 300 of them are flaky UI tests that fail intermittently regardless of code quality, engineers learn to ignore failures. The signal degrades. Real bugs start slipping through.

Under-automation creates velocity ceilings. A team running 600 manual regression cases per sprint before each release cannot ship weekly. They'll either slow down or start cutting coverage, both of which increase risk.

Pure automation has a blind spot: automated tests only catch what they're explicitly designed to detect. Edge cases, unexpected user paths, and untested interactions don't disappear; they accumulate in the gaps between scripts. That's why manual testing isn't something to eliminate. It's the layer that uncovers what automation can't anticipate.


The automation decision framework: Your QA compass

Before choosing tools or writing a single test script, every fintech QA team needs a consistent logic for making automation decisions. The criteria below aren't exhaustive, but they cover the vast majority of real-world scenarios.

Automate when:

The test is executed repeatedly across every build or deployment cycle

The logic is deterministic: the same inputs should always produce the same outputs

Speed and parallel coverage matter more than human interpretation

Human error in this area carries significant financial or compliance risk

The test scenario is stable and unlikely to require frequent rework

Keep manual when:

The scenario requires judgment, intuition, or interpretation of nuance

The UI or workflow is actively changing and specifications aren't final

Regulatory interpretation is ambiguous or subject to legal review

The test simulates complex real-world user behavior across multi-step flows

The feature is new and not yet worth investing automation time in

The practical shorthand: focus automation on repetitive, high-priority test cases (regression, API, and performance testing) to maximize ROI. Everything else deserves a deliberate case-by-case judgment.
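As a rough illustration, the criteria above could be encoded as a small triage function. This is only a sketch: `ScenarioProfile`, its fields, and the ordering of rules in `recommend` are hypothetical simplifications, not a prescribed scoring model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScenarioProfile:
    """Hypothetical description of a scenario being considered for automation."""
    name: str
    runs_every_build: bool      # executed on every build or deployment cycle
    deterministic: bool         # same inputs always produce the same outputs
    high_financial_risk: bool   # human error here carries financial or compliance risk
    stable_spec: bool           # unlikely to require frequent rework
    needs_human_judgment: bool  # nuance, UX, or ambiguous regulatory interpretation


def recommend(profile: ScenarioProfile) -> str:
    """Return 'manual', 'automate', or 'review' for one scenario."""
    # The manual criteria are checked first: judgment calls and moving
    # specifications make scripted checks brittle or meaningless.
    if profile.needs_human_judgment or not profile.stable_spec:
        return "manual"
    # Non-deterministic logic cannot be asserted reliably by a script.
    if not profile.deterministic:
        return "review"
    # Repetition or financial risk justifies the automation investment.
    if profile.runs_every_build or profile.high_financial_risk:
        return "automate"
    # Everything else gets a deliberate case-by-case decision.
    return "review"


fee_check = ScenarioProfile("fee calculation", True, True, True, True, False)
print(recommend(fee_check))  # automate
```

The "review" outcome is deliberate: anything the rules cannot classify cleanly goes back to a person rather than defaulting to automation.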

Pro tip: Treat automated testing for fintech apps as a force multiplier, not a replacement. The goal is to free your QA engineers to focus on the work only humans can do: exploration, judgment, and edge-case discovery.

What to automate in fintech: The high-ROI targets

Regression testing

If there's one automation investment every fintech team should prioritize, it's regression. Financial applications carry significant technical debt in the form of interdependencies: a change to a payment routing rule can silently break a balance calculation. A UI update can affect an API response format. A third-party SDK upgrade can alter token refresh behavior.

Running regression after every deployment, and blocking deployment on failure, is the single highest-leverage automation practice available. Teams that do this consistently catch the overwhelming majority of integration regressions before they reach users.

The key discipline: run regression tests after every deployment and update test cases as features evolve. Static regression suites become liabilities. A test written 18 months ago that validates a deprecated flow wastes execution time and creates false coverage metrics.

Integrate regression into your CI/CD pipeline so results are visible within minutes of a commit. Developers who get immediate feedback fix issues in context, before switching tasks, before the next standup, before the next sprint.

API testing

The API layer is the nervous system of fintech architecture. Payment processing, account verification, fraud checks, KYC workflows, identity lookups: virtually every critical fintech function lives in an API call. Automating this layer delivers exceptional return because it's fast, stable, and directly tests business logic without UI fragility.

A well-automated API test suite validates:

Correct responses across happy paths and error conditions

Proper handling of edge-case inputs (invalid amounts, unsupported currencies, expired tokens)

Latency within acceptable thresholds under normal load

Authentication and authorization behavior (what happens with a revoked token? An unauthorized scope?)

Contract stability: when a third-party provider updates their API, does your integration still behave correctly?

The sequencing matters: build strong API automation first, then add UI tests only for critical user journeys. This approach catches 80% of defects at a fraction of the maintenance cost of UI-first test suites.

Realistic example: A digital lending platform integrates with three credit bureau APIs. Automated contract tests run on every commit, validating that response schemas haven't changed and that the application handles each bureau's error codes correctly. When one bureau quietly deprecates a field in a sandbox release, the automated suite catches it before the change reaches production, saving a potential data mapping failure across thousands of loan applications.
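A contract check of the kind described in this example can be very small. The sketch below uses only the Python standard library. The field names in `EXPECTED_SCHEMA` and the helper `contract_violations` are hypothetical stand-ins, and a real suite would more likely rely on a dedicated schema or contract-testing tool.

```python
from typing import Any

# Hypothetical contract for one bureau's response: field name -> expected type.
EXPECTED_SCHEMA: dict[str, type] = {
    "applicant_id": str,
    "score": int,
    "report_date": str,
}


def contract_violations(payload: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """List every way the payload deviates from the expected schema."""
    problems = []
    for field, expected_type in schema.items():
        if field not in payload:
            # A silently dropped or deprecated field shows up here.
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            problems.append(
                f"wrong type for {field}: expected {expected_type.__name__}, "
                f"got {type(payload[field]).__name__}"
            )
    return problems


# Simulated sandbox response in which the bureau has dropped "score".
sandbox_response = {"applicant_id": "A-1001", "report_date": "2026-01-15"}
print(contract_violations(sandbox_response, EXPECTED_SCHEMA))
# ['missing field: score']
```

Run on every commit against each provider's sandbox, a non-empty result fails the build before the mapping problem reaches production data.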

Performance and load testing

Fintech applications face demand patterns unlike most software. Tax season, market volatility, promotions, and payment deadlines can drive transaction spikes up to 10x normal load. Manual approaches simply can't replicate that scale, which is why automated testing for fintech apps, particularly performance and load testing, is essential to simulate real-world stress and validate system behavior under pressure.

Automated performance testing validates:

Throughput under sustained load (transactions per second)

Latency degradation as concurrent users increase

System behavior at breaking points (what happens when the database connection pool is exhausted?)

Recovery time after a spike: how quickly does the system return to baseline?

Major payment platforms process tens of thousands of transactions per minute. Any manual approach to performance validation at that scale is theater, not testing.
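To make the throughput and latency measurements concrete, here is a minimal load-generation sketch built on the Python standard library. It is an illustration only: `run_load`, `timed_call`, and `summarize` are hypothetical helpers, the operation under test is a stub, and a real fintech load test would normally use a dedicated load-testing tool against a production-like environment.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import quantiles


def timed_call(operation) -> float:
    """Run one operation and return its latency in seconds."""
    start = time.perf_counter()
    operation()
    return time.perf_counter() - start


def summarize(latencies: list[float], wall_seconds: float) -> dict[str, float]:
    """Compute throughput and the 95th-percentile latency."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = quantiles(latencies, n=20)[-1]
    return {
        "throughput_per_sec": len(latencies) / wall_seconds,
        "p95_latency_sec": p95,
    }


def run_load(operation, total_requests: int, concurrency: int) -> dict[str, float]:
    """Issue total_requests calls across `concurrency` worker threads."""
    wall_start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(lambda _: timed_call(operation), range(total_requests)))
    wall_seconds = time.perf_counter() - wall_start
    return summarize(latencies, wall_seconds)


# Stub standing in for a payment API call (assumption: roughly 1 ms of work).
report = run_load(lambda: time.sleep(0.001), total_requests=200, concurrency=20)
print(report)
```

Repeating the run at increasing `concurrency` values and comparing the p95 figures gives a first view of how latency degrades as concurrent users increase.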

Security scanning (automated layer)

Automated security scanning isn't a substitute for penetration testing, but it's an essential complement. Static analysis tools and dynamic scanners can identify known vulnerability patterns (SQL injection vectors, insecure headers, hardcoded credentials, outdated dependencies with known CVEs) at a speed and scale no manual team can match.

Run security scans on every commit. This creates a consistent baseline and prevents broken or vulnerable builds from reaching QA or production environments. In regulated fintech environments, this also creates an audit trail that demonstrates continuous security posture monitoring, increasingly important for compliance certifications.

The key limitation to communicate clearly: automated scanners find what they're trained to find. They don't think. They don't chain vulnerabilities creatively. That's why they are one layer of a security program, not a replacement for the manual penetration testing covered later in this article.

Smoke and sanity tests

Fast, lightweight, and non-negotiable. Smoke tests answer a simple question: does the core system still function after this deployment?

Can a user log in? Can a payment be initiated? Does the dashboard render? Can a KYC document be submitted?

A well-designed smoke suite runs in under 10 minutes and gates every deployment. If login breaks, nothing else matters. Catching that in 8 minutes rather than after a live deployment prevents the worst-case scenario: real users encountering critical failures in production.
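A deployment gate along these lines can be sketched as a runner that executes a handful of checks and refuses the deployment on any failure or on exceeding a time budget. The names `run_smoke_suite` and `budget_seconds` are hypothetical, and the lambda checks are placeholders for real login, payment, and dashboard probes.

```python
import time
from typing import Callable


def run_smoke_suite(
    checks: dict[str, Callable[[], bool]], budget_seconds: float
) -> tuple[bool, list[str]]:
    """Run each check in order and return (deploy_allowed, failures)."""
    failures: list[str] = []
    started = time.monotonic()
    for name, check in checks.items():
        label = name
        try:
            passed = check()
        except Exception as exc:
            # A check that crashes is treated the same as a check that fails.
            passed = False
            label = f"{name} ({exc})"
        if not passed:
            failures.append(label)
        if time.monotonic() - started > budget_seconds:
            # A smoke suite that overruns its budget no longer gates quickly.
            failures.append("time budget exceeded")
            break
    return (not failures, failures)


# Placeholder checks; real ones would call the deployed system.
checks = {
    "login": lambda: True,
    "initiate_payment": lambda: True,
    "dashboard_renders": lambda: False,
}
allowed, failed = run_smoke_suite(checks, budget_seconds=600)
print(allowed, failed)  # False ['dashboard_renders']
```

In a pipeline, the boolean would typically be turned into a non-zero exit code so the deployment step never starts.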

Data validation and financial calculation logic

Financial calculations must be exact. Interest rates, FX conversions, fee calculations, tax withholding, loan amortization schedules: all of these require precision that human testers simply cannot validate at volume.

Automated data validation tests run thousands of combinations of inputs against expected outputs, catching edge cases that manual testing would never surface: what happens when a fee calculation rounds differently in one jurisdiction? What does the system return when a currency conversion rate is unavailable? What's the behavior when a loan calculation reaches the final decimal?

This is one area where the ROI of automated testing for fintech apps is almost instant: a one-time investment in parameterized calculation tests provides ongoing coverage across every release.
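A parameterized calculation test is essentially a table of inputs and expected outputs run through one function. The sketch below assumes a hypothetical `transfer_fee` rule (amount times rate, rounded to whole cents) and uses Python's `decimal` module so that rounding behavior is explicit. The rates and rounding modes are illustrative, not taken from any real fee schedule.

```python
from decimal import Decimal, ROUND_HALF_EVEN, ROUND_HALF_UP


def transfer_fee(amount: Decimal, rate: Decimal, rounding: str = ROUND_HALF_UP) -> Decimal:
    """Fee rounded to whole cents under the given rounding mode."""
    return (amount * rate).quantize(Decimal("0.01"), rounding=rounding)


# Each row is one test case: (amount, rate, rounding mode, expected fee).
CASES = [
    (Decimal("100.00"), Decimal("0.015"), ROUND_HALF_UP, Decimal("1.50")),
    (Decimal("0.01"), Decimal("0.015"), ROUND_HALF_UP, Decimal("0.00")),
    # The same input rounds differently under two jurisdictions' rules.
    (Decimal("1.00"), Decimal("0.025"), ROUND_HALF_UP, Decimal("0.03")),
    (Decimal("1.00"), Decimal("0.025"), ROUND_HALF_EVEN, Decimal("0.02")),
]


def failing_cases(cases):
    """Return every case whose computed fee differs from the expected fee."""
    return [case for case in cases if transfer_fee(*case[:3]) != case[3]]


print(failing_cases(CASES))  # []
```

Adding coverage is then a matter of appending rows, which is what keeps the ongoing cost low. The same table can be fed to a test runner's parameterization feature instead of the `failing_cases` helper.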


What to keep manual in fintech: where humans still win

Exploratory testing

Automated tests execute defined scenarios reliably. They don't discover unexpected behavior in undefined scenarios. Exploratory testing fills that gap: skilled testers use domain knowledge, curiosity, and pattern recognition to find failures that no one thought to write a test for.

In fintech, this matters enormously. Business logic is complex, often underdocumented, and frequently misunderstood even by the developers who wrote it. An experienced tester navigating a multi-currency transfer flow without a script might discover that an edge case in fee rounding produces incorrect displayed amounts, something no regression test covers because the scenario was never specified.

Dedicate specific testing cycles, not ad hoc time at the end of a sprint, to structured exploratory sessions. Define a charter (the area and goal, not the steps), time-box the session, and document findings rigorously. This isn't manual testing by default. It's manual testing by design.

Usability and UX testing

Can a first-time user complete a KYC onboarding flow without support intervention? Does the mobile app's transaction confirmation screen communicate enough information for someone making a high-stakes transfer? Is the error message for a failed payment clear enough to prevent a support call?

Automated tools can check that a button exists and is clickable. They cannot assess whether the experience is trustworthy, clear, or frustration-free for real users. Usability testing gathers feedback on how real customers interact with the interface, and in fintech, where user confidence is directly tied to business performance, that feedback is strategically important.

This extends to accessibility. Financial exclusion is a genuine social problem, and many regulatory frameworks now mandate accessibility compliance. Screen reader behavior, keyboard navigation, color contrast for users with visual impairments: these require human evaluation against real assistive technology, not just automated accessibility checkers.

Regulatory and compliance interpretation

Automated tools can verify that a required disclosure is displayed. They cannot determine whether the language used is legally sufficient in a new market, or whether a new AML directive changes the threshold logic in your transaction monitoring system in ways your existing test cases don't cover.

New regulatory requirements, whether from the FCA, FinCEN, EBA, or emerging frameworks like the EU AI Act's implications for algorithmic credit scoring, require legal, compliance, and QA professionals working together. A manual compliance walkthrough before entering a new market isn't a cost center. It's the cost of operating in that market legally.

Schedule dedicated compliance review sessions before major product launches and whenever the regulatory environment in key markets changes. These sessions should include compliance officers and legal counsel, not just QA engineers.

User acceptance testing (UAT)

UAT is the final validation that the product does what the business promised, not just what the specifications said. Business analysts, product owners, compliance officers, and key stakeholders must participate. Their domain knowledge surfaces requirements gaps that technical test cases don't reach.

A payment operations team running UAT on a new bulk disbursement feature might identify that the batch size limit, though technically functioning correctly, doesn't match the operational reality of how their largest enterprise clients actually submit payments. No automated test would surface that, because it's not a technical defect. It's a requirements misalignment that only users with business context can identify.

Penetration testing

Automated security scanners find known vulnerabilities. Penetration testers find the unknown ones. Ethical hackers think creatively: they chain vulnerabilities, exploit business logic, test social engineering attack surfaces, and simulate adversarial behavior that scripted tools never anticipate.

Fintech applications are high-value targets. A mobile banking application with a subtle business logic flaw in its transfer authorization flow is an attractive target precisely because the flaw looks correct to automated scanners. It takes a human attacker, or a skilled penetration tester, to find it.

Schedule full penetration tests at least quarterly, and always before a major product launch or significant architecture change. Treat pen test findings as architectural feedback, not just bug reports.

Building the hybrid strategy: Making automation and manual work together

The most effective strategies for automated testing for fintech apps aren't defined by a tool choice or a framework name. They're defined by a layered architecture where each testing type occupies the right position.

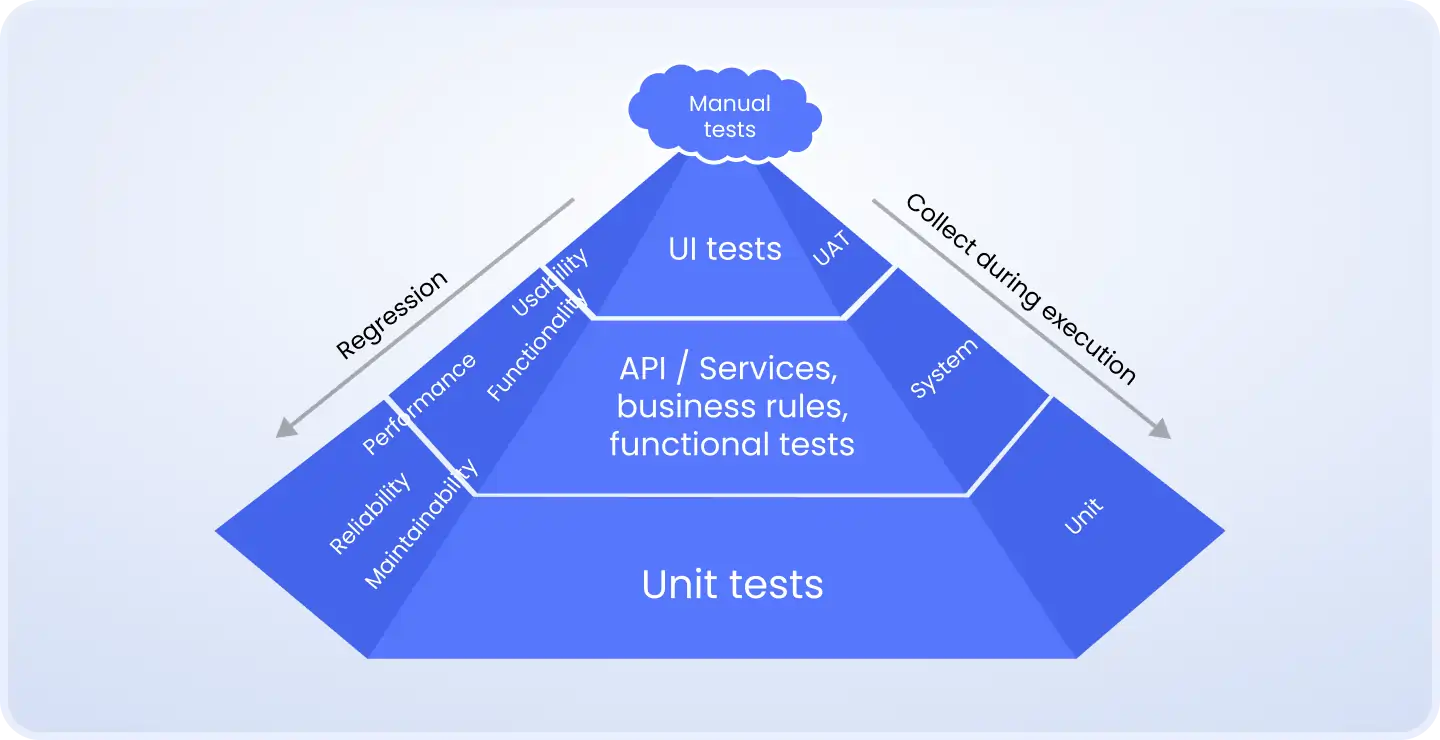

The test pyramid applied to fintech

The classic test pyramid translates well to financial applications, with one important modification: the compliance and security layers sit above the pyramid entirely and require both automated and manual coverage.

Unit tests (bottom layer): Fast, isolated, developer-owned. Validate individual functions, calculation logic, data transformations, input sanitization. Thousands of tests, millisecond execution. This is where business logic bugs should be caught.

API/integration tests (middle layer): Validate how components interact, services talking to each other, third-party integrations, database read/write operations. Slower than unit tests but far more stable than UI tests. This layer should be comprehensive.

UI/E2E tests (top layer): Selective automation for critical user journeys only (onboarding, payment submission, account verification). Manual testing for new features, edge cases, and anything with rapidly changing specifications.

Above the pyramid: Exploratory testing, UAT, compliance reviews, and penetration testing, all manual, all essential.

How to sequence your automation investment

For teams earlier in their automation maturity:

Start with your highest-frequency regression scenarios: the 20–30 flows that are tested after every single deployment

Automate your API layer comprehensively before building UI tests

Add smoke tests that gate every deployment in CI/CD

Layer in performance testing before your next anticipated traffic spike

Add calculation/data validation tests for any financial logic updated in the last 6 months

For teams with an existing automation suite:

Audit flaky tests: any test that fails intermittently without a code change is creating noise, not signal. Delete or fix it.

Review coverage against current business-critical flows: legacy suites often test deprecated functionality while missing new critical paths

Evaluate whether your automation is testing at the right layer: UI tests that could be API tests are a maintenance liability
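The flaky-test audit in this list can be partly automated from CI history. The sketch below flags any test that both passed and failed on the same commit, which matches the "fails intermittently without a code change" definition. The record shape `(test_name, commit_sha, passed)` and the function name `find_flaky_tests` are assumptions about how run history might be exported.

```python
from collections import defaultdict


def find_flaky_tests(runs: list[tuple[str, str, bool]]) -> list[str]:
    """Return tests that produced both a pass and a fail on the same commit.

    Each run is (test_name, commit_sha, passed).
    """
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    # Two distinct outcomes for identical code means the test is unstable.
    flaky = {test for (test, _sha), results in outcomes.items() if len(results) == 2}
    return sorted(flaky)


history = [
    ("test_login", "abc123", True),
    ("test_login", "abc123", True),
    ("test_transfer_ui", "abc123", True),
    ("test_transfer_ui", "abc123", False),  # same commit, different result
    ("test_fee_calc", "abc123", False),
    ("test_fee_calc", "def456", True),      # changed with the code: not flaky
]
print(find_flaky_tests(history))  # ['test_transfer_ui']
```

A test that fails consistently on one commit and passes on the next is excluded on purpose, since that pattern usually reflects a real defect and its fix.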

The cultural investment

Automation strategy is a technical problem with a people dimension. Building, maintaining, and evolving a test automation suite requires engineering skill that not every QA team has by default.

Ensure your team has the expertise to build, execute, and maintain automation frameworks effectively; training or hiring specialists may be necessary. A poorly maintained automation suite is worse than no suite at all: it creates false confidence while consuming engineering time.

Measuring whether your strategy is working

A test automation strategy that isn't measured is a strategy in name only. Track these metrics consistently across quarters:

Automation coverage %: What proportion of your regression suite is automated? Below 60% for a mature fintech product indicates meaningful risk. Above 90% without a thoughtful manual complement may indicate over-automation.

Defect escape rate: How many bugs reach production that automated testing should have caught? This is the most important lagging indicator of strategy effectiveness. A declining escape rate confirms your coverage is improving.

Mean time to detect (MTTD): How quickly after a commit does the automated suite surface a failure? Anything over 30 minutes significantly reduces the value of CI/CD integration.

Test execution time: Is your suite getting faster or slower over time? A suite that grows without pruning will eventually become too slow to run on every commit, at which point teams start running it less frequently.

False positive rate: What percentage of test failures require investigation before determining they're not real bugs? High false positive rates erode team trust in the suite, which leads to ignored failures, which leads to production incidents.

If these numbers improve quarter over quarter, your strategy is working. If they're stagnant or degrading, your automation is accumulating debt, not building value.
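Two of these metrics reduce to simple ratios. The sketch below shows one common way to compute them. The exact formulas are assumptions, since teams define these rates differently: here the escape rate is production defects divided by all defects found, and the false positive rate is failures with no underlying defect divided by all failures.

```python
def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of all known defects that reached production (0.0 to 1.0)."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0


def false_positive_rate(failures_without_defect: int, total_failures: int) -> float:
    """Share of test failures that turned out not to be real bugs (0.0 to 1.0)."""
    return failures_without_defect / total_failures if total_failures else 0.0


# Illustrative numbers only.
print(f"{defect_escape_rate(4, 36):.0%}")     # 10%
print(f"{false_positive_rate(35, 100):.0%}")  # 35%
```

Whatever definitions a team picks, the important part is keeping them fixed from quarter to quarter so the trend is comparable.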

Case study: Rebuilding a brittle test suite at a payments platform

Challenge: A mid-sized B2B payments platform had 800+ automated UI tests covering their core payment flows. The suite took 4.5 hours to run, had a 35% false positive rate, and blocked deployments daily due to environment flakiness rather than actual bugs. QA engineers spent more time investigating false positives than finding real defects.

Solution: Our QA team undertook a 6-week restructuring. We deleted 340 UI tests that duplicated functionality already covered at the API layer. We rebuilt the API test suite from scratch using a contract-testing approach, covering 95% of integration points. We retained UI automation only for the 12 highest-frequency user journeys. We added parameterized calculation tests covering 1,800+ financial edge cases. The rebuilt suite ran in 22 minutes.

Result: The false positive rate dropped from 35% to under 4%. The defect escape rate to production dropped by 60% over the following quarter. Deployment frequency increased from twice weekly to daily. QA engineers redirected their time toward exploratory testing of new features, and found 23 critical defects in the first two months that the old automated suite would never have surfaced.

Conclusion: Trust is built one test at a time

The right automation strategy for fintech isn't about tooling. It's about judgment: knowing which risks can be managed by deterministic scripts running at machine speed, and which risks still require a human brain operating with domain knowledge, curiosity, and regulatory awareness.

Automation is your force multiplier. Manual testing is your intelligence layer. Together, with the right sequencing and the right metrics, they form a QA strategy that doesn't just catch bugs; it builds the kind of reliability that compounds into competitive advantage.

Trust is not built in a single release. It's earned through every transaction, every login, and every new feature that performs exactly as expected, every single time.

Your testing strategy is either building that trust or quietly eroding it. Now you have the framework to make sure it's doing the former.

Next steps:

Audit your current automation suite against the automation decision framework described above.

Identify your top 5 critical user journeys and verify they have both automated regression coverage and regular manual exploratory sessions.

Measure your defect escape rate over the last 3 months. If you don't have that number, that's the first gap to close.

Not sure where your current automation strategy has gaps? The QA specialists at DeviQA have helped fintech teams restructure brittle test suites, build API-first automation frameworks, and reduce defect escape rates, without a full team rebuild. Talk to us.


About the author

Senior AQA engineer

Ievgen Ievdokymov is a Senior AQA Engineer at DeviQA, focused on building efficient, scalable testing processes for modern software products.