Written by: Dmitry Reznik, Chief Technology Officer

Posted: 02.04.2026

16 min read

Since you’re here, here is a quick primer on what likely interests you.

The Digital Operational Resilience Act (DORA) is a North Star for financial entities in the European Union. It has applied since 17 January 2025, so for over a year it has governed how the industry adheres to technical and operational resilience standards.

In 2026, most fintech teams have two relatively new challenges:

1. Documents → real actions (read: software tests): Almost everything starts with policies. But new laws demand more: they want you to produce test logs, the results of regular disaster recovery exercises, and failover metrics. Without this evidence, passing a regulatory audit becomes extremely difficult. Penalties vary depending on the severity of non-compliance.

2. Independent third-party vendors → comprehensive partnerships: DORA keeps financial entities accountable for the ICT risks their vendors introduce, including outages and security failures. Did your external vendor make a mistake in their security protocols? Your business can suffer cascading system failures and regulatory fines because of your partner’s misstep.

Moreover, fragmented execution is easy to spot, since the European Supervisory Authorities (ESAs) and national competent authorities coordinate their oversight.

In this blog, we explain what the DORA regulation is and translate its requirements into actionable engineering and QA takeaways.

Let’s start with the most logical step: a summary of the DORA regulation.

What the DORA regulation is (and what it is not)

Before DORA (the Digital Operational Resilience Act), European financial entities had to piece together ICT risk expectations across different member states. Regulatory demands varied widely by country, and cross-border operations were difficult to manage (and to audit).

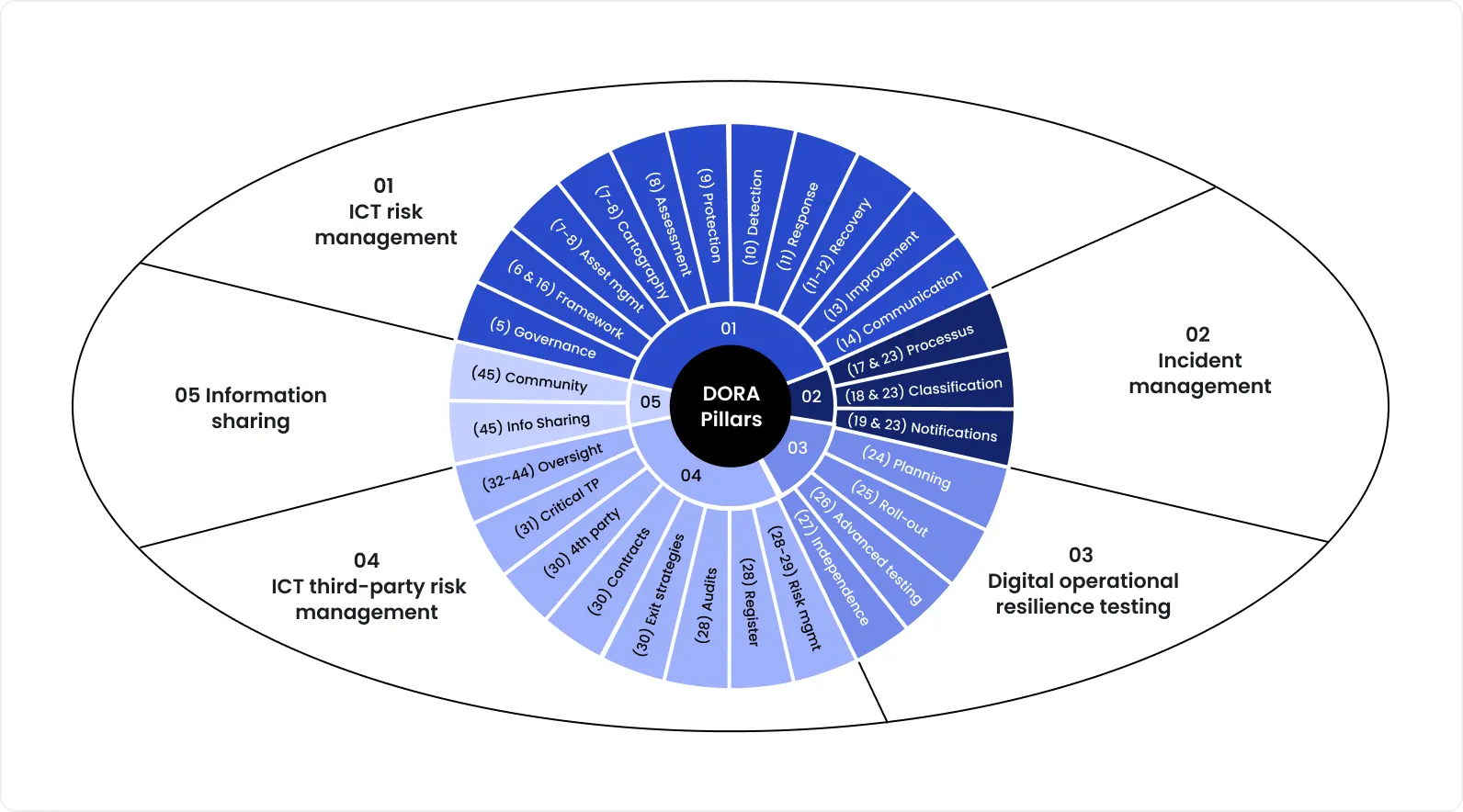

Regulation (EU) 2022/2554 covers five connected pillars:

ICT risk management

ICT-related incident reporting

Digital operational resilience testing

ICT third-party risk management

Information-sharing arrangements

Many sources still describe the Act as a cybersecurity regulation. That framing is imprecise and may lead to failed audits. In practice, the Act demands:

Governance and control: The management body bears ultimate responsibility for ICT risk management and its execution.

Business continuity planning: You need tested failover strategies backed by data.

Resilience testing: A digital operational resilience testing programme is mandatory, and entities designated by their competent authorities must also run threat-led penetration testing (TLPT).

Third-party vendor oversight: As a financial entity, you remain fully accountable for risks introduced by your ICT third-party providers.

Who must comply

The full scope is detailed in Article 2 of the Regulation. ICT third-party service providers designated as critical (CTPPs), which can include cloud infrastructure providers, data analytics platforms, and SaaS vendors, now face direct oversight by a Lead Overseer appointed from among the ESAs (EBA, EIOPA, or ESMA).

Here are the main financial entities in scope:

credit institutions

payment institutions and electronic money institutions

investment firms

insurance and reinsurance undertakings

crypto-asset service providers (where applicable under EU financial regulation)

central counterparties, trading venues, and other market infrastructure entities

If your company builds software or provides IT services to a regulated European financial firm, you operate inside their supply chain. Chances are, your upcoming vendor contracts will include DORA requirements, annual audit cycles, and procurement requests.

If you can’t provide test execution logs, incident response metrics, or disaster recovery results, you are unlikely to win new deals and may even see existing partnerships terminated.

The 5 pillars of DORA

According to PwC, more than 22,000 financial entities and ICT service providers must comply with the regulation. Non-compliance can bring fines of up to EUR 5M or 3-12.5% of annual turnover for serious breaches. For comparison, GDPR fines reach up to EUR 20M or 4% of worldwide annual turnover, whichever is higher.

Figures like these alone justify the compliance effort.

Pillar 1. ICT risk management

What DORA expects

Your go-to guidance here is Articles 5-16. In a nutshell, they cover governance of technology risk: a precise asset inventory, change and release controls, and backup and restore capabilities.

What teams must implement

A complete inventory of all internal and external information assets

Change management protocols to block unauthorized code deployments

Scheduled and automated backups

Continuous monitoring tools to detect system anomalies
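A complete inventory only helps if every asset has an owner, a grade, and a link to the functions it supports. Below is a minimal sketch of an automated completeness check. It assumes a simple in-house inventory. The `Asset` fields, the grading scale, and `find_inventory_gaps` are hypothetical and do not represent a schema that DORA prescribes.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical grading scale; align it with your own criticality model.
VALID_GRADES = {"critical", "important", "standard"}


@dataclass
class Asset:
    """One entry in a simplified, illustrative ICT asset inventory."""
    asset_id: str
    owner: Optional[str] = None
    criticality: Optional[str] = None
    supports_functions: list[str] = field(default_factory=list)


def find_inventory_gaps(assets: list[Asset]) -> dict[str, list[str]]:
    """Group asset IDs by the kind of inventory gap they show."""
    gaps: dict[str, list[str]] = {"no_owner": [], "ungraded": [], "unmapped": []}
    for asset in assets:
        if not asset.owner:
            gaps["no_owner"].append(asset.asset_id)
        if asset.criticality not in VALID_GRADES:
            gaps["ungraded"].append(asset.asset_id)
        if not asset.supports_functions:
            gaps["unmapped"].append(asset.asset_id)
    return gaps


inventory = [
    Asset("db-payments", owner="alice", criticality="critical", supports_functions=["payments"]),
    Asset("legacy-ftp"),
]
print(find_inventory_gaps(inventory))
# {'no_owner': ['legacy-ftp'], 'ungraded': ['legacy-ftp'], 'unmapped': ['legacy-ftp']}
```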

What to test

Run a data recovery plan to check backup integrity and restoration speed

Trigger simulated system spikes to validate monitoring alerts

Review release pipelines to confirm that change controls function correctly
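The restore check in the first bullet can be automated along these lines. This sketch assumes two things: you can capture the backed-up data as byte chunks, and you can call your restore job from code. `run_restore_check` and the `restore` callable are hypothetical stand-ins for your own tooling. The check compares SHA-256 digests taken before and after the restore, and it times the restore itself.

```python
import hashlib
import time
from typing import Callable


def sha256_of(chunks: list[bytes]) -> str:
    """Digest of the chunks concatenated in order."""
    digest = hashlib.sha256()
    for chunk in chunks:
        digest.update(chunk)
    return digest.hexdigest()


def run_restore_check(source: list[bytes], restore: Callable[[], list[bytes]]) -> dict:
    """Time a restore and compare its checksum with the pre-backup checksum."""
    expected = sha256_of(source)
    started = time.monotonic()
    restored = restore()  # stand-in for the real restore job
    elapsed_s = time.monotonic() - started
    actual = sha256_of(restored)
    return {
        "integrity_ok": expected == actual,
        "restore_seconds": round(elapsed_s, 3),
        "expected_sha256": expected,
        "actual_sha256": actual,
    }


backup = [b"row-1", b"row-2"]
# A real restore would call your backup tooling; this lambda just returns a copy.
report = run_restore_check(backup, lambda: list(backup))
print(report["integrity_ok"])  # True
```

If you store the returned dictionary alongside the run date, you have a ready-made restore test execution result.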

Evidence artifacts

An updated risk register

A complete asset inventory map

Access review logs

Restore test execution results

Pillar 2. ICT-related incident management and reporting

What DORA expects

DORA (Articles 17-23) defines how financial entities must classify and report ICT-related incidents: timelines, report content, and templates. Major incidents are reported directly to competent authorities, following technical standards developed by the European Supervisory Authorities.

What teams must implement

Automated incident detection and response mechanisms

The workflow should include the following stages: detect → triage → classify → internal comms → regulatory reports → post-incident review
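One way to make this workflow auditable is to enforce the stage order in code and timestamp each transition. The sketch below is a hypothetical, strictly linear model. In practice, incidents not classified as major would skip the regulatory reporting stage, and this simplified version does not handle that case.

```python
from datetime import datetime, timezone
from typing import Optional

# Stage order mirrors the workflow above; the identifiers are illustrative.
STAGES = [
    "detect",
    "triage",
    "classify",
    "internal_comms",
    "regulatory_report",
    "post_incident_review",
]


class IncidentWorkflow:
    """Enforces stage order and timestamps every transition as evidence."""

    def __init__(self, incident_id: str):
        self.incident_id = incident_id
        self.history: list[tuple[str, datetime]] = []

    @property
    def current(self) -> Optional[str]:
        return self.history[-1][0] if self.history else None

    def advance(self, stage: str, at: Optional[datetime] = None) -> None:
        """Move to the next stage; refuse skipped or out-of-order stages."""
        step = len(self.history)
        expected = STAGES[step] if step < len(STAGES) else None
        if stage != expected:
            raise ValueError(f"{self.incident_id}: expected {expected!r}, got {stage!r}")
        self.history.append((stage, at or datetime.now(timezone.utc)))


wf = IncidentWorkflow("INC-001")
for stage in ("detect", "triage", "classify"):
    wf.advance(stage)
print(wf.current)  # classify
```

The `history` list doubles as the escalation timeline record that auditors ask for.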

What to test

How fast you can detect and classify a simulated critical failure

Whether your automated report generation matches the required ITS templates

Whether senior management is alerted promptly when an issue occurs

Evidence artifacts

Incident classification logs

Completed regulatory reporting templates (using mock drill data or historical incidents)

Post-incident review documentation detailing root causes

Pillar 3. Digital operational resilience testing

What DORA expects

A testing programme proportionate to your size, risk, and criticality profile: routine control testing, scenario-based testing, and advanced threat-led penetration testing (TLPT). You can dive deeper into mandatory testing programmes in Articles 24-27.

What teams must implement

Automate routine QA and security regression tests in the CI/CD pipeline

Schedule scenario-based disaster recovery exercises

Advanced checks, for example TLPT for designated entities (you can engage certified external red teams for that)

What to test

Measure how quickly systems recover and how well defenses hold up against mock attacks. Track every defect discovered during these tests and measure remediation speed.
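Remediation speed is easy to compute once every defect records when it was found and when it was closed. Here is a minimal sketch. It assumes a hypothetical `Defect` record exported from your tracker.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass
class Defect:
    defect_id: str
    found_on: date
    closed_on: Optional[date] = None  # None while remediation is still open


def remediation_summary(defects: list[Defect]) -> dict:
    """Open defects plus mean and worst-case days to close for closed ones."""
    closed_days = [(d.closed_on - d.found_on).days for d in defects if d.closed_on]
    return {
        "open": [d.defect_id for d in defects if d.closed_on is None],
        "mean_days_to_close": mean(closed_days) if closed_days else None,
        "max_days_to_close": max(closed_days) if closed_days else None,
    }


defects = [
    Defect("DEF-1", date(2026, 1, 5), date(2026, 1, 9)),
    Defect("DEF-2", date(2026, 1, 6), date(2026, 1, 20)),
    Defect("DEF-3", date(2026, 2, 1)),
]
summary = remediation_summary(defects)
print(summary["open"])  # ['DEF-3']
```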

Evidence artifacts

Logs detailing specific defects found during resilience tests

Remediation tracking boards showing ticket closures

Re-test proof confirming the identified vulnerability is securely closed

Pillar 4. Third-party ICT risk management

What DORA expects

Get on the same page with your vendors in every respect: mapping which critical functions they support, enforcing specific contract clauses, monitoring performance on an ongoing basis, and planning crisis strategies. Learn more about vendor oversight in Articles 28-44.

What teams must implement

Determine the most critical business functions, tie them to your software, and grade them

Categorize the entire software supply chain based on that grading

Include mandatory audit rights in all vendor contracts, and renegotiate existing ones if needed

Ensure exit strategies to migrate away from a failing vendor
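The grading step can be expressed as a simple rule: a vendor inherits the highest grade of any business function it supports. That rule, the three-level scale, and the names below are illustrative assumptions, not DORA terminology. DORA itself speaks of "critical or important functions".

```python
# Illustrative grading scale: a higher number means more critical.
GRADE_RANK = {"standard": 1, "important": 2, "critical": 3}


def tier_vendors(function_grades: dict[str, str],
                 vendor_functions: dict[str, list[str]]) -> dict[str, str]:
    """Give each vendor the highest grade among the business functions it supports."""
    tiers: dict[str, str] = {}
    for vendor, functions in vendor_functions.items():
        grades = [function_grades[f] for f in functions if f in function_grades]
        # Vendors not linked to any graded function are flagged for review.
        tiers[vendor] = max(grades, key=lambda g: GRADE_RANK[g]) if grades else "unmapped"
    return tiers


functions = {"payments": "critical", "reporting": "important", "marketing": "standard"}
vendors = {"cloud-host": ["payments", "reporting"], "email-tool": ["marketing"], "new-saas": []}
print(tier_vendors(functions, vendors))
# {'cloud-host': 'critical', 'email-tool': 'standard', 'new-saas': 'unmapped'}
```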

What to test

Audit vendor incident response times through joint exercises

Simulate a critical vendor outage to test the technical feasibility of your exit plan

Validate vendor participation in your internal resilience tests

Evidence artifacts

SOC 2 reports or equivalent vendor security certifications

Signed SLAs and incident notification agreements

Executed audit rights clauses within active vendor contracts

Proof of vendor participation in operational resilience testing

Pillar 5. Information sharing

What DORA expects

Contributing to a healthy financial sector by exchanging cyber threat information and intelligence with other financial entities (Article 45). These arrangements are voluntary, but standardized information strengthens collective resilience across the sector.

What teams must implement

Secure channels to receive and share anonymized threat intelligence

External threat data feeds integrated directly into internal security monitoring tools

What to test

Whether ingested threat feeds correctly trigger internal security alerts

Internal procedure for stripping personal data and anonymizing threat metrics
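Both test items can be scripted. The sketch below makes two assumptions: a hypothetical feed format of typed indicators (IP addresses and domains), and a hypothetical field allowlist for outgoing records. `match_events`, `prepare_for_sharing`, and the field names are illustrative. The email regex covers only one kind of personal data. Names, phone numbers, and account identifiers would need their own rules.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    kind: str    # "ip" or "domain" in this sketch
    value: str
    source: str  # label of the sharing arrangement that supplied it


def match_events(indicators: list[Indicator], events: list[dict]) -> list[dict]:
    """Raise one alert for every event field that matches an ingested indicator."""
    lookup = {(i.kind, i.value.lower()): i for i in indicators}
    alerts = []
    for event in events:
        for kind in ("ip", "domain"):
            hit = lookup.get((kind, str(event.get(kind, "")).lower()))
            if hit is not None:
                alerts.append({"event_id": event["id"], "indicator": hit.value, "source": hit.source})
    return alerts


# Hypothetical allowlist: only these fields may leave the organisation.
SHAREABLE_FIELDS = {"indicator_type", "indicator_value", "first_seen", "description"}
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def prepare_for_sharing(record: dict) -> dict:
    """Drop non-allowlisted fields and redact email addresses in free text."""
    shared = {k: v for k, v in record.items() if k in SHAREABLE_FIELDS}
    if isinstance(shared.get("description"), str):
        shared["description"] = EMAIL_RE.sub("[REDACTED_EMAIL]", shared["description"])
    return shared


feed = [Indicator("ip", "203.0.113.7", "sector-feed"), Indicator("domain", "bad.example", "sector-feed")]
events = [{"id": 1, "ip": "203.0.113.7"}, {"id": 2, "domain": "BAD.example"}, {"id": 3, "ip": "198.51.100.1"}]
print([a["event_id"] for a in match_events(feed, events)])  # [1, 2]
```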

Evidence artifacts

Information-sharing policy

Membership documentation

Threat intelligence intake records

Action logs showing how shared intelligence improved controls

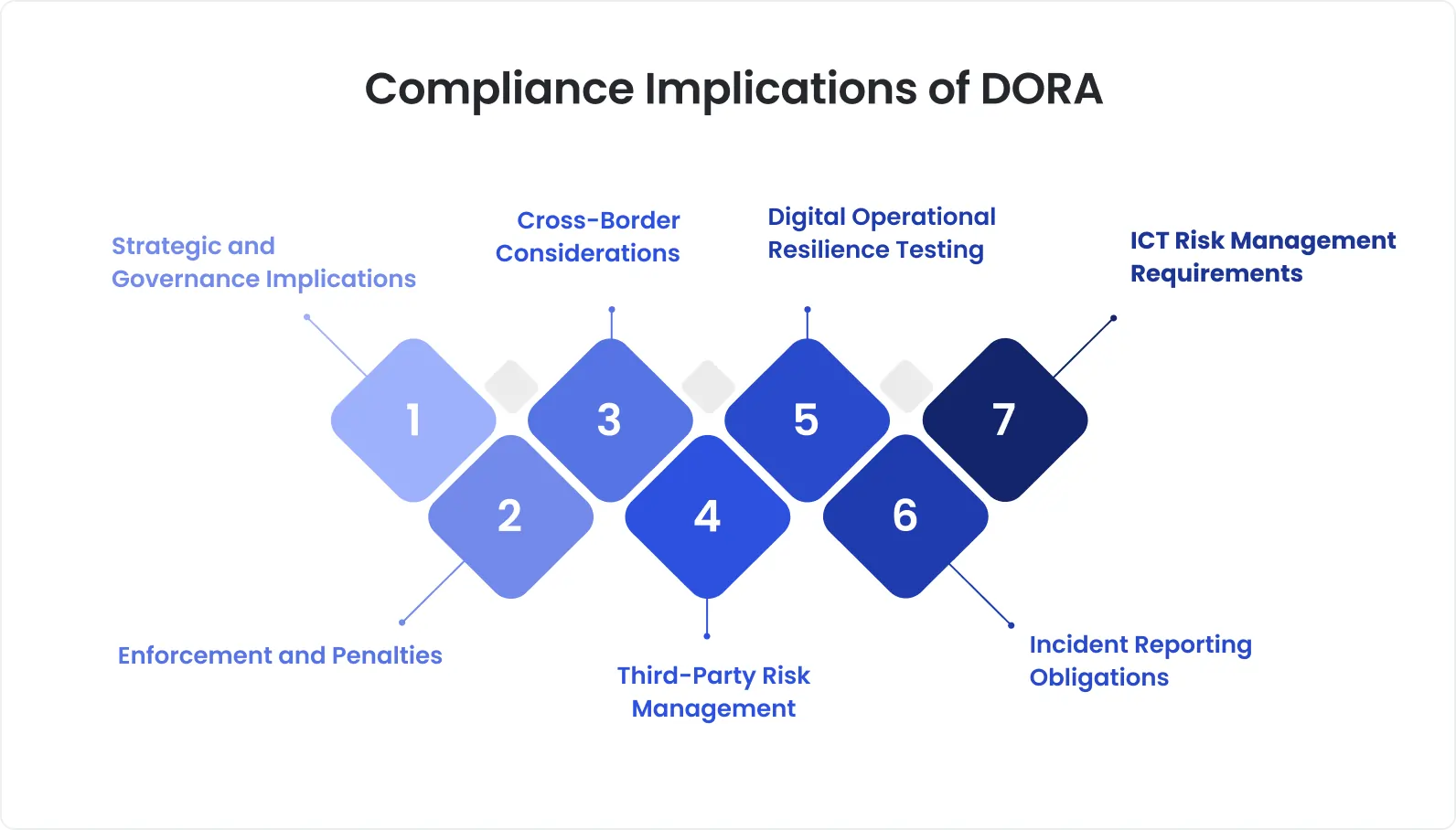

DORA “Level 1 vs Level 2”

Complying with the regulation text alone isn’t enough if you want to pass the audit with confidence. Level 1 is the DORA regulation itself. It contains the core principles and obligations:

ICT risk management requirements

Incident reporting duties

Digital operational resilience testing

Third-party ICT risk management

Information-sharing conditions

Basically, it defines what must exist and who is accountable for it. But that isn’t all there is to DORA.

Level 2 goes further: the Regulatory Technical Standards (RTS) and Implementing Technical Standards (ITS). These documents explain exactly how to execute the rules and what evidence auditors will request:

Incident classification thresholds and reporting templates

Timing requirements for initial, intermediate, and final reports

Criteria for threat-led penetration testing (TLPT)

Third-party oversight expectations
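Reporting clocks are a natural candidate for automation. The constants below reflect our reading of the RTS time limits:

- initial notification within 4 hours of classifying an incident as major, and no later than 24 hours after becoming aware of it;
- intermediate report within 72 hours of the initial notification;
- final report within one month of the intermediate report.

Treat these values as assumptions to verify against the current Level 2 text. The helper names are hypothetical, and "one month" is approximated as 30 days.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Assumed time limits (our reading of the RTS); verify before relying on them.
INITIAL_AFTER_CLASSIFICATION = timedelta(hours=4)
INITIAL_AFTER_AWARENESS = timedelta(hours=24)
INTERMEDIATE_AFTER_INITIAL = timedelta(hours=72)
FINAL_AFTER_INTERMEDIATE = timedelta(days=30)  # approximates "one month"


def initial_deadline(aware_at: datetime, classified_at: datetime) -> datetime:
    """Earlier of: 4h after classification, 24h after becoming aware."""
    return min(classified_at + INITIAL_AFTER_CLASSIFICATION,
               aware_at + INITIAL_AFTER_AWARENESS)


def report_deadlines(aware_at: datetime, classified_at: datetime,
                     initial_sent_at: Optional[datetime] = None,
                     intermediate_sent_at: Optional[datetime] = None) -> dict:
    """Deadlines known so far; later ones depend on when earlier reports were sent."""
    deadlines = {"initial": initial_deadline(aware_at, classified_at)}
    if initial_sent_at:
        deadlines["intermediate"] = initial_sent_at + INTERMEDIATE_AFTER_INITIAL
    if intermediate_sent_at:
        deadlines["final"] = intermediate_sent_at + FINAL_AFTER_INTERMEDIATE
    return deadlines


aware = datetime(2026, 3, 2, 8, 0, tzinfo=timezone.utc)
classified = datetime(2026, 3, 2, 10, 0, tzinfo=timezone.utc)
print(initial_deadline(aware, classified))  # 2026-03-02 14:00:00+00:00
```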

Level 1 tells you what, and Level 2 details how exactly. Let’s now break down the preparation roadmap.

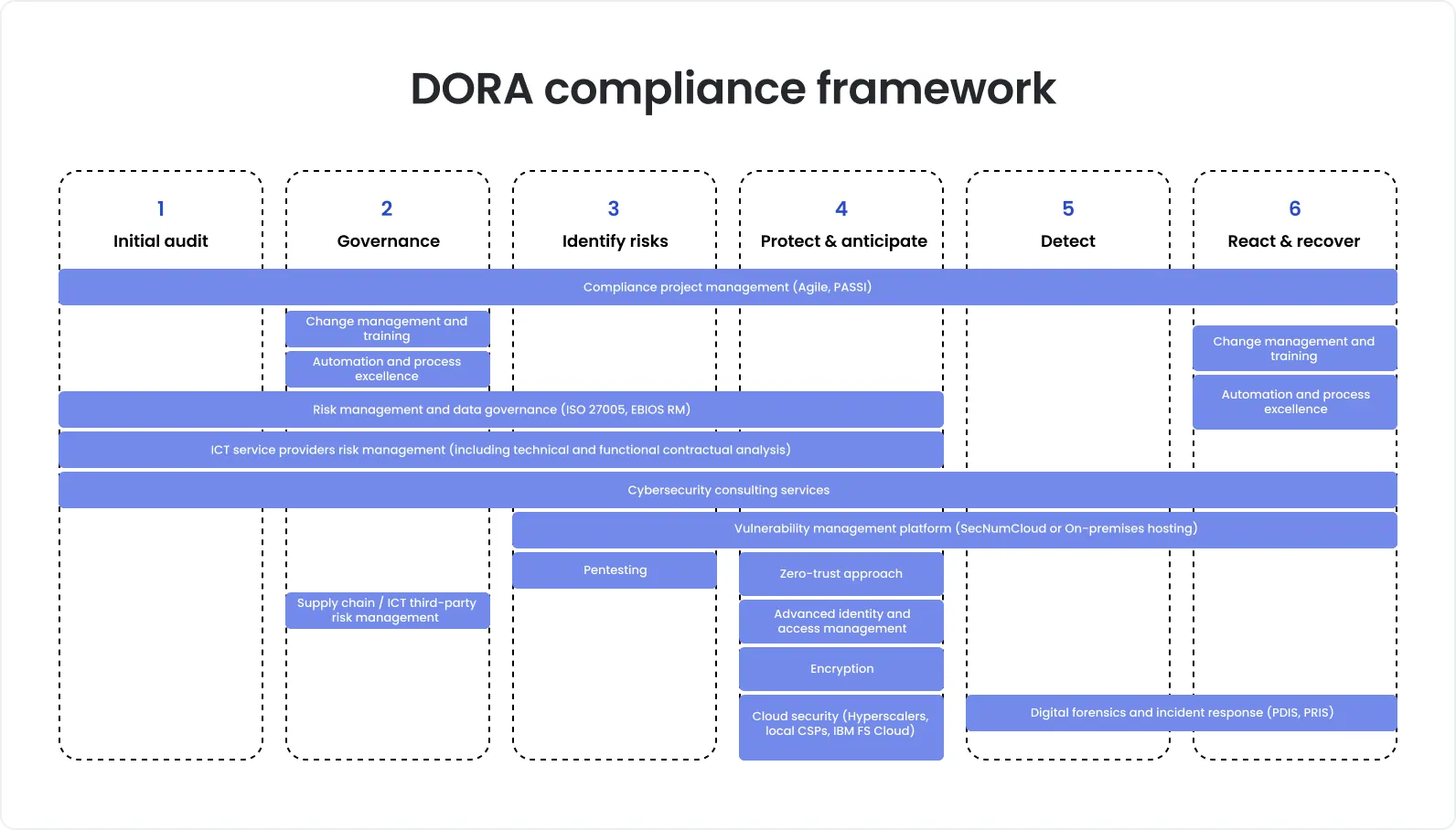

An implementation roadmap for the DORA European regulation

The initial setup may take around six months or more, followed by continuous operational effort for as long as the regulation applies. Use the time estimates below as targets, but always factor in your team’s capacity and add a buffer just in case.

Phase 1: Baseline and gap assessment (2 to 4 weeks)

Objective: To understand your current operational capacity and evidence maturity and compare them with regulatory standards.

What to do:

Identify critical functions

Internally audit systems, data flows, and dependencies for those functions

List ICT third-party providers linked to them (we’d suggest an internal database to ease tracking and management)

Assess all of the above against the five pillars

What you get as a result:

Critical services register

System and vendor inventory with ownership

Control maturity assessment

Evidence gap log

A common situation: teams have controls, but they tested them only once, at the very beginning.
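One simple way to build the evidence gap log is to record when each control was last tested and how often it should be re-tested. The sketch below flags controls that were never tested or are overdue. The `Control` record, the control IDs, and the cadence values are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Control:
    control_id: str
    cadence_days: int                  # how often the control should be re-tested
    last_tested: Optional[date] = None


def evidence_gaps(controls: list[Control], today: date) -> list[tuple[str, str]]:
    """List controls that were never tested or whose last test is overdue."""
    gaps = []
    for c in controls:
        if c.last_tested is None:
            gaps.append((c.control_id, "never tested"))
        elif today - c.last_tested > timedelta(days=c.cadence_days):
            overdue = (today - c.last_tested).days - c.cadence_days
            gaps.append((c.control_id, f"overdue by {overdue} days"))
    return gaps


controls = [
    Control("BCK-01", 90, date(2026, 1, 1)),
    Control("INC-02", 365),
    Control("ACC-03", 90, date(2025, 6, 1)),
]
print(evidence_gaps(controls, date(2026, 3, 1)))
# [('INC-02', 'never tested'), ('ACC-03', 'overdue by 183 days')]
```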

Phase 2: Remediation plan and governance (1 to 3 months)

Objective: To define who is accountable for each subsequent phase and who owns your engineering backlog.

What to do:

Choose executive and operational owners per pillar

Align the incident classification matrix with RTS thresholds

Design a structured incident reporting playbook

Define an annual resilience testing program

Align vendor contracts with DORA requirements

Ensure you have a board-level reporting format for ICT risk

What you get as a result:

Approved remediation roadmap

Incident reporting operating model

Testing calendar

Vendor classification matrix

Updated governance charter

Clarity at this stage makes most of the later control work far easier.

Phase 3: Make it a routine (3 to 6 months)

Objective: To embed all previous preparation into day-to-day routines: code, environments, testing and response training, performance validation, etc.

What to do:

Introduce or strengthen change and access controls

Run backup restoration tests against defined RTO/RPO targets

Conduct resilience exercises. Target different crisis scenarios, not just the most likely ones

Conduct live operational resilience tests, including disaster recovery and network stress tests

Consolidate vendor monitoring into dashboards (for clarity, visibility, and automated alerts in one place)

Build a repeatable evidence-collection pipeline that auto-generates test execution logs and incident response metrics
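An evidence-collection pipeline can start as small as an append-only log written by each CI job. This sketch uses a hypothetical `record_evidence` helper and a JSON Lines file. It stamps every result with a UTC timestamp and a SHA-256 digest of the raw log, so you can later show that a stored log matches what was recorded.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def record_evidence(repo: Path, control_id: str, outcome: str, raw_log: str) -> dict:
    """Append a timestamped, hash-stamped evidence entry to a JSON Lines file."""
    entry = {
        "control_id": control_id,
        "outcome": outcome,  # e.g. "pass" or "fail"
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "log_sha256": hashlib.sha256(raw_log.encode("utf-8")).hexdigest(),
    }
    with repo.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry
```

In practice, a CI step would call `record_evidence(Path("evidence.jsonl"), "BCK-01", "pass", log_text)` after each scheduled drill and archive the raw log next to it.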

The focus:

Your ability to restore a critical service within the defined recovery objective

Whether you can create a complete incident report (or at least a draft with placeholders) within regulatory timelines

Whether and how you track vendor SLA performance

What you get as a result:

Control test reports

Drill results with remediation tracking

Vendor monitoring logs

Structured evidence repository

At this point, documentation reflects tested reality, which is what makes an audit go smoothly.

Phase 4: Continuous testing and robust evidence (ongoing)

Objective: Maintain everything you managed to achieve by this phase, review all of this constantly, and fine-tune if needed.

Typical Key Performance/Risk Indicators:

Backup restore success rate

RTO/RPO achievement rate

Incident mean time to detect (MTTD)

Incident mean time to resolve (MTTR)

Control pass rate in periodic testing

Percentage of critical vendors reviewed annually
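Several of these indicators can be computed directly from incident and restore-test records. Here is a minimal sketch with hypothetical record types. It assumes at least one record of each kind. It also measures MTTR from detection to resolution, which is one of several common definitions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    started: datetime
    detected: datetime
    resolved: datetime


@dataclass
class RestoreTest:
    succeeded: bool
    duration: timedelta
    rto: timedelta


def _mean(deltas: list[timedelta]) -> timedelta:
    return sum(deltas, timedelta()) / len(deltas)


def resilience_kpis(incidents: list[Incident], restores: list[RestoreTest]) -> dict:
    return {
        "mttd": _mean([i.detected - i.started for i in incidents]),
        "mttr": _mean([i.resolved - i.detected for i in incidents]),
        "restore_success_rate": sum(r.succeeded for r in restores) / len(restores),
        # A restore counts toward RTO achievement only if it succeeded in time.
        "rto_achievement_rate": sum(r.succeeded and r.duration <= r.rto for r in restores) / len(restores),
    }


t0 = datetime(2026, 1, 1, 12, 0)
incidents = [
    Incident(t0, t0 + timedelta(minutes=10), t0 + timedelta(hours=2, minutes=10)),
    Incident(t0, t0 + timedelta(minutes=30), t0 + timedelta(hours=1, minutes=30)),
]
two_h = timedelta(hours=2)
restores = [
    RestoreTest(True, timedelta(hours=1), two_h),
    RestoreTest(True, timedelta(hours=3), two_h),
    RestoreTest(False, timedelta(hours=1), two_h),
    RestoreTest(True, timedelta(minutes=30), two_h),
]
kpis = resilience_kpis(incidents, restores)
print(kpis["restore_success_rate"], kpis["rto_achievement_rate"])  # 0.75 0.5
```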

Without exaggeration, supervisors can request information at any time. So, once again — focus on tested controls, traceable evidence, accountable owners, and board-level visibility.


Common failure points that teams underestimate

Probably the most dangerous trap is treating regulatory audits as paperwork. Under the new rules, that approach is almost certain to fail. So much for the most harmful misstep. What else do teams underestimate?

1. Policies exist only on paper, without proof

Your team wrote an excellent ICT risk policy, approved it with the board…and failed DORA compliance. Why? Because auditors don’t want documents alone. They want tested controls and proper evidence.

Possible pitfalls:

You update risk registers only before audits

You document change approvals in tickets, but don’t sample them

You conduct access reviews informally and don’t retain records

How to remediate:

Introduce quarterly control sampling and document the results

Link every policy section to a control and every control to evidence

Assign named control owners with a concrete review cadence
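The traceability chain (policy section → control → evidence) can be checked mechanically. Here is a sketch. It assumes hypothetical dictionaries exported from your policy repository and evidence store. The section names and control IDs are illustrative.

```python
def traceability_gaps(policy_controls: dict[str, list[str]],
                      control_evidence: dict[str, list[str]]) -> dict[str, list[str]]:
    """Find policy sections without controls and linked controls without evidence."""
    sections_without_controls = [s for s, ctrls in policy_controls.items() if not ctrls]
    linked_controls = {c for ctrls in policy_controls.values() for c in ctrls}
    controls_without_evidence = sorted(c for c in linked_controls if not control_evidence.get(c))
    return {
        "sections_without_controls": sections_without_controls,
        "controls_without_evidence": controls_without_evidence,
    }


policy = {"4.1 Backups": ["BCK-01"], "4.2 Access": ["ACC-01", "ACC-02"], "4.3 Logging": []}
evidence = {"BCK-01": ["restore-2026-01.jsonl"], "ACC-01": []}
print(traceability_gaps(policy, evidence))
# {'sections_without_controls': ['4.3 Logging'], 'controls_without_evidence': ['ACC-01', 'ACC-02']}
```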

If you can’t show timestamped proof, supervisors will conclude that the control doesn’t exist.

2. You have backups, yet don’t test restores regularly

Many fintech teams have backups. Yet DORA expects predictable restoration performance, so you need a documented trail of restore tests.

Possible pitfalls:

You defined RTO and RPO targets, but never measured them

You execute restore tests only in lower environments

You have no proof that dependencies (databases, authentication, integrations) restore consistently

How to remediate:

Schedule restore tests for critical services at least annually

Record start and end times to validate RTO

Document data integrity checks post-restore

Track failed restore attempts as risk items until resolved
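Recording start and end times turns RTO and RPO from targets into measurements. Here is a minimal sketch. It assumes a drill in which you know three timestamps: when the disruption began, when service was restored, and when the last good backup was taken. `evaluate_drill` is a hypothetical helper.

```python
from datetime import datetime, timedelta


def evaluate_drill(disruption_at: datetime, restored_at: datetime,
                   last_backup_at: datetime, rto: timedelta, rpo: timedelta) -> dict:
    """Compare measured recovery time and data-loss window against targets."""
    recovery_time = restored_at - disruption_at        # what the RTO constrains
    data_loss_window = disruption_at - last_backup_at  # what the RPO constrains
    return {
        "recovery_time": recovery_time,
        "rto_met": recovery_time <= rto,
        "data_loss_window": data_loss_window,
        "rpo_met": data_loss_window <= rpo,
    }


result = evaluate_drill(
    disruption_at=datetime(2026, 3, 10, 10, 0),
    restored_at=datetime(2026, 3, 10, 13, 30),
    last_backup_at=datetime(2026, 3, 10, 9, 45),
    rto=timedelta(hours=4),
    rpo=timedelta(minutes=10),
)
print(result["rto_met"], result["rpo_met"])  # True False
```

A `False` in either field is exactly the kind of result to track as a risk item until it is resolved.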

3. Incident classification is unclear, so reporting clocks get missed

The DORA framework also defines specific, time-bound reporting under ESA technical standards.

Possible pitfalls:

Your severity matrix isn’t mapped to the regulation’s specific “major incident” criteria

Classification decisions aren’t documented

There are no dry runs to test regulatory reporting timelines

How to remediate:

Map business impact indicators directly to RTS thresholds

Pre-fill reporting templates for common incident types

Run simulation exercises

Assign owners for regulatory submissions
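Mapping impact indicators to RTS thresholds can be encoded as a rule that also records which criteria were met, and that list doubles as the classification rationale. This sketch follows our simplified reading of the RTS logic: an incident is major if it affects critical services and either involves malicious unauthorised access with potential data loss, or meets the thresholds of at least two other criteria. The threshold values are placeholders for illustration only, not the regulatory figures, and the criteria list is abridged.

```python
from dataclasses import dataclass

# Placeholder thresholds for illustration only; replace them with the RTS values.
THRESHOLDS = {
    "clients_affected_pct": 10.0,
    "downtime_hours": 2.0,
    "economic_impact_eur": 100_000.0,
    "member_states_affected": 2,
}


@dataclass
class ImpactAssessment:
    affects_critical_service: bool
    malicious_access_with_data_loss: bool
    clients_affected_pct: float = 0.0
    downtime_hours: float = 0.0
    economic_impact_eur: float = 0.0
    member_states_affected: int = 0
    reputational_impact: bool = False


def classify(a: ImpactAssessment) -> tuple[bool, list[str]]:
    """Return (is_major, criteria_met) so the rationale can be logged."""
    met = [name for name, limit in THRESHOLDS.items() if getattr(a, name) >= limit]
    if a.reputational_impact:
        met.append("reputational_impact")
    if not a.affects_critical_service:
        return False, met
    return a.malicious_access_with_data_loss or len(met) >= 2, met


is_major, criteria = classify(ImpactAssessment(True, False, clients_affected_pct=12, downtime_hours=3))
print(is_major, criteria)  # True ['clients_affected_pct', 'downtime_hours']
```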

4. Vendor contracts don’t match DORA expectations, and you don’t have exit tactics

Articles 28-44 are among the most demanding parts of DORA compliance. Yet, once broken down, they are less intimidating than they seem.

Possible pitfalls:

You didn’t include compliance requirements, periodic check-ins, and exit tactics in contracts with third-party vendors

Even if you have your exit plans in order, you may never have tested their feasibility

How to remediate:

Identify DORA’s minimum contractual requirements (Article 30) and include them in your contracts with vendors

You already have a grading of your business operations/functions. Now, cross-reference vendors against this grading

Estimate transition timelines in case of exit, data portability, and dependencies

It’s always a good idea to invite critical vendors to join your crisis training

Supervisors expect active, evidence-based oversight. A document alone won’t close their audit.

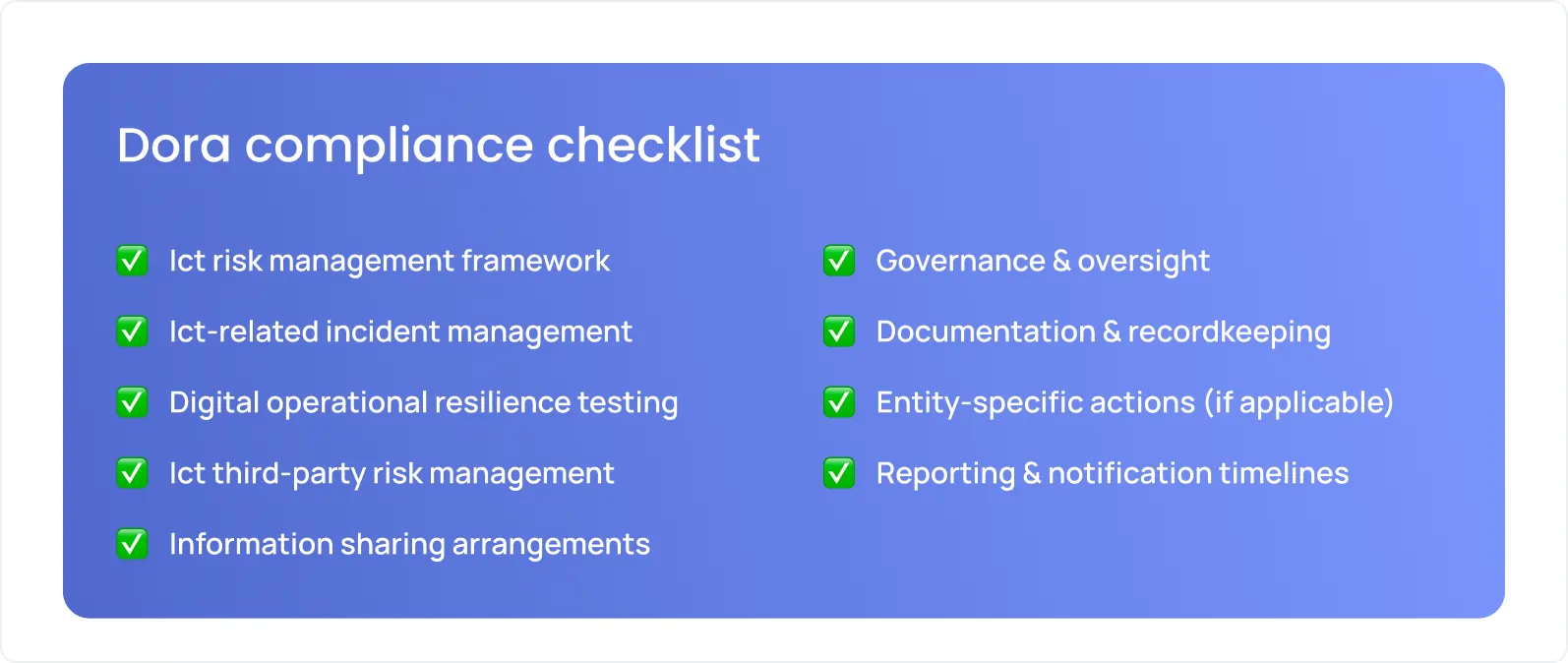

DORA compliance checklist

We tried to include all essential elements of a real audit in this checklist, so you can get a rough sense of whether you would pass one. Still, the devil is in the details: if you are unsure about the evidence for any item, prioritize a deep dive into it.

We grouped all items by pillars for your convenience.

Pillar 1. ICT risk management

Product Owner, CTO, and you identify and approve critical functions

Tech leader completes the asset inventory and assigns ownership

Tech and legal teams map the ICT risk register to services

You document change management controls

Teams conduct access reviews and retain the records

Dev and QA teams store backup and restore test results

Teams introduce board-level ICT risk reporting

Evidence as proof:

Risk register export

Asset inventory snapshot

Restore test report (with timestamps!)

Access review log

Change approval samples

Pillar 2. ICT-related incident management and reporting

Incident classification matrix (aligned to RTS)

Reporting plan with timelines

Regulatory submission roles

You have an active post-incident review/AAR process

Evidence as proof:

Incident log with classification rationale

Submitted report copies

Internal escalation timeline records

Root cause analysis documents

Pillar 3. Digital operational resilience testing

Embed security and regression tests into daily builds

Schedule mandatory disaster recovery drills

Evidence as proof: Automated test execution logs, defect remediation tracking boards, penetration testing reports

Pillar 4. Third-party ICT risk management

Audit all vendor contracts for mandatory access and testing rights

Validate the technical feasibility of vendor exit strategies

Ensure DORA-aligned contract clauses are in place

Monitor vendor performance on an ongoing basis

Evidence as proof:

Vendor risk register

Contract clause mapping

SLA reports

Incident notification samples

Exit feasibility documentation

Pillar 5. Information sharing

Ensure you have secure channels for threat intelligence

Evidence as proof: Threat feed ingestion logs; internal security alerts triggered by external data

How DeviQA helps with DORA readiness

Policy and informational work with the team is just one part of a complex system that helps ensure DORA compliance. A successful audit requires validation across engineering, testing, operations, and, in some sense, your operational and business maturity.

The DeviQA team treats DORA readiness as a delivery program, and our primary focus is the technical execution:

DORA gap assessment: Across all five pillars and overall maturity. We review your QA, security, and disaster recovery processes and develop a 3-to-6-month remediation strategy.

Operational resilience testing: Two parts: (1) implementing measures so that your systems can survive and recover from real-world failures; and (2) properly documenting the results.

Security: End-to-end coordination of penetration testing, vulnerability scanning, IAM, authentication testing, and API security validation. Fine-tuning continuous regression testing.

Transitioning to ongoing support: Your software and vendors will change. We cover that with regular resilience and recovery testing, DR and failover drills, and ongoing support during regulatory reviews.

A tested control environment, documented validation results, and a robust evidence set build confidence and give your team strong arguments to use during the audit.

In a strategic consultation, we can assess your current position, given your maturity, readiness, and specific processes.

About the author

Chief Technology Officer

Dmitry Reznik is the Chief Technology Officer and co-founder at DeviQA, bringing deep technical expertise across software architecture, implementation, and long-term system operation.