Platform rebuilds rarely break everything at once.

Quality degrades subtly, regressions creep in, data starts to drift, and familiar workflows behave just differently enough to cause doubt. In healthcare tech, users often don’t report these issues. They lose trust and work around the system.

That’s why rebuilding isn’t only a technical challenge. It’s a quality preservation problem.

We saw this during our work with GoodShape, where rebuilding had to happen under live usage and real production data. The risk wasn’t platform stability; it was losing behavior users already relied on.

During rebuilds, maintaining behavior matters far more than shipping new code.

What really breaks during platform rebuilds

When teams talk about rebuild risks, they usually think in terms of features. What actually breaks during a rebuild is continuity.

The most damaging issues are not new bugs, but changes to behavior users silently depended on.

Regressions are the first signal.

Workflows that were stable for years start producing different results. Edge cases that were previously handled, sometimes unintentionally, disappear during re-implementation. Nothing is technically “broken,” but outcomes change.

Data drift follows.

The same underlying data produces different calculations or aggregations in the rebuilt system. Reports no longer align with historical results, raising questions that are difficult to answer and even harder to ignore.

Then come the behavior changes users didn’t ask for.

Small adjustments in workflows invalidate established habits. UI “improvements” increase friction instead of clarity. What looked like cleanup or optimization quietly becomes a trust issue.

In QA for healthcare platforms, this compounds quickly. Historical data isn’t archival; it drives decisions. Small inconsistencies don’t stay small. They echo forward, undermining confidence long before anyone labels them as defects.
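The data drift described above can often be caught by running the same historical records through both implementations and comparing the aggregates. The sketch below is illustrative only: the records, the `legacy_days` and `rebuilt_days` functions, and the inclusive-end-day rule are all assumptions made up for the example, not GoodShape logic.

```python
from collections import defaultdict
from datetime import date

# Hypothetical historical records: (employee_id, first_day, last_day) of an absence.
RECORDS = [
    ("e1", date(2024, 1, 1), date(2024, 1, 3)),
    ("e1", date(2024, 2, 10), date(2024, 2, 10)),
    ("e2", date(2024, 3, 4), date(2024, 3, 8)),
]

def legacy_days(first_day, last_day):
    # Assumed legacy rule: both the first and the last day count as absent.
    return (last_day - first_day).days + 1

def rebuilt_days(first_day, last_day):
    # Assumed re-implementation that silently dropped the inclusive end day.
    return (last_day - first_day).days

def totals_per_employee(records, days_fn):
    """Aggregate absence days per employee using the given day-count rule."""
    totals = defaultdict(int)
    for employee_id, first_day, last_day in records:
        totals[employee_id] += days_fn(first_day, last_day)
    return dict(totals)

def find_drift(records, old_fn, new_fn):
    """Return {employee_id: (legacy_total, rebuilt_total)} where totals differ."""
    old = totals_per_employee(records, old_fn)
    new = totals_per_employee(records, new_fn)
    return {key: (old[key], new.get(key)) for key in old if old[key] != new.get(key)}

drift = find_drift(RECORDS, legacy_days, rebuilt_days)
print(drift)  # {'e1': (4, 2), 'e2': (5, 4)}
```

Neither implementation raises an error here, which is the point: only a side-by-side comparison on real data shows that the report totals have moved.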

Why feature-based testing fails during rebuilds

Feature-based testing is built on a simple assumption: changes are isolated.

That assumption breaks down during platform rebuilds. Rebuilds rarely introduce new functionality. They change how the system works under the hood, while the surface looks the same.

| Aspect | Feature-based testing | System-level testing |
| --- | --- | --- |
| Scope | Specific, feature-focused | Entire system or functionality |
| Objective | Validate individual features | Ensure complete system functionality |
| Granularity | Detailed and isolated | Broader and holistic |
| Examples | Testing the "Search" feature | Testing search combined with filtering and sorting |
| Dependency | Features can be tested in isolation | Requires integration and interaction of multiple components |

When QA focuses only on feature validation:

individual screens pass

APIs respond correctly

new code meets its acceptance criteria

Yet the system as a whole behaves differently.

This is the critical failure mode of rebuild testing: “all tests pass,” while real user workflows are broken.

Feature-level testing can’t detect when:

a workflow produces the same result, but through a different path

timing or sequencing changes alter outcomes

integrations remain functional but no longer align with user expectations

During rebuilds, correctness isn’t about whether a feature works in isolation. It’s about whether the system still behaves the way users rely on.

That’s why QA must shift from feature testing to behavior validation: checking continuity, outcomes, and expectations across the system, not just individual components.

From feature testing to behavior validation

Rebuilds force QA to change mindset.

When architecture shifts, the primary question is no longer “Does this feature work?” but “Does the system still behave the way users depend on?” Behavior validation moves QA from validating implementation to protecting outcomes.

What behavior validation actually means

Behavior validation starts with one defining question: what must stay the same?

Instead of checking isolated endpoints or UI elements, QA validates:

complete workflows from start to outcome

rules and constraints applied across the system

results produced over time, not just in a single session

A critical practice during rebuilds is comparing legacy and rebuilt behavior. The goal isn’t to prove the new system works, but to confirm it behaves equivalently where equivalence matters.

This includes validating:

inputs that produce the same outputs

edge conditions that historically shaped user expectations

long-running scenarios that span days, weeks, or months
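Comparing legacy and rebuilt behavior can be sketched as a small parity harness. The example below is a minimal illustration under stated assumptions: `legacy_absence_days` and `rebuilt_absence_days` are hypothetical functions, and the rounding difference between them is invented to show how an innocent-looking cleanup changes edge-case results. The `accepted` parameter is one possible way to record intentional changes, so parity is enforced only where equivalence matters.

```python
from dataclasses import dataclass
from typing import Any

@dataclass(frozen=True)
class Mismatch:
    case: Any
    legacy: Any
    rebuilt: Any

def _run(fn, case):
    # An exception is part of observable behavior, so record it as a result.
    try:
        return ("ok", fn(case))
    except Exception as exc:
        return ("error", type(exc).__name__)

def check_parity(cases, legacy_fn, rebuilt_fn, accepted=frozenset()):
    """Run every case through both implementations and collect differences.

    Cases listed in `accepted` are intentional changes and are skipped.
    """
    mismatches = []
    for case in cases:
        if case in accepted:
            continue
        old, new = _run(legacy_fn, case), _run(rebuilt_fn, case)
        if old != new:
            mismatches.append(Mismatch(case, old, new))
    return mismatches

def legacy_absence_days(hours):
    # Hypothetical legacy rule: convert hours to days, rounding halves up.
    return int(hours / 8 + 0.5)

def rebuilt_absence_days(hours):
    # Hypothetical rewrite: Python's round() rounds halves to the nearest even number.
    return round(hours / 8)

CASES = [0, 4, 8, 12, 20]  # includes half-day boundaries as edge conditions
mismatches = check_parity(CASES, legacy_absence_days, rebuilt_absence_days)
for m in mismatches:
    print(m)
```

With these inputs the two functions agree on whole days and disagree only on some half-day boundaries (4 and 20 hours), which is exactly the kind of difference a feature-level test with "typical" data tends to miss.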

QA as the keeper of behavioral contracts

During rebuilds, QA becomes the keeper of behavioral contracts, the unwritten agreements between the system and its users.

These contracts define what cannot change, even when everything else does.

QA formalizes those contracts through:

documentation that captures business rules and exceptions

test cases that lock down expected behavior

regression coverage designed around workflows, not modules

In healthcare platforms, these contracts are not abstractions. They are concrete:

absence calculation rules

return-to-work logic

reporting consistency across historical data

When QA owns these non-negotiables, rebuilds stay safe. When it doesn’t, quality resets quietly, and trust is lost without warning.
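One way to turn a behavioral contract into something enforceable is to write each rule down as a named case with an expected outcome, independent of any implementation. The sketch below assumes a hypothetical return-to-work rule (return on the next weekday after the absence ends); the rule, the function name, and the dates are illustrative, not taken from a real platform.

```python
from datetime import date, timedelta

def return_to_work_date(last_absent_day: date) -> date:
    """Hypothetical rule: return on the next weekday after the absence ends."""
    day = last_absent_day + timedelta(days=1)
    while day.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
        day += timedelta(days=1)
    return day

# Each contract: (plain-language name, input, expected outcome).
CONTRACTS = [
    ("midweek absence returns next day", date(2024, 3, 5), date(2024, 3, 6)),
    ("Friday absence returns Monday", date(2024, 3, 8), date(2024, 3, 11)),
    ("Saturday absence returns Monday", date(2024, 3, 9), date(2024, 3, 11)),
]

def verify_contracts(fn, contracts):
    """Return (name, expected, actual) for every contract the function breaks."""
    failures = []
    for name, given, expected in contracts:
        actual = fn(given)
        if actual != expected:
            failures.append((name, expected, actual))
    return failures

failures = verify_contracts(return_to_work_date, CONTRACTS)
print(failures or "all contracts hold")
```

Because the contract table names the rule in plain language, the same list can double as documentation and be run unchanged against any re-implementation.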

Regression strategy matters more than velocity

During platform rebuilds, velocity becomes a misleading metric.

Shipping faster doesn’t mean you’re safer. In fact, speed often hides risk. Every rapid release amplifies the chance that subtle regressions slip through, especially when underlying logic is being rewritten and legacy behavior is only partially understood.

Why speed is the wrong success metric during rebuilds

Fast releases reward visible progress, not correctness.

When teams optimize for velocity during a rebuild:

regressions surface later, further from the change that caused them

confidence drops even as release frequency increases

teams start relying on post-release fixes instead of prevention

Velocity without confidence leads to a dangerous pattern: the platform moves forward faster while trust erodes quietly underneath. In healthcare systems, that trade-off is unacceptable.

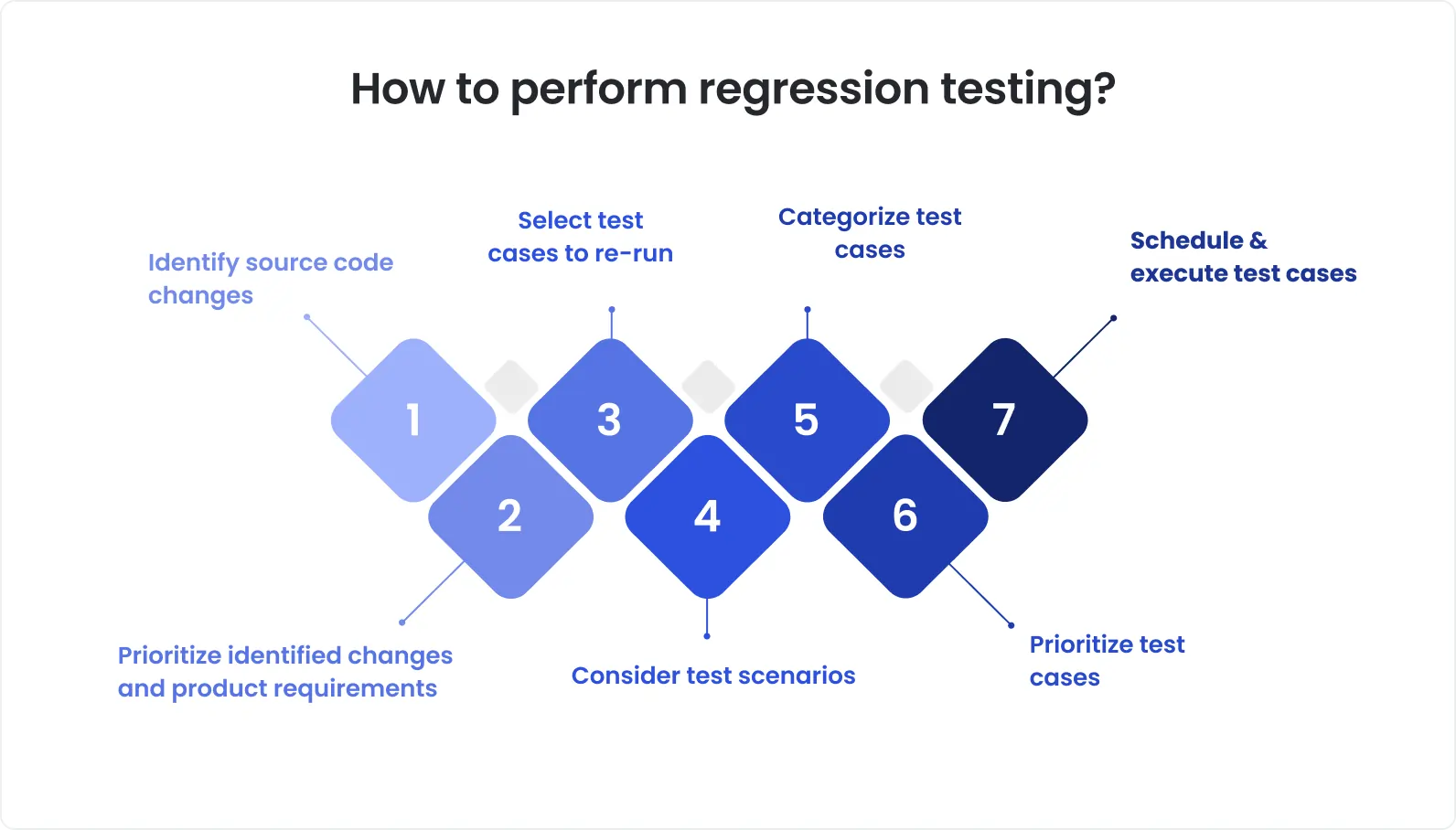

Designing regression around critical workflows

The purpose of regression testing during a rebuild is not coverage. It’s risk control.

Effective regression strategy starts by identifying what cannot break:

high-frequency workflows users rely on daily

high-risk logic tied to compliance, reporting, or decision-making

behaviors that have accumulated historical meaning over time

These paths must be locked down before the rebuild accelerates. Test cases, datasets, and expected outcomes become reference points against which every change is measured.

During migration, these workflows aren’t tested occasionally. They’re re-tested relentlessly, across releases, environments, and stages of the rebuild.

When regression is framed as risk control rather than QA overhead, it stops slowing teams down. It becomes the mechanism that allows rebuilds to proceed without breaking the product users already trust.
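Identifying what cannot break can start as a simple, explicit ranking. The sketch below is only an illustration: the `Workflow` fields, the weights in `priority`, the threshold, and the sample workflows are all assumptions, and a real team would calibrate them against its own usage data and compliance requirements.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Workflow:
    name: str
    daily_runs: int     # how often users exercise the workflow
    risk: int           # 1 (low) to 5 (compliance or reporting critical)
    has_history: bool   # results are compared against historical data

def priority(workflow: Workflow) -> int:
    # Illustrative weighting: risk dominates, history and frequency add to it.
    score = workflow.risk * 10
    if workflow.has_history:
        score += 5
    if workflow.daily_runs >= 100:
        score += 10
    return score

def locked_down(workflows, threshold=30):
    """Names of workflows to re-test on every release, highest priority first."""
    ranked = sorted(workflows, key=priority, reverse=True)
    return [w.name for w in ranked if priority(w) >= threshold]

WORKFLOWS = [
    Workflow("log absence", daily_runs=500, risk=3, has_history=False),
    Workflow("return-to-work sign-off", daily_runs=120, risk=5, has_history=True),
    Workflow("monthly absence report", daily_runs=2, risk=4, has_history=True),
    Workflow("update profile photo", daily_runs=40, risk=1, has_history=False),
]

must_run = locked_down(WORKFLOWS)
print(must_run)
```

The value of a list like this is less in the exact scores than in making the "must never break" set visible and agreed before the rebuild accelerates.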

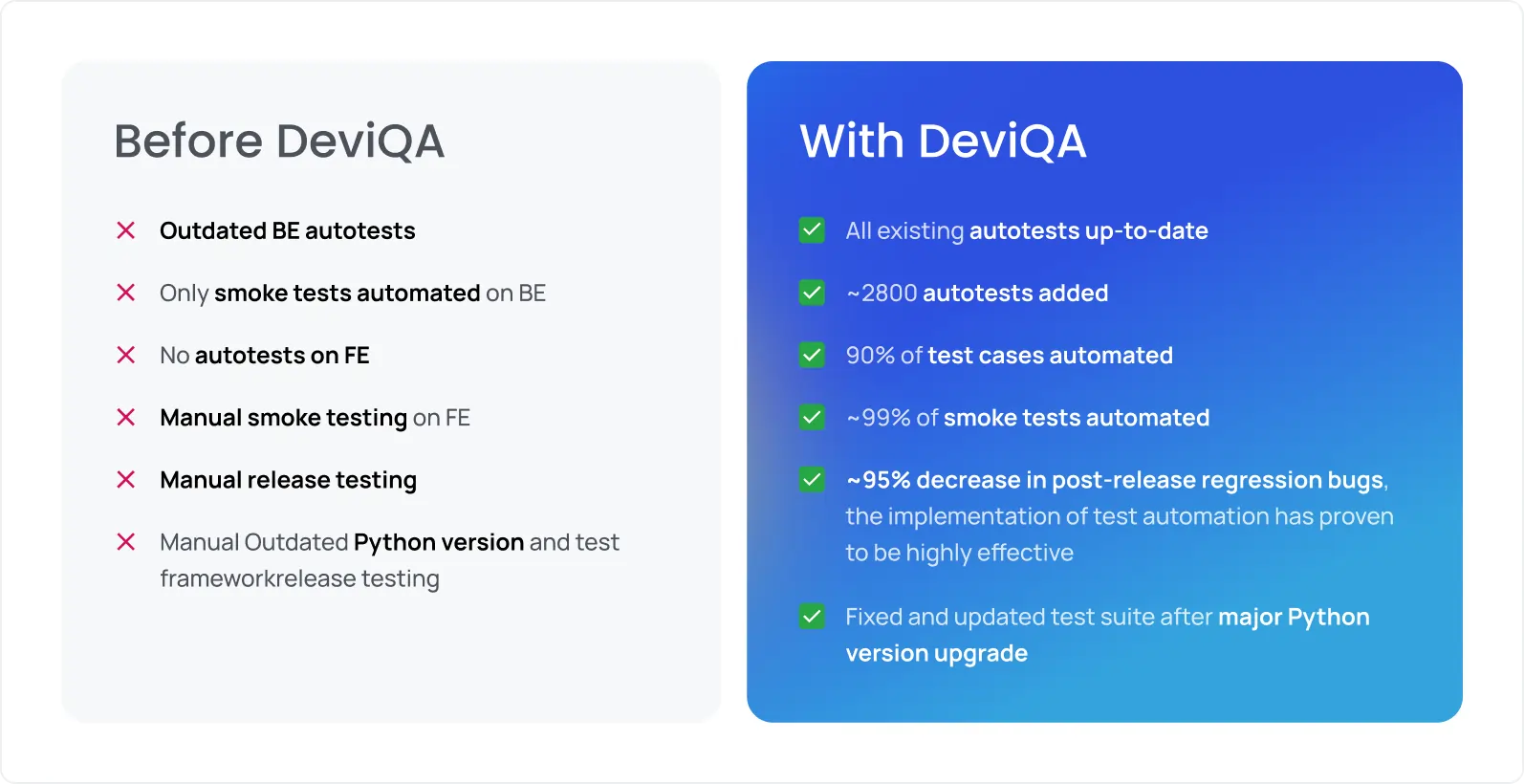

Rebuilding QA alongside the platform – The GoodShape experience

In a live platform rebuild, QA can’t lag behind architecture. It has to evolve in parallel.

That was the reality in the GoodShape rebuild. The platform was being modernized while actively supporting customers, existing data, and established workflows. Pausing delivery or “resetting” quality wasn’t an option.

To make the rebuild viable, QA itself had to be rebuilt.

The shift was deliberate:

From ad-hoc checks to a structured QA system that could survive architectural change

From feature verification to workflow validation, ensuring outcomes stayed consistent

From informal knowledge to documented behavior, so logic wasn’t lost during re-implementation

Concretely, this meant:

Formalizing documentation to preserve legacy behavior and business rules

Building a regression suite mapped to real absence-management and return-to-work workflows

Actively validating parity between old and new logic as components were migrated

QA wasn’t just checking the rebuilt platform; it was acting as the reference point for what “correct” meant.

The outcome was stability under change:

The rebuild progressed without quality resets

Releases remained predictable even as architecture evolved

Late-stage surprises decreased as risk moved upstream into QA

The important point is this: GoodShape is not an exception. The same pattern appears whenever live healthcare platforms are rebuilt successfully.

Rebuilds succeed when QA is treated as infrastructure, rebuilt alongside the platform, not bolted on after the fact.

To sum up

Re-architecture changes how a system is built. It must not change how the system behaves.

During rebuilds, code is temporary. Architecture is replaceable. Behavior is not. That continuity is what users trust, and what QA exists to protect.

This is where QA shifts from a supportive function to a strategic one.

QA provides stability when everything else is in motion:

it preserves expected outcomes as implementations change

it validates continuity across parallel systems and phased migrations

it anchors decisions when trade-offs arise between “cleaner code” and “safe behavior”

The real leverage comes from artifacts that outlive any single rebuild:

documentation that captures business logic and edge cases

test cases that define non-negotiable behavior

regression suites that continuously enforce those contracts

When architecture changes without these anchors, quality resets silently. When QA owns them, systems can evolve without breaking trust.

If your team is rebuilding a healthcare platform and needs QA that preserves behavior through change, DeviQA supports re-architecture projects with structured regression, behavior validation, and long-term QA ownership.