Mental-health software rarely fails loudly.

Quality issues don’t show up as outages. They show up as doubt: inconsistent data, unclear flows, reports clinicians stop trusting. Users don’t complain. They disengage.

In this space, trust is the product. And most QA processes aren’t built to protect it at scale.

Based on insights from our work on real mental-health platforms, this article outlines how to design a sustainable QA process for mental-health software, one that supports long-term care, evolving teams, and growing product complexity.

Why mental-health software requires a different QA approach

Industry data shows that 50–70% of users abandon mental-health apps within the first month, often without a single reported bug. The reason isn’t missing features or performance outages but gaps in quality assurance for healthcare software. Those gaps produce a loss of confidence: unclear flows, inconsistent data, and moments where the product stops feeling reliable in emotionally sensitive situations.

In mental health software testing, quality is defined by three core factors.

Trust: users must believe their data is accurate and handled consistently

Continuity: progress, history, and context must persist flawlessly over time

Consistency: experiences must remain predictable across sessions, devices, and updates

Break any one of these and engagement drops silently.

That’s why QA in mental-health software plays a fundamentally different role. Its function goes beyond defect detection to risk prevention:

Preventing subtle regressions that undermine clinical confidence

Protecting longitudinal data integrity as features evolve

Identifying UX friction that test automation tools often miss

Ensuring behavioral consistency, not just functional correctness

In practice, this means QA can’t be introduced late or treated as a release-phase activity. It must operate as a risk-management layer, continuously validating that the product remains safe, predictable, and trustworthy as it grows.

For mental-health platforms, the absence of bugs isn’t enough. The measure of quality is whether users continue to trust the system without thinking about it at all.

Common QA failure patterns in mental-health platforms

Most quality breakdowns in mental-health software aren’t caused by poor engineering, but by immature healthcare software QA practices. They come from QA processes that never evolved beyond the MVP stage.

What works for early validation in mental health app testing quietly becomes a liability as the product matures.

The most common patterns we see:

1. MVP-level testing stretched too far

Early-stage test coverage focuses on “does this flow work at all?” As features multiply and user journeys lengthen, those checks stop capturing long-term behavior, edge cases, and data consistency across time.

2. Ad-hoc processes and undocumented behavior

Critical logic lives in developers’ context, past Slack threads, or release memory. QA depends on what people remember rather than what systems enforce. Over time, this leads to inconsistent expectations of how the product should behave.

3. Late-stage bug discovery before releases

Without structured coverage, issues surface during final validation, when they’re most expensive to fix. In healthcare-related software, this often triggers release delays, rushed hotfixes, or risk-acceptance decisions leadership shouldn’t have to make.

4. Over-reliance on fast fixes instead of systems

Quick patches may resolve isolated issues but don’t address root causes. Each fix increases process complexity while lowering overall confidence, creating a cycle of firefighting rather than improvement.

The result is predictable: releases slow down, regressions reappear, and quality becomes reactive.

Sustainability starts with structure

QA that works only in early-stage delivery isn’t sustainable QA.

To survive scale, quality must be designed to outlast releases, people, and feature growth.

In mental-health platforms, the biggest threat to quality isn’t new functionality; it’s loss of product understanding over time. Teams rotate. Context fades. Features evolve. Without structure, QA silently degrades.

That’s where documentation becomes a core asset in healthcare QA services, not overhead.

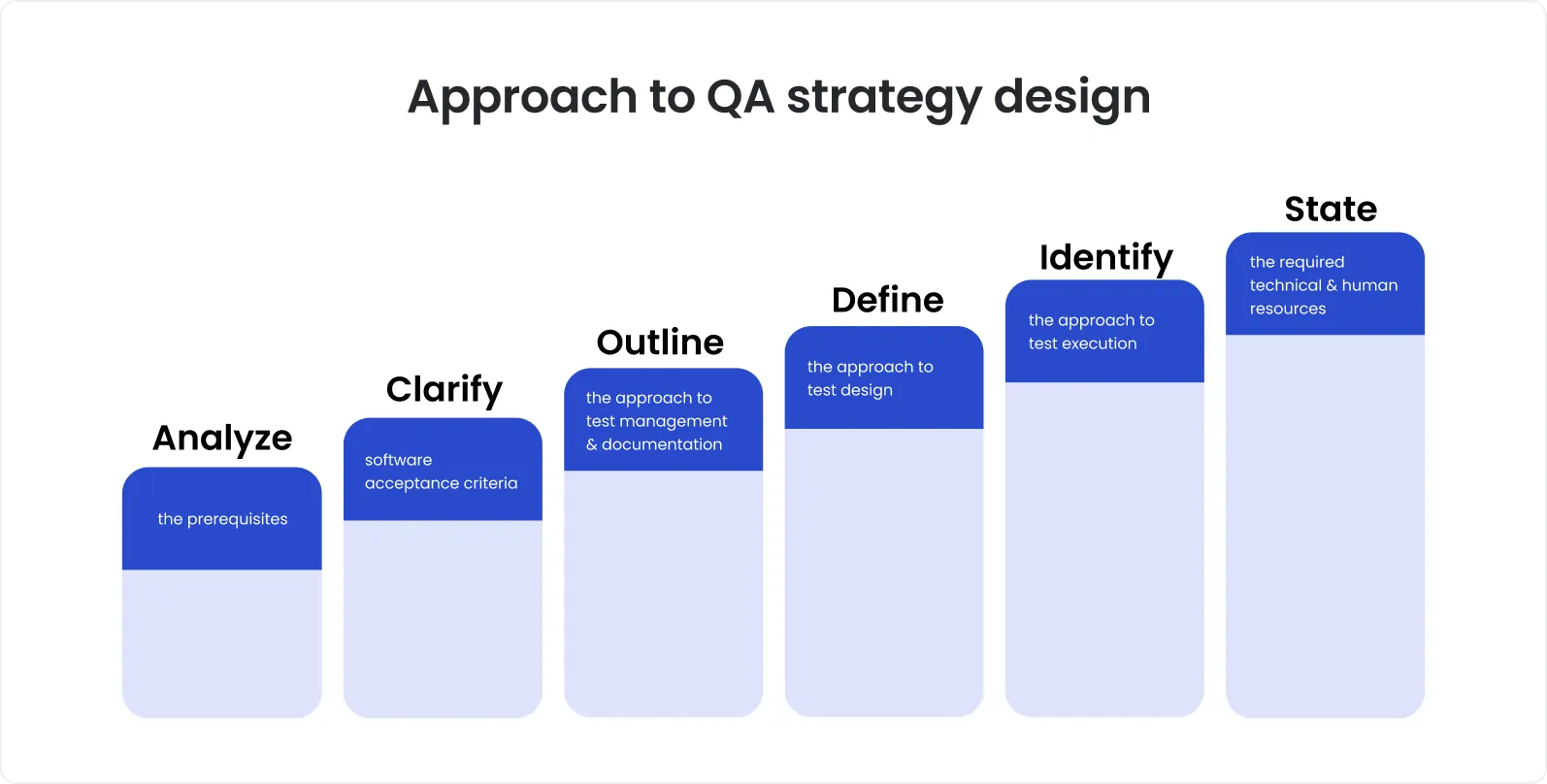

Sustainable QA is built on three structural pillars:

Functional specifications. Clear definitions of how features are supposed to behave, not just what they do. In mental-health software, this includes edge cases, state transitions, and long-term data behavior.

Acceptance criteria. Shared rules that define “done” from both a clinical and user perspective. These criteria anchor testing to outcomes, not interpretations.

Traceable test cases. Test coverage mapped directly to specs and workflows, making it possible to understand what breaks when behavior changes, and why.

We saw the impact of this shift clearly in our work on Catalyst, a mental-health platform that had outgrown informal QA. Stability only improved after product behavior was formalized into structured documentation and test coverage. Once knowledge moved from individuals into systems, QA became predictable and scalable.
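To make the idea of traceable test cases concrete, here is a minimal sketch of how test cases might be linked to specification sections so that impact analysis becomes a lookup rather than a memory exercise. The identifiers (`TC-101`, `SPEC-MOOD-01`, and so on) and the `TracedCase` structure are hypothetical and only illustrate the mapping; they are not taken from any real project or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TracedCase:
    case_id: str
    title: str
    spec_ids: tuple[str, ...]  # specification sections this case verifies


# Hypothetical identifiers, for illustration only.
TEST_CASES = [
    TracedCase("TC-101", "Mood entry is saved after app restart", ("SPEC-MOOD-01",)),
    TracedCase("TC-102", "Weekly trend reflects edited entries", ("SPEC-MOOD-01", "SPEC-REPORT-03")),
    TracedCase("TC-201", "Clinician report shows full history", ("SPEC-REPORT-03",)),
]


def cases_affected_by(changed_spec_ids: set[str], cases: list[TracedCase]) -> list[str]:
    """Return IDs of test cases that verify any of the changed specs."""
    return [c.case_id for c in cases if changed_spec_ids & set(c.spec_ids)]


def uncovered_specs(all_spec_ids: set[str], cases: list[TracedCase]) -> set[str]:
    """Return specs that no test case currently traces to."""
    covered = {spec_id for c in cases for spec_id in c.spec_ids}
    return all_spec_ids - covered
```

When a spec changes, `cases_affected_by` lists the tests to rerun or revise, and `uncovered_specs` flags documented behavior that nothing verifies yet. In practice this mapping would usually live in a test-management tool rather than in code; the sketch only shows the relationship worth preserving.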

Testing real patient and clinician flows

Mental-health platforms are rarely single-surface products. They combine patient-facing mobile apps, clinician web dashboards, and reporting layers that support long-term decision-making. Most quality issues don’t live inside features; they live between them.

This is where many mental health app testing strategies fail.

Screen-based testing verifies that individual UI elements work. It does not verify that the system behaves consistently over time, across roles, and across devices.

Effective QA in mental-health software shifts focus to end-to-end workflows, including:

1. Patient journeys

entering data over multiple sessions

pausing and resuming usage

interacting during emotionally sensitive moments

2. Clinician workflows

reviewing longitudinal data

relying on reports and trends

making decisions based on historical accuracy

3. System continuity

data persistence across devices

synchronization between mobile and web

consistency after updates and releases

Trust breaks when these workflows drift out of alignment, even if each screen technically works.

Workflow-based testing exposes gaps that UI checks miss: delayed updates, misaligned calculations, incomplete history, or contradictory views of the same data. These issues rarely register as bugs, but they directly undermine confidence.
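One way to picture a workflow-level check is to compare what two surfaces show for the same patient. The sketch below assumes both the patient app and the clinician portal can export their entries in a common shape; `MoodEntry`, its fields, and the 1-10 scale are hypothetical stand-ins rather than a real schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MoodEntry:
    entry_id: str
    day: date
    score: int  # hypothetical 1-10 self-reported mood scale


def find_view_mismatches(mobile_entries, web_entries):
    """Compare what the patient app and the clinician portal show.

    Returns a human-readable description of every disagreement.
    """
    mobile = {e.entry_id: e for e in mobile_entries}
    web = {e.entry_id: e for e in web_entries}
    problems = []
    # Entries that exist on one surface only point to incomplete history.
    for entry_id in sorted(mobile.keys() - web.keys()):
        problems.append(f"{entry_id}: missing from clinician view")
    for entry_id in sorted(web.keys() - mobile.keys()):
        problems.append(f"{entry_id}: missing from patient view")
    # Entries present on both surfaces must agree field by field.
    for entry_id in sorted(mobile.keys() & web.keys()):
        if mobile[entry_id] != web[entry_id]:
            problems.append(f"{entry_id}: values differ between views")
    return problems
```

An empty result means the two views agree. Anything else is exactly the kind of contradiction that a screen-by-screen check would pass over, because each screen on its own renders correctly.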

Manual QA as the quality baseline

In mental health software testing, manual QA is not a temporary step on the way to full test automation. It is the foundation that protects user trust.

Emotionally sensitive products demand judgment that tools can’t replicate. Exploratory testing surfaces issues that never appear in scripted scenarios: flows that feel confusing, copy that lands poorly, or behavior that is technically correct but contextually wrong for users in vulnerable moments.

Manual QA is critical for identifying:

UX and copy issues that affect emotional safety and clarity

Behavioral edge cases caused by irregular, real-world usage

Continuity problems that only appear across multiple sessions

This becomes even more important in long-running platforms, where deep product knowledge is a quality multiplier. QA engineers who stay with a product over time recognize patterns, fragile areas, and historical risk zones that new tools or rotating teams would miss.

We saw this clearly in our work on Catalyst. Dedicated manual QA, maintained over years, enabled the team to consistently catch trust-breaking issues before release and to define which workflows were truly stable enough for test automation.

This is the key distinction: in mental-health products, manual QA doesn’t slow teams down. It defines what should, and should not, be automated, ensuring test automation strengthens quality rather than undermines it.

Test automation that supports stability

Test automation in mental-health software testing should exist for one reason: to protect stability over time. Not to boost test counts. Not to impress dashboards.

The most effective test automation targets what must never break:

Core regression paths that underpin daily use

Data-heavy workflows where manual rechecking is error-prone

Reporting and calculation logic clinicians rely on for decisions
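For calculation logic in particular, a small set of pinned inputs and hand-reviewed outputs is often enough to catch silent drift. The following is a sketch under stated assumptions: `weekly_averages` is a hypothetical stand-in for whatever trend calculation a report actually uses, and the pinned cases are illustrative values, not clinical data.

```python
def weekly_averages(daily_scores: list[int]) -> list[float]:
    """Average consecutive 7-day windows; a trailing partial week is averaged as-is."""
    averages = []
    for start in range(0, len(daily_scores), 7):
        window = daily_scores[start:start + 7]
        averages.append(round(sum(window) / len(window), 2))
    return averages


# Pinned inputs and expected outputs, reviewed once by hand.
GOLDEN_CASES = [
    ([4, 4, 4, 4, 4, 4, 4], [4.0]),
    ([1, 2, 3, 4, 5, 6, 7, 8, 9], [4.0, 8.5]),
    ([], []),
]


def check_golden_cases() -> list[str]:
    """Return a description of each pinned case whose output has changed."""
    failures = []
    for scores, expected in GOLDEN_CASES:
        actual = weekly_averages(scores)
        if actual != expected:
            failures.append(f"{scores}: expected {expected}, got {actual}")
    return failures
```

The value of a check like this is that a change in rounding or in partial-week handling fails loudly in the pipeline instead of surfacing as a number a clinician quietly stops believing.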

Problems start when test automation is scaled without ownership.

High-volume, low-ownership test automation is a common failure pattern in healthcare software QA:

tests pass for the wrong reasons

failures are ignored or muted

maintenance cost quietly rises until confidence drops

At that point, test automation stops acting as a safety net and becomes technical debt.

Sustainable test automation is deliberately constrained:

limited in scope

tightly aligned to documented behavior

owned by people who understand the product, not just the framework

This approach maintains resilience as the platform evolves.

In practice, success is not measured by how many tests exist, but by how confidently teams ship. When test automation consistently answers one question, “Is the product still safe to release?”, it’s doing its job.

Release discipline as part of QA design

In mental-health software testing, releases are not just technical events. They are risk events.

Without release discipline, even strong test coverage can fail to prevent last-minute surprises. Bugs surface late, fixes get rushed, and teams are forced to choose between delay and risk, a decision that shouldn’t happen in high-trust products.

Well-designed QA treats release readiness as part of the system, not an afterthought.

That starts with mandatory smoke testing before every release:

verifying core patient flows

confirming data persistence and sync

ensuring clinician-facing reports behave as expected
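A mandatory smoke pass can be expressed as a simple gate: every named check must pass, or the release is not ready. The sketch below assumes each check is a plain function returning `True` or `False`; the check names mirror the list above, and `run_release_gate` is a hypothetical helper, not part of any specific CI product.

```python
from typing import Callable

SmokeCheck = Callable[[], bool]


def run_release_gate(checks: dict[str, SmokeCheck]) -> tuple[bool, list[str]]:
    """Run every smoke check; the release is ready only if all pass.

    A check that raises is treated as failed rather than aborting the run,
    so the report always lists every failing area.
    """
    failed = []
    for name, check in checks.items():
        try:
            passed = check()
        except Exception:
            passed = False
        if not passed:
            failed.append(name)
    return (not failed, failed)


# Hypothetical wiring; real checks would drive the app or call its APIs.
EXAMPLE_CHECKS: dict[str, SmokeCheck] = {
    "core patient flows": lambda: True,
    "data persistence and sync": lambda: True,
    "clinician-facing reports": lambda: True,
}
```

Running all checks before reporting, rather than stopping at the first failure, keeps the go/no-go conversation short: the team sees the full list of affected areas at once.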

Regression testing must also be predictable, not heroic. Time-boxed cycles, consistent scope, and clear ownership reduce uncertainty and prevent testing from expanding uncontrollably as the product grows.

Just as important is early QA involvement. When QA participates in release planning, not just validation, teams catch risks earlier, prioritize testing effectively, and avoid late-stage rewrites.

The payoff is simple but powerful: predictable, repeatable releases. When releases stop being stress tests for the team, quality has moved from reactive control to designed resilience.

Example from practice: Catalyst case study

Sustainability in QA becomes visible when a product stops relying on individual effort and starts relying on systems.

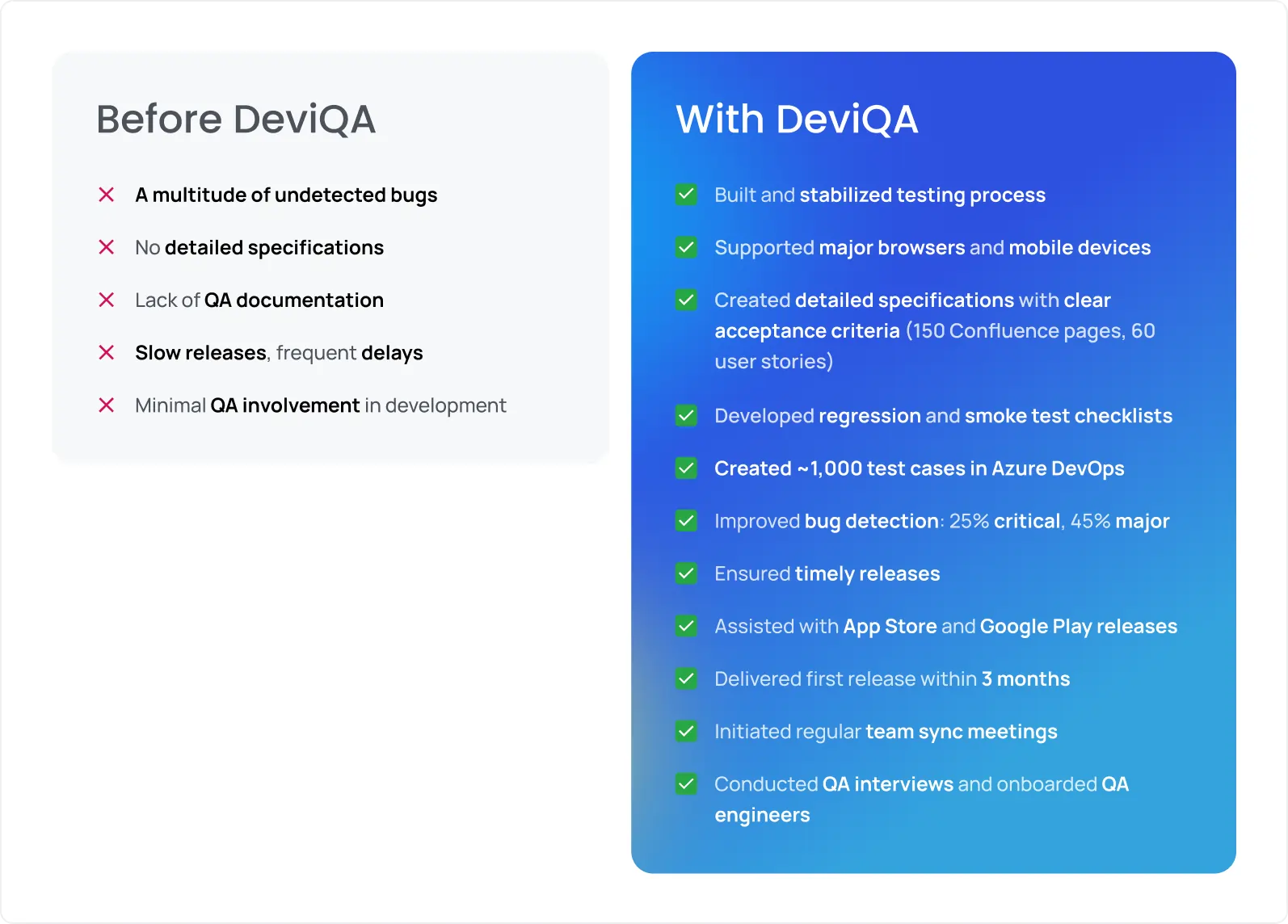

We saw this transition clearly in our work on Catalyst, a mental-health platform that had moved beyond early-stage delivery. Initially, quality depended on informal checks and team context, a model that worked early on, but began to strain as the product and usage grew.

Stability improved only after QA was redesigned as a structured healthcare software QA function.

The QA team created about 150 Confluence pages to formalize product behavior, requirements, and edge cases.

They built about 1,000 test cases in Azure DevOps, mapping testing to real workflows rather than ad-hoc checks.

Testing shifted from patchwork to end-to-end: covering both the mobile app (for patients) and web portal (for clinicians), including data sync, reporting, and cross-device/browser coverage.

The result wasn’t just fewer defects. Releases became predictable. Regressions dropped. The team stopped discovering critical issues late in the cycle, and QA shifted from reactive firefighting to a stable system.

The important point is that the Catalyst story is not a one-off. This pattern repeats across mental-health platforms that succeed long-term. Sustainable QA emerges not from testing harder, but from designing quality as infrastructure, independent of people, timelines, or release frequency.

This is the difference between QA that occasionally “works,” and QA that scales reliably with product growth.

Designing QA as a long-term function

Sustainable QA is not something a team “sets up” and moves on from. It matures over years, not sprints.

Mental-health products are long-lived by nature. User history, clinical insight, and trust accumulate over time, and QA must evolve at the same pace. Short-term QA bursts may reduce visible defects, but they don’t build the institutional knowledge or discipline required to protect quality as complexity grows.

This is where ownership becomes decisive.

Continuous ownership allows QA to internalize product logic, historical risk areas, and behavioral nuances.

Short-term or rotating QA efforts reset context repeatedly, increasing regression risk and reintroducing previously solved problems.

Over time, stable QA processes deliver compounding returns:

fewer repeat defects

faster, more predictable releases

clearer impact analysis when changes are planned

lower cost of validation per release

The opposite is also true. Quality debt compounds alongside technical debt. Each undocumented decision, skipped test, or rushed release increases the friction of future development. As growth accelerates, that hidden debt turns into delays, rework, and rising risk tolerance, which is especially dangerous in mental-health software.

Designing QA as a long-term function is not about heavier process. It’s about ensuring that quality becomes an asset that strengthens the product as it scales, rather than a constraint teams struggle to keep up with.

Key takeaways for mental-health product teams

Design QA as infrastructure: quality must scale with the product, not depend on individuals.

Document while knowledge is fresh: context evaporates faster than teams expect.

Test behavior first, features second: trust breaks in workflows, not screens.

Align manual and automated testing: test automation should reinforce understanding, not replace it.

Prioritize sustainability over speed: predictable quality enables long-term growth.

If you’re building or scaling a mental-health product and need QA that holds up over time, DeviQA works with healthcare teams to design sustainable testing frameworks, from structured processes to long-term QA ownership.