Written by: Dmitry Reznik, Chief Technology Officer

Posted: 26.02.2026

During the rapid growth of digital mental-health platforms post-2020, many teams learned the same lesson the hard way: UX regressions hurt retention faster than functional bugs. When products are used in emotionally sensitive contexts, even small quality slips that mental-health software testing catches too late erode trust.

This places unique pressure on QA as wellbeing apps scale. Test coverage that worked at MVP stage quickly becomes inadequate, not because features are complex, but because usage is.

Based on hands-on work with Thrive, this article outlines how QA maturity and mental-health app testing practices must evolve as products move from early growth to scale.

Why scaling QA is especially hard for mental-health apps

Mental-health apps don’t fail like e-commerce or fintech, and the data is clear on that.

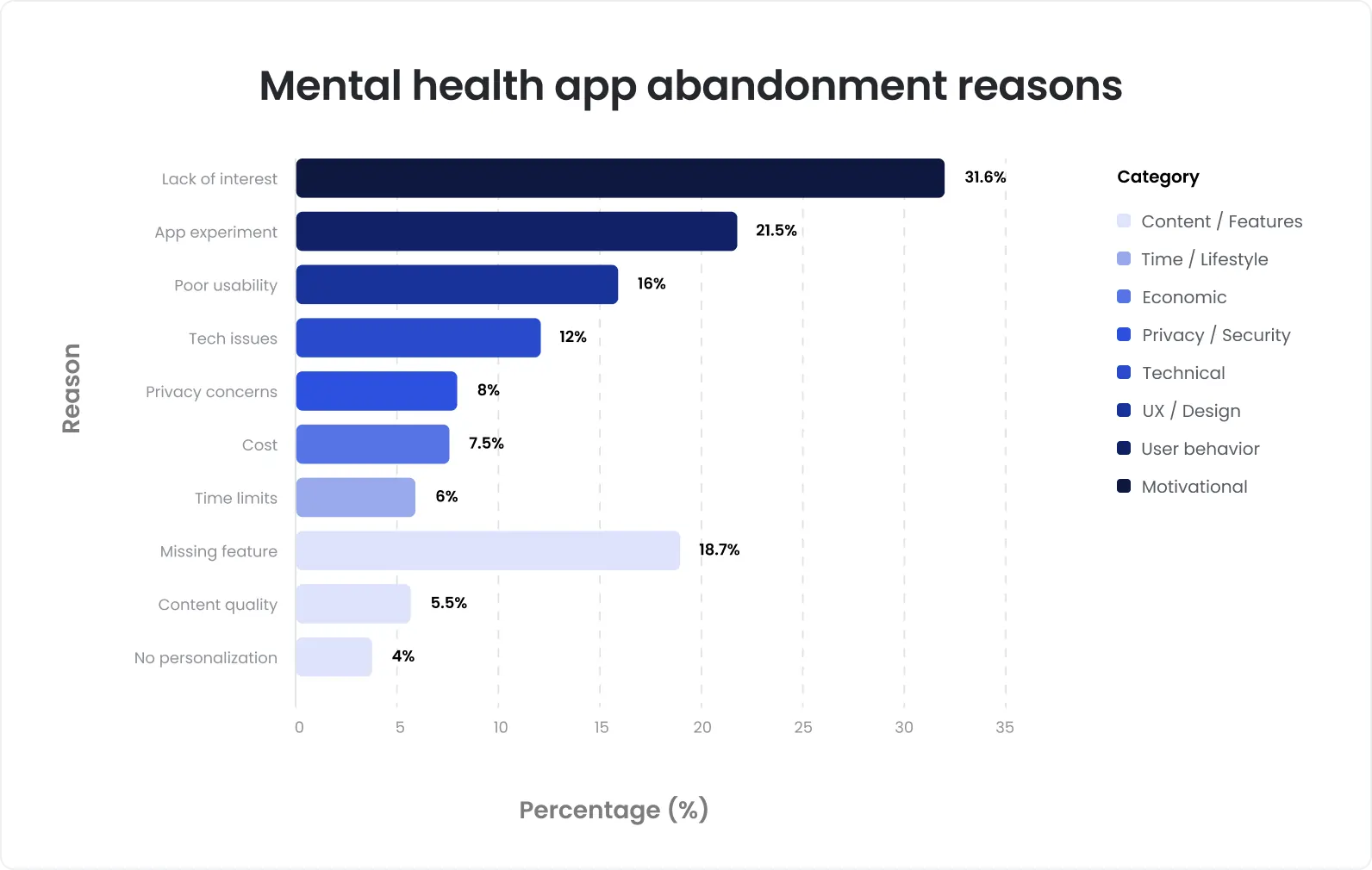

Studies show that 50–70% of mental-health app users drop off within the first month, often without reporting a single defect. The primary drivers aren’t missing features or performance outages; they’re UX friction, confusing flows, and loss of trust at critical moments.

When users interact in emotionally sensitive states, their tolerance for inconsistency is extremely low. Mental-health app UX testing has to catch issues such as:

A delayed response during a check-in

A flow that suddenly changes after an update

An unclear or inconsistent UI decision

These don’t register as “bugs” in analytics dashboards. They register as disengagement.

This reshapes the quality equation. In mental-health products, UX reliability often has a greater impact on retention than feature depth. Research across digital wellbeing platforms shows that users will stay with simpler apps if interactions feel stable, predictable, and supportive, and will abandon more advanced solutions when the experience becomes unreliable.

As usage grows, lightweight QA approaches in mental-health software testing start to break:

The number of distinct user journeys multiplies as daily engagement patterns diversify

Client-facing and admin tools evolve simultaneously

Frequent small releases introduce silent regressions

Manual, ad-hoc testing becomes inconsistent and hard to repeat

Industry benchmarks indicate that once a product exceeds hundreds of weekly interactions per user, informal regression checks no longer scale. Coverage gaps appear, not because teams stop testing, but because volume outpaces structure.

What Thrive looked like before scalable QA

Before QA was scaled deliberately, Thrive was in a phase many growing wellbeing platforms recognize.

The product was expanding quickly, with client-facing applications and an admin platform evolving in parallel. New features and UX improvements were shipping regularly to support growth and engagement.

QA was present, but not yet structured for scale:

Test documentation existed, but coverage was partial and uneven

Regression checks relied heavily on manual effort and individual testers’ context

Release validation worked, but was difficult to repeat consistently

Confidence in releases depended more on experience than on evidence

Nothing was “broken” in an obvious way. But as the product grew, predictability started to erode. Each release carried more risk than the last, simply because it became harder to know what had been fully validated.

This stage is common for mental-health and wellbeing products transitioning beyond MVP. QA exists, effort is high, but structure hasn’t caught up with scale, and release confidence starts to lag behind product velocity.

The first scaling problem: Volume, not complexity

One of the clearest lessons from Thrive was this: QA for the mental-health app didn’t come under strain because the product became complex, but because test volume exploded.

The features themselves stayed understandable. What changed was how much there was to verify.

As the platform grew, QA had to account for:

Thousands of user journeys, driven by high-frequency daily interactions

Multiple roles and permission levels across client and admin experiences

Frequent, incremental releases, where small changes quietly affected existing flows

Each individual update looked safe. Collectively, they created uncertainty.

Without structure, QA effort stopped scaling. Manual checks covered less ground with each release, regressions became harder to predict, and confidence degraded even when defect counts didn’t spike.

At this point, adding more effort didn’t solve the problem. Only better coverage design did. QA needed to shift from “checking changes” to systematically protecting core journeys, across users, roles, and releases.

This was the moment where scaling QA stopped being optional and started being operationally critical.
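The shift from "checking changes" to protecting core journeys across roles can be illustrated with a small coverage matrix. This is only a sketch under stated assumptions: the journey names, role names, test-case IDs, and the `coverage_gaps` helper are all hypothetical and are not taken from Thrive's actual test documentation.

```python
from itertools import product

# Hypothetical journeys and roles, for illustration only.
JOURNEYS = ["onboarding", "daily_check_in", "content_assignment"]
ROLES = ["client", "admin"]

# Each test case declares the (journey, role) pair it protects.
TEST_CASES = [
    {"id": "TC-001", "journey": "onboarding", "role": "client"},
    {"id": "TC-002", "journey": "daily_check_in", "role": "client"},
    {"id": "TC-003", "journey": "content_assignment", "role": "admin"},
    {"id": "TC-004", "journey": "daily_check_in", "role": "client"},
]

# Pairs that do not apply are excluded explicitly (assumed here: admins
# never go through onboarding or check-ins), so they are not reported as gaps.
NOT_APPLICABLE = {("onboarding", "admin"), ("daily_check_in", "admin")}


def coverage_gaps(journeys, roles, test_cases, not_applicable=frozenset()):
    """Return the applicable (journey, role) pairs that no test case covers."""
    covered = {(tc["journey"], tc["role"]) for tc in test_cases}
    required = set(product(journeys, roles)) - set(not_applicable)
    return sorted(required - covered)


if __name__ == "__main__":
    for journey, role in coverage_gaps(JOURNEYS, ROLES, TEST_CASES, NOT_APPLICABLE):
        print(f"No test case protects '{journey}' for role '{role}'")
```

With this sample data the only reported gap is `content_assignment` for the `client` role. The point of the design is that gaps are derived from the journey and role lists rather than from whatever changed in the latest release, so coverage stays visible even when each individual update looks safe.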

How QA coverage was rebuilt for scale

Scaling QA at Thrive started with a reset: not adding more tests, but rebuilding coverage intentionally around how the product was actually used.

The first step was reviewing and restructuring existing test cases. Fragmented, outdated, and overlapping scenarios were consolidated into clear, role-based coverage. This turned scattered knowledge into controlled documentation that could scale.

Next, QA was split deliberately across the product:

Full regression suites for client-facing applications, covering core user journeys and high-frequency interactions

Dedicated regression coverage for the admin panel, where configuration, permissions, and data control introduced a different risk profile

Structure replaced ad-hoc checks:

Consistent smoke tests validated critical paths on every release

Sanity checks ensured changes didn’t ripple into unrelated areas

Regression became repeatable, not effort-dependent
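One way to make the smoke, sanity, and regression tiers above repeatable is to record them as data on each test case and select the suite per release. The following is a minimal sketch, not Thrive's actual setup: the `Case` fields, the case IDs, and the rule that a sanity run means "smoke paths everywhere plus every case in the changed areas" are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    id: str
    surface: str         # "client" or "admin"
    area: str            # product area the case protects
    smoke: bool = False  # True if the case sits on a critical path


# Hypothetical registry; a real one would hold thousands of entries.
CASES = [
    Case("C-001", "client", "check_in", smoke=True),
    Case("C-002", "client", "check_in"),
    Case("C-003", "client", "onboarding", smoke=True),
    Case("A-001", "admin", "permissions", smoke=True),
    Case("A-002", "admin", "content"),
]


def select_suite(cases, tier, changed_areas=()):
    """Pick the cases to run for a release.

    smoke:      critical paths only, run on every release
    sanity:     smoke paths plus every case in the areas the release touched
    regression: the full suite
    """
    if tier == "smoke":
        return [c for c in cases if c.smoke]
    if tier == "sanity":
        changed = set(changed_areas)
        return [c for c in cases if c.smoke or c.area in changed]
    if tier == "regression":
        return list(cases)
    raise ValueError(f"unknown tier: {tier!r}")


if __name__ == "__main__":
    for case in select_suite(CASES, "sanity", changed_areas=["content"]):
        print(case.id, case.surface, case.area)
```

Because selection depends only on the registry and the list of changed areas, two testers preparing the same release get the same suite, which is what makes regression repeatable rather than effort-dependent.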

From the Thrive case:

6,000+ structured test cases covered client-facing user flows

1,500+ test cases protected admin-side functionality

Manual QA wasn’t removed; it was systematized. Coverage was designed to grow with the product, not fight it.

The result wasn’t just more testing. It was predictable release validation, where teams knew exactly what was protected, and where risk actually lived.

Why mental-health apps need strong manual QA longer than expected

One of the less obvious lessons from Thrive was this: automation alone wasn’t enough, and it shouldn’t be expected to be.

In mental-health apps, many of the most important failure points aren’t technical edge cases. They’re UX nuances:

Tone and clarity of prompts

Flow continuity between screens

The emotional pacing of interactions

How friction shows up during vulnerable moments

These are areas where automation has limits. While automated tests are excellent at protecting logic, data, and repeatability, behavioral flows are still difficult to evaluate without human judgment.

At Thrive, human review remained critical for:

First-time user experiences

Daily check-ins and reflective flows

Transitions between client and admin-driven changes

Small UX shifts that could alter user perception

Manual QA wasn’t treated as something to phase out. It was scaled and systematized:

Clear exploratory scopes defined where human focus added the most value

Structured regression ensured manual testing wasn’t repetitive or chaotic

Test documentation evolved alongside product changes

The result was balance. Automation handled volume and stability. Manual QA protected the qualities mental-health apps depend on most: empathy, clarity, and trust.

Release stability as the real KPI

As QA maturity improved at Thrive, the focus shifted away from raw bug counts. The real signal of quality wasn’t how many issues were found; it was how predictable each release became.

Success started to look different:

Release cycles became reliable, with fewer last-minute delays

Critical production issues dropped, especially in user-facing flows

Teams shipped with confidence, knowing what was covered and why

QA stopped reacting to problems and started preventing them. Validation became structured, repeatable, and aligned with how the product actually changed release to release.

Most importantly, QA no longer blocked delivery. It stabilized it.

At this stage, quality wasn’t measured by effort or volume.

It was measured by how calmly the product could move forward.
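Release predictability can be tracked with a few simple numbers instead of bug counts. The sketch below is illustrative only: the `Release` record, its fields, the sample dates, and the three metrics are assumptions, not figures or definitions from the Thrive engagement.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Release:
    version: str
    planned: date
    shipped: date
    critical_incidents: int  # critical production issues traced to this release


# Made-up sample history.
HISTORY = [
    Release("1.0", date(2025, 1, 10), date(2025, 1, 14), 2),
    Release("1.1", date(2025, 1, 24), date(2025, 1, 24), 0),
    Release("1.2", date(2025, 2, 7), date(2025, 2, 7), 1),
    Release("1.3", date(2025, 2, 21), date(2025, 2, 20), 0),
]


def stability_summary(releases):
    """Summarize how predictable a series of releases was."""
    if not releases:
        raise ValueError("at least one release is required")
    total = len(releases)
    on_time = sum(1 for r in releases if r.shipped <= r.planned)
    clean = sum(1 for r in releases if r.critical_incidents == 0)
    # Early releases count as zero slip rather than negative slip.
    slip_days = [max((r.shipped - r.planned).days, 0) for r in releases]
    return {
        "on_time_rate": on_time / total,
        "clean_release_rate": clean / total,
        "avg_slip_days": sum(slip_days) / total,
    }


if __name__ == "__main__":
    print(stability_summary(HISTORY))
```

For the sample history this yields an on-time rate of 0.75, a clean-release rate of 0.5, and an average slip of 1.0 day. Tracking these per quarter shows whether releases are becoming calmer, independently of how many defects QA logged.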

The value of a long-term QA partnership

Another key insight from Thrive came with time: QA delivers the most value when it’s embedded, not episodic.

QA engineers worked as part of the product team, developing deep context around user behavior, release patterns, and risk areas. This reduced handoffs, surfaced issues earlier, and improved decision-making long before testing phases began.

Test documentation stopped being static. It became living coverage, evolving with features, UX changes, and new usage patterns. What mattered was always current, and what no longer mattered was removed.

Most importantly, QA scaled alongside development, not after incidents or regressions. Coverage expanded as the product did, rather than scrambling to catch up post-release.

This shifted QA from a phase at the end of the cycle into a system that continuously protected release stability, exactly what growing mental-health platforms need as complexity and responsibility increase.
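The idea of living coverage, where current cases stay and obsolete ones are removed, can be supported by a periodic triage of the test documentation. This is a hedged sketch: the feature names, the 180-day review window, and the `triage` helper are hypothetical choices for illustration and do not describe a specific tool or process used at Thrive.

```python
from datetime import date, timedelta

# Hypothetical list of features that still exist in the product.
ACTIVE_FEATURES = {"check_in", "onboarding"}

# Hypothetical test documentation entries.
DOCUMENTED_CASES = [
    {"id": "TC-010", "feature": "check_in", "last_reviewed": date(2025, 5, 1)},
    {"id": "TC-011", "feature": "legacy_journal", "last_reviewed": date(2025, 5, 1)},
    {"id": "TC-012", "feature": "onboarding", "last_reviewed": date(2024, 9, 1)},
]


def triage(cases, active_features, today, max_age=timedelta(days=180)):
    """Sort cases into keep / review / retire buckets.

    retire: the feature no longer exists
    review: the feature exists, but the case was not reviewed within max_age
    keep:   the feature exists and the case was reviewed recently
    """
    report = {"keep": [], "review": [], "retire": []}
    for case in cases:
        if case["feature"] not in active_features:
            report["retire"].append(case["id"])
        elif today - case["last_reviewed"] > max_age:
            report["review"].append(case["id"])
        else:
            report["keep"].append(case["id"])
    return report


if __name__ == "__main__":
    print(triage(DOCUMENTED_CASES, ACTIVE_FEATURES, today=date(2025, 6, 1)))
```

Passing `today` explicitly keeps the function deterministic, so the same triage can be re-run for any past release date when auditing how coverage evolved.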

Key lessons for mental-health product teams

Teams working on mental-health products rarely ignore QA; they delay scaling it. The Thrive experience shows why that’s risky.

QA must scale earlier than teams expect. Once usage grows, informal testing stops keeping up.

UX quality is business-critical in mental health. Small experience regressions drive churn faster than missing features.

Structured regression reduces long-term cost. Clear coverage protects core journeys and stabilizes releases.

Strong QA partnerships outperform reactive testing models. Embedded QA creates continuity, context, and predictability.

If your mental-health or wellbeing product is growing and QA is starting to feel fragile rather than reliable, this is the right moment to act.

At DeviQA, we help mental-health platforms scale quality deliberately, before trust erodes and releases become unpredictable.

Talk to our QA experts to review where your coverage needs structure next.

About the author

Chief Technology Officer

Dmitry Reznik is the Chief Technology Officer and co-founder at DeviQA, bringing deep technical expertise across software architecture, implementation, and long-term system operation.