Written by: Anastasiia Sokolinska, Chief Operating Officer

Posted: 14.11.2016

The first web services were created to provide information to web users. Then new services appeared that allowed the owners of web services to predict load capacity. And with the help of these services, hackers came up with a way to overload any web application.

Any web project, whether it is a weblog or a web application, has an important performance characteristic: its "full load." This metric comes in handy when the web application partially or completely stops serving queries or processing requests from users.

For some owners, this may mean the loss of regular readers; for others, the loss of customers who decide to buy a product on a competitor's website. A distributed denial-of-service attack is not always the reason for crashes. The point is that every web resource has a limit on the number of users it can serve. This fact has forced developers and owners of web applications to pay particular attention to the procedure for testing web services manually.

Software is a service

Desktop applications are gradually moving to the cloud, and the browser is a must for any self-respecting Internet user. The concept of "software as a service" makes our lives easier. No need to bother installing applications and wasting gigabytes of hard drive space. We are no longer tied to a particular workstation with a fixed set of software. On any device, your web browser can turn into Photoshop, an IDE, a notepad, or many other applications.

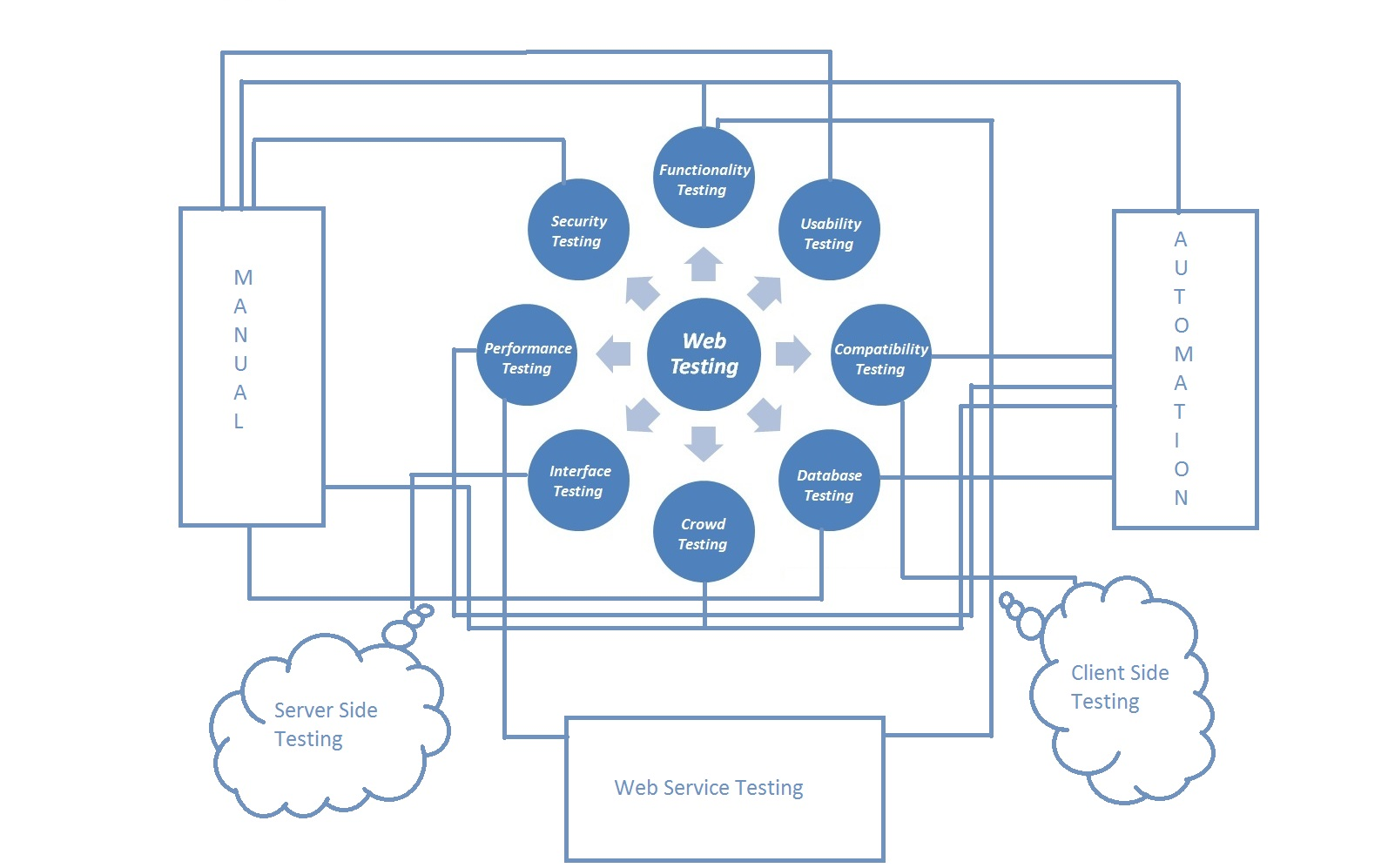

How to test web services manually?

The most common approach is called Capture & Playback (other names - Record & Playback, Capture & Replay). The essence of this approach is that the test cases are based on the user's interaction with the application under test. The tool captures and records user actions; the result of each action is also stored and serves as a reference for future checks.

In the majority of tools that implement this approach, an action (e.g., a mouse click) is associated not with the coordinates of the current mouse position but with the HTML interface objects (buttons, input fields, etc.) on which the action occurs, and with their attributes.

When the testing tool automatically reproduces previously recorded actions and compares the results with the reference, you can adjust the accuracy of the comparison. It is also possible to add extra checks by setting conditions on the properties of objects (color, location, size, etc.) or on application functionality (message content, etc.).
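To make the replay-and-compare idea more concrete, here is a minimal sketch in Python. It is not the format of any real tool: `RecordedStep`, `compare`, `replay`, the element ids, and the `fake_app` stand-in are all hypothetical names invented for illustration. It assumes each recorded step targets an HTML object by id and stores the expected properties as the reference, and that "comparison accuracy" means a numeric tolerance.

```python
from dataclasses import dataclass


@dataclass
class RecordedStep:
    """One captured user action plus the result stored as the reference."""
    element_id: str   # HTML object the action targets, not screen coordinates
    action: str       # e.g. "click"
    expected: dict    # reference properties captured during recording


def compare(expected: dict, actual: dict, tolerance: float = 0.0) -> list:
    """Return the names of properties that differ from the reference.

    Numeric properties may deviate by up to `tolerance`; everything else
    must match exactly.
    """
    mismatches = []
    for key, want in expected.items():
        got = actual.get(key)
        if isinstance(want, (int, float)) and isinstance(got, (int, float)):
            if abs(want - got) > tolerance:
                mismatches.append(key)
        elif want != got:
            mismatches.append(key)
    return mismatches


def replay(steps, perform, tolerance: float = 0.0) -> dict:
    """Re-run each recorded step via `perform` and collect mismatches per element."""
    failures = {}
    for step in steps:
        actual = perform(step.element_id, step.action)
        diff = compare(step.expected, actual, tolerance)
        if diff:
            failures[step.element_id] = diff
    return failures


# Hypothetical recording of two actions (assumed data, for illustration only).
recording = [
    RecordedStep("login-button", "click", {"message": "Welcome", "width": 120}),
    RecordedStep("logout-link", "click", {"message": "Goodbye", "width": 80}),
]


def fake_app(element_id, action):
    """Stand-in for the application under test; the button is now 2px wider."""
    state = {
        "login-button": {"message": "Welcome", "width": 122},
        "logout-link": {"message": "Goodbye", "width": 80},
    }
    return state[element_id]


strict = replay(recording, fake_app)                # exact comparison
relaxed = replay(recording, fake_app, tolerance=5)  # allow small layout drift
print(strict, relaxed)
```

With the exact comparison the slightly wider button is reported as a mismatch, while a tolerance of 5 lets the same run pass. That is the kind of trade-off the accuracy setting controls.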

All commercial testing tools based on this approach store the recorded actions and the expected results in some internal representation. It can be accessed using either a general-purpose programming language (Java in Solex) or the tool's own language (4Test in SilkTest by Segue, SQABasic in Rational Robot by IBM, TSL in WinRunner by Mercury). In addition to UI elements, tools can handle HTTP requests (Solex), the sequence of which can also be recorded along with the user's actions, and then modified and replayed.

Advantages and Disadvantages of Manual Testing

The main advantage of this approach is the ease of development. You can create such tests without programming skills. However, the approach has significant drawbacks. Test development is not automated; in effect, the tool simply records a manual testing process.

If an error is detected during recording, in most cases a test cannot be created for later use until the error is corrected (the tool has to store the correct result to check against). If the application changes, the test suite is hard to keep up to date, because the tests for the changed parts of the application have to be re-recorded.

Another advantage of this approach is that it lets you create tests without waiting for the application release, guided by the requirements and the interface design. The tests created can be used for both automated and manual testing.

The main drawback of this approach is the lack of automation in the test development process. In particular, all the tests are developed by hand, which leads to problems both at the development stage and afterwards. These problems are especially acute when testing web services with a complex interface.

Any Examples?

Formally, load testing is a part of performance testing, which also includes stress testing. In turn, stress testing is the evaluation of the behavior of the target system under a load that goes beyond acceptable values. A particular example of stress testing is a DDoS attack on system components.

For example, suppose we know that our blog can sustain 1,000 simultaneous users. We start by simulating the activity of 50 users, then 100, and finally 900. This is load testing. Then we decide to check what happens if the blog is read by 1,050 users at once, which means that we have begun a stress test.
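The distinction in this example can be written down as a tiny Python sketch. The function name `classify_run` is hypothetical, and treating a run at exactly the known capacity as a load test is an assumption made here; the text only says that stress testing goes beyond acceptable values.

```python
def classify_run(simulated_users: int, capacity: int) -> str:
    """Label a test run by comparing the simulated load with the known capacity.

    Assumption: a run at or below capacity counts as load testing,
    anything above it as stress testing.
    """
    return "load test" if simulated_users <= capacity else "stress test"


CAPACITY = 1000                # the blog's known limit from the example
plan = [50, 100, 900, 1050]    # simulated user counts from the example

labels = {users: classify_run(users, CAPACITY) for users in plan}
for users, label in labels.items():
    print(f"{users} users: {label}")
```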

Performance on the Web scene

The goal: create a controlled load on the service, one that is also expected to exceed the current limit (if we want to predict our service's point of failure). The solution: easy as pie. You can ask the owner of another web service to redirect their users to your site. In this case, you need to find a kind owner whose number of sessions significantly exceeds that of your site.

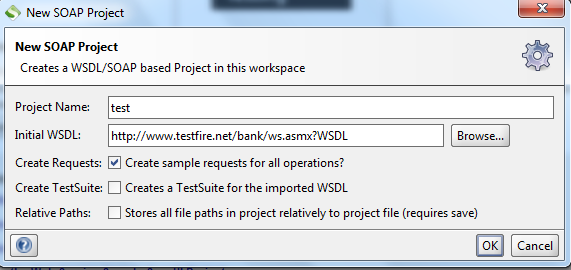

Alternatively, you can configure a standalone application like Apache JMeter: write a script describing user behavior and send it to wander through your web application. However, simulating hundreds of users from a single local machine will hardly create a significant load on a more or less serious application. In this case, it is better to distribute the script across several machines or use a cloud IaaS platform like Amazon EC2.
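As a rough illustration of what "simulating users" means, the sketch below uses only the Python standard library. It is not JMeter and does not reflect JMeter's script format. It starts a tiny local demo server (a stand-in for the real application) and runs a handful of concurrent virtual users against it. The names `DemoHandler`, `simulated_user`, and `run_load`, as well as the user and request counts, are assumptions chosen for the example.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from urllib.request import urlopen


class DemoHandler(BaseHTTPRequestHandler):
    """Tiny local stand-in for the web application under test."""

    def do_GET(self):
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the console quiet during the run


def simulated_user(url: str, requests_per_user: int) -> list:
    """One virtual user: fetch the page several times and time each request."""
    timings = []
    for _ in range(requests_per_user):
        started = time.perf_counter()
        with urlopen(url, timeout=10) as response:
            response.read()
            ok = response.status == 200
        timings.append((ok, time.perf_counter() - started))
    return timings


def run_load(url: str, users: int, requests_per_user: int) -> dict:
    """Run `users` virtual users concurrently and summarize the results."""
    with ThreadPoolExecutor(max_workers=users) as pool:
        futures = [pool.submit(simulated_user, url, requests_per_user)
                   for _ in range(users)]
        results = [item for future in futures for item in future.result()]
    return {
        "requests": len(results),
        "failures": sum(1 for ok, _ in results if not ok),
        "slowest_seconds": max(duration for _, duration in results),
    }


# Start the demo server on a free local port (port 0 lets the OS choose one).
server = ThreadingHTTPServer(("127.0.0.1", 0), DemoHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()
target = f"http://127.0.0.1:{server.server_address[1]}/"

summary = run_load(target, users=10, requests_per_user=5)
server.shutdown()
server.server_close()
print(summary)
```

Because both the virtual users and the server share one machine here, the numbers say little about a real deployment. That is exactly the limitation described above, and the reason for spreading the load generators across several machines.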

About the author

Chief Operating Officer

Anastasiia Sokolinska is the Chief Operating Officer at DeviQA, responsible for operational strategy, delivery performance, and scaling QA services for complex software products.